The Reality No One Tells You

You can spend months learning AI, ML, Python, LLMs, LLMOps, RAG, MCP, and still fail in a real job.

Why?

Because companies are not struggling to find people who can call an API.

They are struggling to find engineers who can design systems that don’t break under pressure.

In interviews today, whether it’s for AWS, Azure, or high-paying startups, you’ll notice something:

They don’t just ask “what is RAG?”

They ask:

- How will you scale it?

- What happens if traffic spikes?

- How do you prevent duplicate requests?

- How do you reduce LLM cost?

That’s where system design patterns separate average developers from high-value AI engineers.

The Hidden Gap in Most AI Engineers

Let’s be brutally honest.

Most AI engineers today:

- Know how to use LLMs and APIs

- Can build basic chat apps

- Understand prompt engineering

But they struggle with:

- Handling 10,000+ concurrent users

- Designing fault-tolerant systems

- Optimizing latency and cost

- Managing real-time + async workflows together

This gap is exactly why companies are paying a premium for engineers who understand system design + AI together.

What Changes When You Understand System Design?

When you start applying these patterns, your mindset shifts completely.

You stop thinking like:

“How do I build this feature?”

And start thinking like:

“How will this system behave under scale, failure, and cost constraints?”

That’s the mindset of a Cloud AI Engineer in 2026.

AI is no longer just about models; it’s about systems.

Modern AI applications include:

- LLMs + APIs + Databases + Vector Stores

- Agentic AI workflows with MCP (Model Context Protocol)

- RAG pipelines for enterprise data

- Real-time inference + async processing pipelines

Without strong system design:

- Latency increases

- Costs explode

- Failures multiply

- User experience collapses

With strong system design:

- Systems scale smoothly

- AI becomes reliable

- Costs stay optimized

- Deployment becomes faster

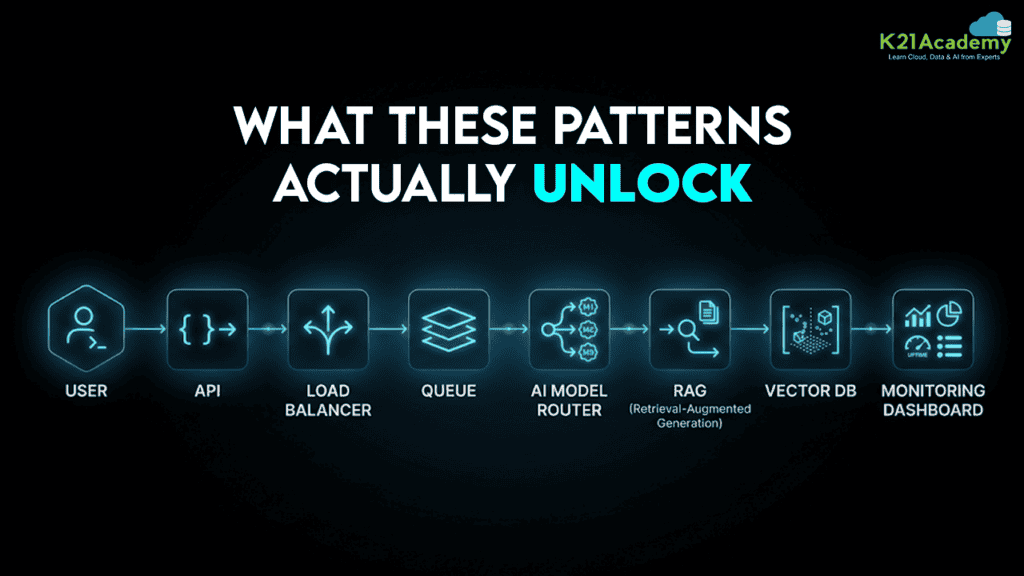

Deep Dive: What These Patterns Actually Unlock

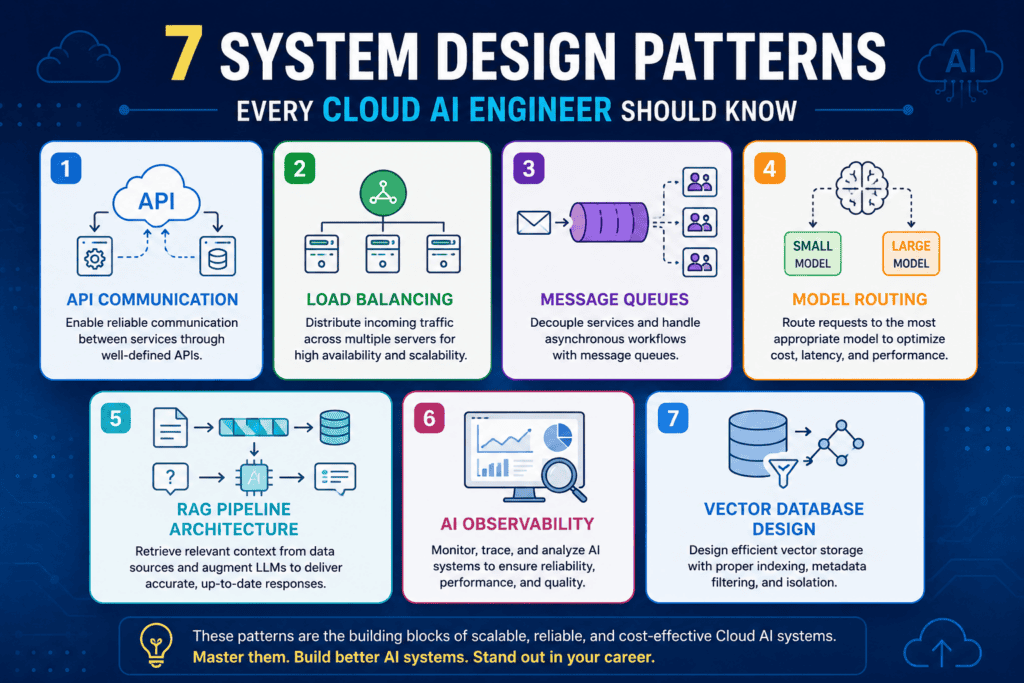

These seven patterns are not isolated; they work together in real systems.

Imagine building a production AI assistant:

- API Communication handles user requests

- Load Balancer distributes traffic

- Message Queue processes heavy tasks

- Model Routing reduces cost

- RAG Pipeline adds knowledge

- Vector DB stores memory

- Observability tracks everything

This is not theory.

This is exactly how modern AI systems at scale are built.

1. API Communication

Services in a distributed system need a way to talk to each other. An API defines that contract. One service says: send me a request in this format, and I’ll send back a response in this format.

Two Modes:

1. Sync (Synchronous)

- One service calls another and waits

- Used when immediate response is required

- Technologies: REST, gRPC

2. Async (Asynchronous)

- Service sends request and moves on

- Used for background tasks

- Examples: document processing, batch inference

Critical Concept: Idempotency

If a request is retried, it should return the same result as the first attempt and must not repeat side effects such as a second charge.

Why It Matters

Without proper API design:

- Duplicate transactions

- Lost responses

- Silent failures

Real Scenario

A user places an order → payment succeeds → response fails → retry → double charge

Missing: Idempotency + retry strategy
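
One common way to close this gap is an idempotency key: the client sends the same key on every retry, and the server returns the stored response instead of charging again. The sketch below is a toy, assuming an in-memory dictionary and a hypothetical `PaymentService` class; a real service would need a durable store and an atomic check-and-set.

```python
class PaymentService:
    """Toy in-memory payment service (hypothetical, for illustration only)."""

    def __init__(self):
        self._responses = {}  # idempotency key -> response already returned
        self.charges = []     # every charge that was actually executed

    def charge(self, idempotency_key: str, user_id: str, amount: int) -> dict:
        # A retry carries the same key, so replay the stored response
        # instead of executing the charge a second time.
        if idempotency_key in self._responses:
            return self._responses[idempotency_key]

        self.charges.append((user_id, amount))
        response = {"charge_id": len(self.charges), "user_id": user_id, "amount": amount}
        self._responses[idempotency_key] = response
        return response


service = PaymentService()
first = service.charge("order-123", "user-1", 500)
# The response is lost on the network, so the client retries with the same key.
retry = service.charge("order-123", "user-1", 500)
```

Here `first` and `retry` are the same response, and only one entry ends up in `service.charges`.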

2. Load Balancing

A load balancer distributes incoming traffic across multiple servers.

Types:

1. Round Robin

- Equal distribution

- Ignores server load

2. Least Connections

- Sends each request to the server with the fewest active connections

- Better for uneven workloads

Why It Matters

Without load balancing:

- One server gets overloaded

- Others remain idle

- Performance becomes inconsistent

Scenario

Traffic spikes → one server slows → some users face 10x latency

Missing: Intelligent load balancing
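
The difference between the two strategies is easy to see in code. This is a simplified sketch with hypothetical class names; real load balancers (managed cloud ones included) also handle health checks, weights, and timeouts.

```python
import itertools

class RoundRobinBalancer:
    """Hands out servers in a fixed rotation, regardless of how busy they are."""

    def __init__(self, servers):
        self._cycle = itertools.cycle(servers)

    def pick(self):
        return next(self._cycle)


class LeastConnectionsBalancer:
    """Tracks active connections and picks the least busy server."""

    def __init__(self, servers):
        self.active = {server: 0 for server in servers}

    def acquire(self):
        # Choose the server with the fewest in-flight requests.
        server = min(self.active, key=self.active.get)
        self.active[server] += 1
        return server

    def release(self, server):
        # Call this when the request finishes.
        self.active[server] -= 1


rr = RoundRobinBalancer(["a", "b", "c"])
rotation = [rr.pick() for _ in range(4)]

lc = LeastConnectionsBalancer(["a", "b"])
lc.acquire()            # goes to "a"
lc.acquire()            # goes to "b"
lc.release("b")         # "b" finishes first
next_server = lc.acquire()
```

With long-running LLM requests, connection counts tend to be a better signal than rotation order, because one slow generation can keep a server busy far longer than its neighbours.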

3. Message Queues

A queue acts as a buffer between services.

How It Works

- Producer sends job → queue stores it

- Worker processes when ready

- If processing fails → the job is retried

Tools

- AWS SQS

- Apache Kafka

- RabbitMQ

Why It Matters

Without queues:

- System blocks

- Requests fail under load

- No fault tolerance

Scenario

500 users upload documents → system can process only 20 at a time

Without queue: 480 failures

With queue: All processed gradually
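
The scenario above can be simulated with Python's standard `queue` and `threading` modules. This is a sketch under stated assumptions: `process_document` is a hypothetical task that fails once for every 50th document, and `MAX_ATTEMPTS` is an assumed retry limit. A managed queue such as SQS or RabbitMQ plays the role of `queue.Queue` in a real deployment.

```python
import queue
import threading

MAX_ATTEMPTS = 3  # assumed retry limit

def process_document(doc_id: int, attempt: int) -> str:
    # Hypothetical task: every 50th document fails on its first attempt.
    if doc_id % 50 == 0 and attempt == 1:
        raise RuntimeError("transient failure")
    return f"doc-{doc_id} processed"

def run_pipeline(num_docs: int, num_workers: int):
    jobs = queue.Queue()
    results, failed = [], []
    lock = threading.Lock()

    def worker():
        while True:
            item = jobs.get()
            if item is None:          # sentinel: no more work
                jobs.task_done()
                return
            doc_id, attempt = item
            try:
                output = process_document(doc_id, attempt)
                with lock:
                    results.append(output)
            except RuntimeError:
                if attempt < MAX_ATTEMPTS:
                    jobs.put((doc_id, attempt + 1))  # put the job back for a retry
                else:
                    with lock:
                        failed.append(doc_id)
            finally:
                jobs.task_done()

    threads = [threading.Thread(target=worker) for _ in range(num_workers)]
    for t in threads:
        t.start()
    # Producer: all uploads are accepted immediately, even though
    # only num_workers of them are processed at any moment.
    for doc_id in range(1, num_docs + 1):
        jobs.put((doc_id, 1))
    jobs.join()                       # wait until every job, including retries, is done
    for _ in threads:
        jobs.put(None)
    for t in threads:
        t.join()
    return results, failed

results, failed = run_pipeline(num_docs=500, num_workers=20)
```

All 500 documents end up in `results` and `failed` stays empty: the queue absorbs the burst, 20 workers drain it at their own pace, and the transient failures are retried instead of being lost.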

4. Model Routing

Not every request needs a large LLM.

Concept

Route requests to different models based on complexity:

- Small model → simple queries

- Large model → complex reasoning

Routing Methods

- Rule-based (prompt length, type)

- Classifier-based (a small model predicts how complex the request is)

Why It Matters

- Reduces cost dramatically

- Improves latency

- Optimizes resource usage

Scenario

10,000 requests/day

- 70% simple

- 30% complex

Without routing → all 10,000 requests pay the large-model price

With routing → only the 30% that need the large model pay for it
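
A rule-based router and the cost arithmetic for this scenario might look like the sketch below. The per-request prices, the keyword list, and the word-count threshold are all illustrative assumptions, not real provider pricing or a recommended rule set.

```python
# Assumed, illustrative prices per request (real cost depends on provider and tokens).
COST_PER_REQUEST = {"small": 0.001, "large": 0.010}

# Assumed hints that a prompt needs deeper reasoning.
REASONING_HINTS = ("why", "compare", "analyze", "step by step", "explain")

def route(prompt: str, max_simple_words: int = 30) -> str:
    """Rule-based routing: long or reasoning-heavy prompts go to the large model."""
    text = prompt.lower()
    if len(text.split()) > max_simple_words:
        return "large"
    if any(hint in text for hint in REASONING_HINTS):
        return "large"
    return "small"

def daily_cost(requests_per_day: int, simple_share: float, routed: bool) -> float:
    """Daily spend with and without routing, using the assumed prices above."""
    if not routed:
        return requests_per_day * COST_PER_REQUEST["large"]
    simple = requests_per_day * simple_share
    complex_requests = requests_per_day - simple
    return (simple * COST_PER_REQUEST["small"]
            + complex_requests * COST_PER_REQUEST["large"])

unrouted = daily_cost(10_000, 0.7, routed=False)
routed = daily_cost(10_000, 0.7, routed=True)
```

With these assumed prices, 10,000 requests a day cost about $100 when everything goes to the large model and about $37 with routing (7,000 × $0.001 + 3,000 × $0.010), a saving of roughly 63%. The real saving depends entirely on the price gap between models and on how accurately the router classifies requests.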

5. RAG Pipeline Architecture

RAG (Retrieval-Augmented Generation) connects AI with real data.

Two Phases:

1. Ingestion

- Split documents

- Convert to vectors

- Store in vector DB

2. Query

- Convert query → vector

- Retrieve similar chunks

- Send to LLM
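
Both phases fit in a small toy pipeline. Everything here is a stand-in: `embed` is a bag-of-words counter rather than a real embedding model, `ToyVectorStore` is an in-memory list rather than a vector database, splitting on blank lines is an assumed chunking rule, and the final prompt is built but not sent to any LLM.

```python
import math
import re
from collections import Counter

def embed(text: str) -> Counter:
    # Toy stand-in for an embedding model: a bag-of-words count vector.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[token] * b[token] for token in a)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0

class ToyVectorStore:
    def __init__(self):
        self.items = []  # list of (vector, chunk text)

    def ingest(self, document: str):
        # Ingestion phase: split -> convert to vectors -> store.
        for chunk in document.split("\n\n"):
            if chunk.strip():
                self.items.append((embed(chunk), chunk.strip()))

    def retrieve(self, query: str, k: int = 1):
        # Query phase: convert the query to a vector, rank chunks by similarity.
        q = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[0]), reverse=True)
        return [chunk for _, chunk in ranked[:k]]

def build_prompt(query: str, chunks: list) -> str:
    # The retrieved chunks become the context that is sent to the LLM.
    context = "\n".join(chunks)
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"

store = ToyVectorStore()
store.ingest("A refund is issued within 14 days of purchase.\n\n"
             "Support is available by email on weekdays.")
question = "How many days do I have to get a refund?"
top_chunks = store.retrieve(question)
prompt = build_prompt(question, top_chunks)
```

The refund chunk is retrieved because it shares the most terms with the question. A real embedding model would match on meaning rather than exact words, but the ingest, retrieve, and prompt-building steps keep the same shape.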

Key Challenge: Chunking

- Too big → noise

- Too small → lost context
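
A common middle ground is fixed-size chunks with some overlap, so that a sentence cut at a boundary still appears intact in one of the neighbouring chunks. The function below is a minimal word-based sketch; `chunk_words` is a hypothetical helper, and real pipelines often chunk by tokens, sentences, or document structure instead.

```python
def chunk_words(text: str, chunk_size: int, overlap: int) -> list[str]:
    """Split text into chunks of chunk_size words, repeating `overlap` words
    between consecutive chunks."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be >= 0 and smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks

chunks = chunk_words("one two three four five six seven eight nine ten",
                     chunk_size=4, overlap=1)
# chunks == ["one two three four", "four five six seven", "seven eight nine ten"]
```

Chunk size and overlap are tuning parameters: the right values depend on the documents and on how much context the retrieval step needs, so they are worth evaluating against real queries rather than guessing.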

Why It Matters

RAG powers:

- AI Chatbots

- Knowledge systems

- Enterprise AI

Scenario

Wrong answers despite correct data

Root cause: Poor chunking or retrieval

6. AI Observability

Traditional monitoring is not enough for AI systems.

Metrics That Actually Matter

- TTFT (Time to First Token) → perceived speed

- Token Throughput → capacity

- P99 Latency → tail latency (99% of requests finish faster than this)

- Queue Depth → backlog

Why It Matters

Without observability:

- You don’t know what’s broken

- Users suffer silently

Scenario

System looks healthy

Users say “AI is slow”

Issue: Average latency looks fine, while TTFT and P99 latency are never measured
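
A small sketch shows how a healthy-looking average can hide slow requests, and how TTFT can be measured around any token stream. The latency numbers are made up for illustration, and `percentile` and `measure_stream` are hypothetical helpers rather than part of any monitoring library.

```python
import math
import time

def percentile(values, pct: int):
    """Nearest-rank percentile: the smallest value that at least pct% of samples do not exceed."""
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]

def measure_stream(token_stream):
    """Consume a token iterator and return (ttft_seconds, total_seconds, token_count)."""
    start = time.perf_counter()
    ttft = None
    count = 0
    for _ in token_stream:
        if ttft is None:
            ttft = time.perf_counter() - start  # time to first token
        count += 1
    total = time.perf_counter() - start
    return ttft, total, count

# Made-up sample: 98 fast requests and 2 very slow ones, in milliseconds.
latencies_ms = [200] * 98 + [4000] * 2
mean_ms = sum(latencies_ms) / len(latencies_ms)
p50_ms = percentile(latencies_ms, 50)
p99_ms = percentile(latencies_ms, 99)

ttft, total, tokens = measure_stream(iter(["Hel", "lo", "!"]))
```

In this sample the mean is 276 ms and the median is 200 ms, so a dashboard built on averages looks healthy, while the P99 of 4,000 ms shows that some users wait twenty times longer than the median.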

7. Vector Database Design

Vector DB = memory layer of AI systems

Key Concepts

1. Metadata Filtering

- Limits search scope

- Improves relevance

2. Namespace Isolation

- Separates client data

3. Embedding Consistency

- Same model for indexing + querying

Why It Matters

Poor vector design = bad AI responses

Scenario

Client A sees Client B data

Issue: No data isolation
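
The three concepts can be enforced at the storage layer so that application code cannot forget them. The class below is an in-memory sketch with hypothetical names (`TenantVectorStore`, the `embed-v1` model label); real vector databases expose namespaces and metadata filters through their own APIs.

```python
import math

def _cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

class TenantVectorStore:
    def __init__(self, embedding_model: str):
        self.embedding_model = embedding_model
        self._namespaces = {}  # namespace -> list of (vector, text, metadata)

    def _check_model(self, embedding_model: str):
        # Embedding consistency: vectors from different models are not comparable.
        if embedding_model != self.embedding_model:
            raise ValueError(f"index uses {self.embedding_model}, got {embedding_model}")

    def upsert(self, namespace, vector, text, metadata, embedding_model):
        self._check_model(embedding_model)
        self._namespaces.setdefault(namespace, []).append((vector, text, metadata))

    def query(self, namespace, vector, embedding_model, where=None, k=3):
        self._check_model(embedding_model)
        # Namespace isolation: only this client's records are ever candidates.
        candidates = self._namespaces.get(namespace, [])
        # Metadata filtering: narrow the search scope before ranking.
        if where:
            candidates = [r for r in candidates
                          if all(r[2].get(key) == value for key, value in where.items())]
        ranked = sorted(candidates, key=lambda r: _cosine(vector, r[0]), reverse=True)
        return [text for _, text, _ in ranked[:k]]

store = TenantVectorStore("embed-v1")
store.upsert("client-a", [1.0, 0.0], "A: pricing sheet", {"type": "pricing"}, "embed-v1")
store.upsert("client-a", [0.9, 0.1], "A: holiday policy", {"type": "hr"}, "embed-v1")
store.upsert("client-b", [1.0, 0.0], "B: pricing sheet", {"type": "pricing"}, "embed-v1")

client_a_hits = store.query("client-a", [1.0, 0.0], "embed-v1")
hr_only = store.query("client-a", [1.0, 0.0], "embed-v1", where={"type": "hr"})
```

Client A's query never returns Client B's pricing sheet, even though the two vectors are identical, because the namespace is applied before any similarity ranking happens.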

Salary Comparison: With vs Without System Design

Let’s talk about what actually matters: money and growth.

Without System Design Knowledge

- Role: Junior Developer / Basic AI Engineer

- Skills: Python, APIs, basic ML

- Salary (India): ₹4 – 8 LPA

- Salary (Global): $50K – $80K

- Work: API integrations, small features, support-level tasks

With System Design + AI Knowledge

- Role: Cloud AI Engineer / AI Architect / GenAI Engineer

- Skills: system design, RAG pipelines, AI integrations, distributed systems, AWS / Azure architecture

- Salary (India): ₹12 – 35 LPA

- Salary (Global): $120K – $200K+

The Real Difference

It’s not just salary; it’s responsibility and impact.

- One group builds features

- The other builds platforms

And platforms are where the money is.

Productivity & Efficiency Impact

Let’s compare two engineers working on the same AI project.

Engineer A (No Design Knowledge)

- Sends all requests to one model

- No queue → system crashes under load

- No caching → repeated work

- No observability → debugging takes hours

Engineer B (With Design Patterns)

- Uses model routing → reduces cost by 60%

- Uses queues → handles spikes smoothly

- Uses load balancing → stable performance

- Uses observability → fixes issues fast

Result

Engineer B:

- Delivers faster

- Builds scalable systems

- Gets promoted faster

Engineer A:

- Constantly firefighting issues

Real Industry Insight

Companies like Amazon, Microsoft, and top AI startups are no longer impressed by:

- “I built a chatbot”

- “I used OpenAI API”

They want to see:

- How your system behaves under load

- How you reduce inference cost

- How you design fault tolerance

- How you structure AI workflows

That’s why interviews now include:

- System design rounds

- Architecture discussions

- Real-world scenarios

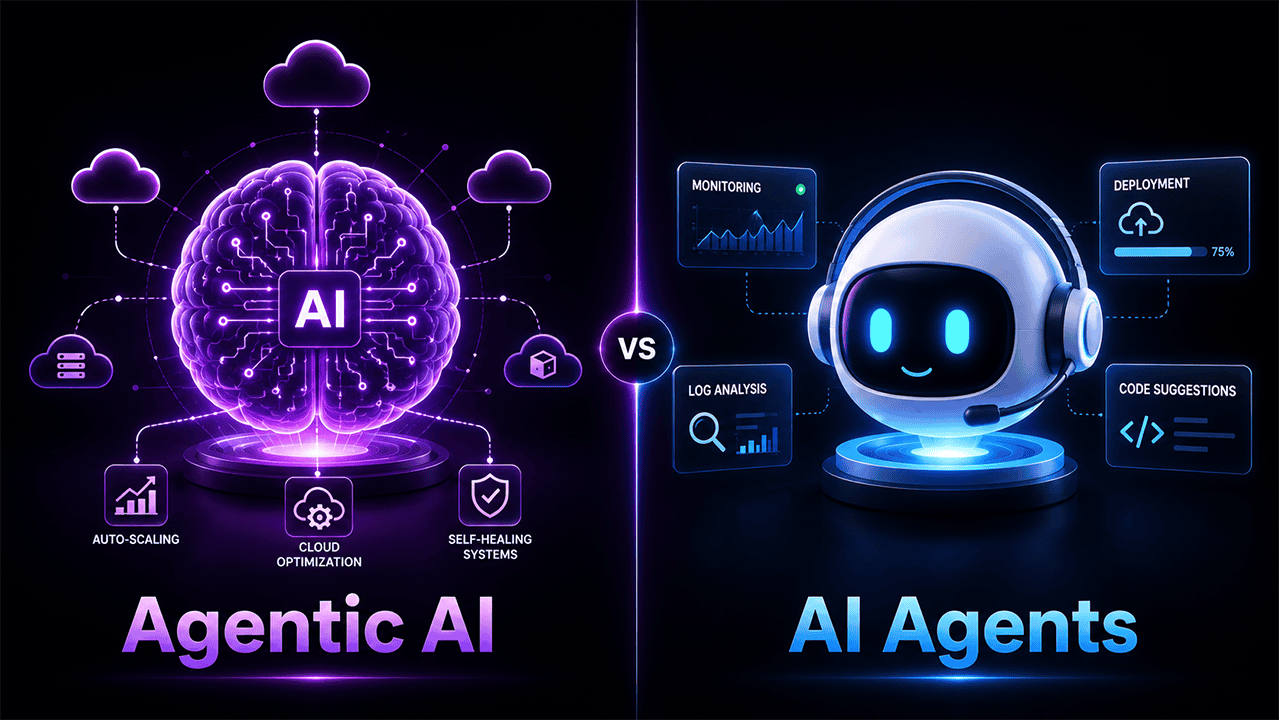

Advanced Layer: Where AI Meets System Design

This is where things get interesting.

Modern AI systems are not just APIs; they are intelligent systems.

Agentic AI Systems

- Multiple agents working together

- Require orchestration

- Need deliberate multi-agent design

- Depend on queues + APIs + memory

MCP (Model Context Protocol)

- Standardizes tool interaction

- Enables structured agent workflows

- Requires strong system design

AI/ML Workflows

- Data ingestion → processing → inference → feedback loop

- Requires pipelines, queues, and observability

If you don’t understand system design, these concepts remain theoretical.

If you do, you can actually build them.

Why Most Engineers Stay Stuck?

Here’s the uncomfortable truth.

Most engineers avoid system design because:

- It feels complex

- It’s not taught properly

- It requires thinking beyond coding

But that’s exactly why it pays more.

Easy skills → high competition → low pay

Hard skills → high demand → high pay

The Career Shift That Changes Everything

When you master these patterns, your career shifts from:

Developer → Engineer → Architect

You move from writing code to designing systems.

And that’s where:

- Leadership roles come

- High salaries come

- Global opportunities open

How to Start (Practical Approach)

If you want to actually apply this, not just read it, here’s what you should do:

Step 1: Pick One Cloud

Start with a single platform: AWS, Azure, or GCP.

Step 2: Build a Real Project

Example:

- AI chatbot with RAG

- Add queue + load balancing + routing

Step 3: Add Observability

Track:

- Latency

- Token usage

- Errors

Step 4: Optimize

- Reduce cost

- Improve response time

- Handle failures

This is how you go from learner → builder.

Cloud AI Systems: AWS vs Azure vs GCP

These patterns apply across all cloud platforms:

- AWS → SQS, Lambda, Bedrock

- Azure → Azure AI Services, Service Bus, Azure OpenAI

- GCP → Vertex AI, Pub/Sub

The tools change.

The architecture stays the same.

AI vs Traditional Systems

| Feature | Traditional Systems | AI Systems |
| --- | --- | --- |
| Logic | Rule-based | Probabilistic |
| Debugging | Easy | Complex |
| Scaling | Predictable | Dynamic |
| Monitoring | CPU/Memory | Tokens/Latency |
| Data | Structured | Unstructured |

Key Takeaways

- AI success = Model + System Design

- APIs, queues, and load balancing are foundational

- RAG and vector DBs power modern AI

- Observability is critical for production

- Model routing saves cost at scale

AI is evolving fast, but system design is the multiplier.

Anyone can use AI tools.

Very few can build AI systems that scale, survive, and succeed in production.

And those few are the ones:

- Getting hired faster

- Getting paid more

- Building real-world AI products

Final Thought

If you want to stay average, learn tools.

If you want to grow, learn AI.

But if you want to become top-tier in 2026,

learn how to design AI systems.

Because in the end, models generate responses. Systems deliver value.

Frequently Asked Questions (FAQs)

Q1. What happens if I only learn AI tools but ignore system design?

You may still build small demos, but production systems can fail under real-world pressure. Companies now expect engineers to handle scalability, reliability, and cost optimization, not just API integration.

Q2. Can poor system design actually destroy an AI product?

Yes. Without queues, load balancing, and observability, traffic spikes can crash systems, increase latency, and create huge operational costs. Many AI products fail because the architecture cannot handle production workloads.

Q3. Is learning only prompt engineering enough to get high-paying AI jobs?

Not anymore. Prompt engineering helps at the beginner level, but senior AI roles now demand knowledge of distributed systems, RAG pipelines, model routing, and cloud architecture.

Q4. What if my AI application suddenly gets 10,000 users overnight?

Without scalable architecture, the system may slow down, crash, or generate failed requests. Proper load balancing, async queues, and caching are critical for handling sudden growth safely.

Q5. Can ignoring AI observability become dangerous for businesses?

Absolutely. If you cannot monitor latency, token usage, or failures, users may experience issues long before your team notices them. Hidden performance problems can damage trust and revenue.

Q6. Why are companies rejecting AI engineers who only know APIs?

Because APIs are the easy part. Businesses need engineers who understand fault tolerance, scaling, system reliability, and cost-efficient AI workflows in production environments.

Q7. What is the biggest mistake beginners make while building AI systems?

Most beginners focus only on model output quality and ignore infrastructure. Without strong backend architecture, even powerful AI models become unreliable and expensive to maintain.

Q8. Can bad vector database design create security risks?

Yes. Poor namespace isolation or metadata filtering can expose one client’s data to another. In enterprise AI systems, weak vector DB design can become a serious privacy issue.

Q9. Will AI engineers without cloud and system design skills struggle in the future?

Very likely. Modern AI systems depend heavily on cloud infrastructure, distributed services, and scalable workflows. Engineers without these skills may find it difficult to move into advanced AI roles.

Q10. Is AI system design harder than learning AI models themselves?

For many engineers, yes. Models can often be accessed through APIs, but designing systems that remain stable, fast, and cost-efficient under pressure requires much deeper engineering knowledge.
