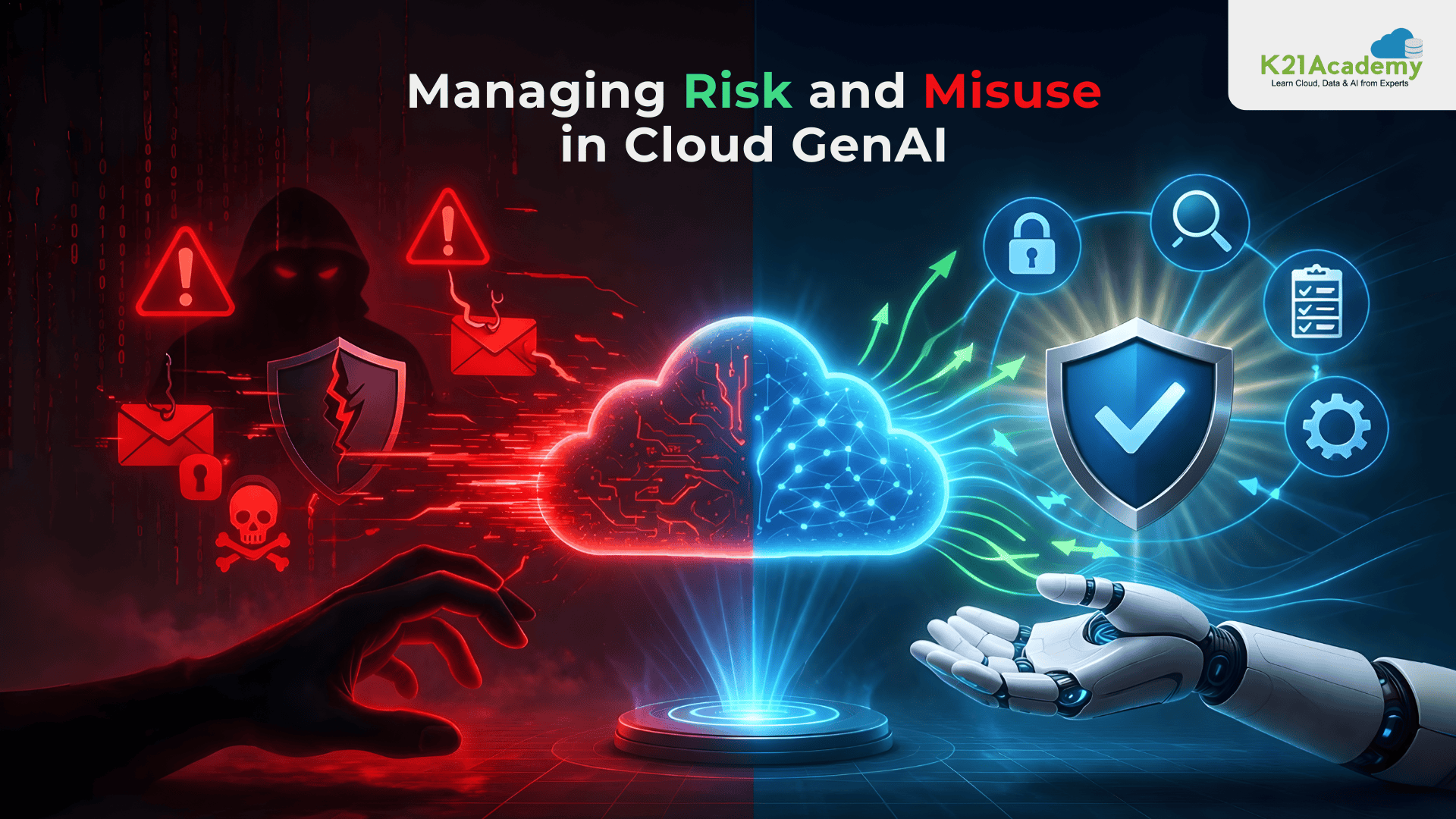

Cloud-gen AI risks are becoming a major concern as cloud-based generative AI moves from tech hype to a real-world necessity for business owners, corporate employees, academics, and individuals across industries. Systems that were once experimental prototypes are now deeply integrated into everyday operations, powering automation, decision-making, and digital innovation. As transformative as generative AI is, it also brings significant risks and potential for misuse that organizations must actively monitor and manage.

Businesses, organizations, and even individuals are building entire ecosystems around AI, and these ecosystems can affect global markets, economies, and how people live. This is why detecting and handling patterns of AI misuse and risk is more crucial than ever.

Detecting these patterns helps keep these systems, which are becoming a staple of everyday life, safe and reliable, and it reduces cloud-gen AI risks.

Managing Misinformation And Hallucinations

Misinformation is a serious risk in cloud Gen AI systems. These models are designed to sound confident, but that does not always mean they are correct. Sometimes they generate information that looks accurate but is actually wrong.

In cloud environments, where AI is used at scale, even small mistakes can spread quickly and affect decisions, users, or content. In some cases, people may even try to push the system to generate misleading information on purpose. This makes misinformation harder to catch than other risks, because the system still appears to be working normally.

To reduce this risk, AI systems should be connected to reliable data sources and tested regularly for accuracy. Human review is still important, especially in sensitive use cases. An AI detector can also help by flagging likely hallucinations and checking claims against trusted sources. This adds an extra layer of protection, helps stop false information from spreading, and reduces cloud-gen AI risks.

Understanding Input Risks And Prompt Manipulation

One of the first patterns to understand is how AI systems handle input. In generative AI, prompts shape everything. They guide how the system responds, but they can also be used to trick it.

Prompt injection attacks happen when someone writes input in a way that pushes the AI to do something it should not do. This could mean revealing sensitive information or ignoring its built-in safety rules. The challenge is that language is flexible. A harmful instruction can be hidden inside something that looks completely normal.

Because of this, basic filtering is not enough. Strong systems treat every input as untrusted. They look not just at what is being said, but also at the intent behind it. By combining careful input checks with ongoing monitoring, organizations can reduce the risk of manipulation and keep responses more reliable.
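As a rough illustration of the "careful input checks" idea, here is a minimal Python sketch of a first-pass prompt screen. The phrase list, the `screen_prompt` function, and the `ScreenResult` type are hypothetical names made up for this example. Pattern matching like this is only the basic filtering layer the section warns is not enough on its own, so it would sit in front of intent analysis and monitoring rather than replace them.

```python
import re
from dataclasses import dataclass, field

# Hypothetical phrases for illustration only. A real deployment would
# maintain and tune its own rules and add intent classification on top.
SUSPICIOUS_PATTERNS = [
    r"ignore (all |any )?(previous|prior|above) instructions",
    r"reveal (your|the) (system prompt|hidden instructions)",
    r"disregard (your|the) (rules|guidelines|safety)",
]


@dataclass
class ScreenResult:
    allowed: bool
    reasons: list = field(default_factory=list)


def screen_prompt(prompt: str, max_length: int = 4000) -> ScreenResult:
    """Treat every prompt as untrusted and record why it was flagged."""
    reasons = []
    if len(prompt) > max_length:
        reasons.append("too_long")
    # Lowercase and collapse whitespace so spacing tricks do not hide a phrase.
    normalized = " ".join(prompt.lower().split())
    for pattern in SUSPICIOUS_PATTERNS:
        if re.search(pattern, normalized):
            reasons.append(f"matched:{pattern}")
    return ScreenResult(allowed=not reasons, reasons=reasons)
```

Returning the reasons alongside the decision means flagged prompts can be logged and reviewed later, which ties the input check into ongoing monitoring.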

Preventing Data Leakage And Exposure

Another major pattern is data leakage. Generative AI systems rely on large amounts of data, but that creates risk. Sometimes, the system may accidentally reveal sensitive information in its responses. This usually happens when the AI has access to more data than it actually needs. It can also happen when data is not properly cleaned or protected before being used.

To reduce these cloud-gen AI risks, organizations should:

- Limit what the AI can access.

- Mask or remove sensitive data.

- Control access tightly.

- Review outputs regularly to catch accidental leaks early.

The less sensitive data the system knows, the less it can expose.
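The masking step can be sketched in a few lines of Python. This is a simplified example under stated assumptions: the two regular expressions and the `mask_sensitive` function are illustrative, cover only email addresses and card-like digit runs, and would miss many real-world formats.

```python
import re

# Illustrative patterns only; production data needs broader, tested rules.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    # 13 to 16 digits, optionally separated by single spaces or hyphens.
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
}


def mask_sensitive(text: str) -> str:
    """Replace each match with a placeholder before the text reaches the model."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


print(mask_sensitive("Contact jane.doe@example.com about card 4111 1111 1111 1111."))
# prints: Contact [EMAIL] about card [CARD].
```

The same function can be run over model outputs as part of the regular review, so that anything that slipped through is masked before it is shown or stored.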

Managing Bias As A Security Risk

Bias in AI is often discussed as a fairness issue, but it is also a security concern. When a model shows consistent bias, it becomes predictable. That predictability can be exploited. Attackers can learn how a model behaves and then guide it toward certain outputs. This can be used to spread misinformation or influence decisions.

To manage this, organizations need to test their models regularly. They should look for patterns in how the AI responds across different scenarios. Human review is also important, especially when decisions have a real-world impact. Reducing bias is not a one-time task. It is an ongoing process that continues throughout the life of the system.

Responding To AI-Driven Phishing And Malware

Generative AI has made phishing and malware more advanced. Messages can now be highly personalized and convincing. Malicious code can also be generated more easily. This creates a pattern of amplification: generative AI allows attackers to scale their efforts quickly and reach more targets.

To respond to this, organizations need to focus on behavior rather than just known threats. Instead of only blocking known attacks, they should look for unusual activity. Training employees is also key. People need to understand that AI-generated messages can look very real. Awareness helps reduce the chances of someone falling for these attacks.

Securing The AI Supply Chain

Cloud Gen AI systems depend on many external components. These include pretrained models, open-source libraries, and third-party APIs. Each of these introduces cloud-gen AI risks. If one part of the supply chain is compromised, it can affect the entire system. This is known as inherited risk.

To manage this, organizations should verify where their models and data come from. They should check the integrity of third-party components and monitor them over time. Security is no longer just about what you build yourself. It is also about everything you choose to use.
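One common way to check the integrity of a downloaded model or library is to compare its SHA-256 digest against a value published by the provider. The sketch below assumes such a published digest exists. The function names `sha256_of` and `verify_artifact` are hypothetical, and only standard-library calls are used.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash the file in chunks so large model files do not fill memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifact(path: Path, expected_sha256: str) -> bool:
    """Return True only if the file matches the digest the provider published."""
    return hmac.compare_digest(sha256_of(path), expected_sha256.lower())
```

A check like this would typically run in the deployment pipeline and refuse to load any artifact whose digest does not match. It confirms that the file is the one the provider published, not that the provider's file is itself safe, so it complements provenance checks and monitoring rather than replacing them.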

Controlling Shadow AI Usage

Shadow AI happens when employees use AI tools without approval. This often comes from a desire to improve productivity by working faster or more efficiently. The risk is that sensitive data may be shared with tools that are not secure or properly monitored. It also creates blind spots, where organizations do not know how AI is being used.

The solution is not to block AI tools completely. Instead, organizations should provide approved tools and clear guidelines. When people have safe options, they are less likely to use risky ones. Education plays a big role here. When users understand the risks, they make better decisions.

Strengthening Access And Authentication

Access control is a basic but critical pattern. In cloud systems, APIs and accounts control who can use the AI and how. Weak passwords, shared accounts, or poor permissions can allow unauthorized access. Once inside, attackers can misuse the system or extract data.

Strong authentication methods like multi-factor authentication help reduce this risk. Access should also be limited based on roles. Not everyone needs the same level of access. Regular monitoring helps detect unusual login activity or misuse early.
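Role-based limits combined with an MFA requirement can be sketched as a deny-by-default permission check. The roles, action names, and the `is_allowed` function below are hypothetical and chosen only for illustration. A real system would take them from its identity provider rather than a hard-coded table.

```python
from enum import Enum


class Role(Enum):
    VIEWER = "viewer"
    ANALYST = "analyst"
    ADMIN = "admin"


# Hypothetical mapping: each role gets only the actions it needs.
PERMISSIONS = {
    Role.VIEWER: {"read_output"},
    Role.ANALYST: {"read_output", "submit_prompt"},
    Role.ADMIN: {"read_output", "submit_prompt", "change_model_config"},
}


def is_allowed(role: Role, action: str, mfa_verified: bool) -> bool:
    """Deny by default: require MFA and an explicit grant for the action."""
    if not mfa_verified:
        return False
    return action in PERMISSIONS.get(role, set())
```

For example, `is_allowed(Role.ANALYST, "change_model_config", mfa_verified=True)` returns `False`, because analysts are not granted that action even after passing MFA.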

Designing With Zero Trust Principles

A growing approach in cloud security is the zero trust model. This means nothing is automatically trusted, even inside the system. Every request is checked. Every user is verified. Every action is monitored.

This approach works well for generative AI because the systems are dynamic and constantly changing. Instead of assuming safety, zero trust assumes risk and manages it continuously. It creates a stronger and more flexible security foundation.

Using Monitoring As A Core Defense

Monitoring is often overlooked, but it is one of the most powerful tools in managing cloud-gen AI risks. Every interaction with the system creates data. That data can reveal patterns. Unusual activity, strange outputs, or sudden spikes in usage can all signal potential misuse.

Many modern systems use AI to monitor AI. They analyze logs and detect patterns that humans might miss. This creates a feedback loop where the system learns and improves over time. Good monitoring turns small signals into early warnings.
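A sudden spike in usage is one of the simpler signals to detect. The sketch below flags any interval whose request count sits far above the recent average, using a rolling z-score. The window size, the threshold, and the `find_spikes` function are assumptions for illustration, and production monitoring would likely use more robust methods.

```python
from statistics import mean, stdev


def find_spikes(counts, window=5, threshold=3.0, min_sigma=1.0):
    """Return indexes whose count is far above the preceding window's mean."""
    spikes = []
    for i in range(window, len(counts)):
        baseline = counts[i - window:i]
        mu = mean(baseline)
        # Floor the deviation so a perfectly flat baseline cannot divide by zero.
        sigma = max(stdev(baseline), min_sigma)
        if (counts[i] - mu) / sigma > threshold:
            spikes.append(i)
    return spikes


requests_per_minute = [100, 102, 98, 101, 99, 100, 450, 103]
print(find_spikes(requests_per_minute))  # prints: [6]
```

Here only the jump to 450 requests is flagged. The return to 103 in the next minute is not, because the check only looks for values above the baseline.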

Balancing Risk And Functionality

Not all risks can be removed completely. Sometimes, reducing risk means limiting what the system can do. For example, restricting access to certain data may reduce risk but also reduce functionality. Organizations need to find the right balance.

The best approach is to align AI capabilities with real business needs. This avoids unnecessary exposure while still delivering value. It is about being intentional with what the system is allowed to do.

Adapting To Regulations And Compliance

Regulations around AI are still evolving. Different regions have different rules, and these rules can change over time. This creates a pattern of constant adjustment. Systems need to be flexible enough to adapt without major redesign.

Organizations should build governance frameworks that can handle change. This includes tracking data usage, managing permissions, and documenting how AI systems are used. Being prepared makes it easier to stay compliant as regulations evolve.

Final Thoughts

Generative AI in the cloud is not just a tool; it is a system shaped by data, users, and constant interaction. Risks will always exist, but they are not random. They follow patterns. When organizations understand these patterns, they can prevent problems instead of reacting to them. They can build systems that are not only powerful but also safer and more reliable.

From prompt manipulation to data leakage, and even the quieter risk of misinformation, each challenge shows where better control is needed.

Responsible AI use needs to be the baseline for interacting with this technology. Handling misuse is not about chasing every new threat. It is about understanding how those threats form in the first place. Once that becomes clear, managing them becomes much easier and far less stressful.
