If you have live streaming data that you want to store, transform for insights, or report on with Power BI, but are unsure which Azure service should process it, Azure Stream Analytics is the one you are looking for.

Azure Stream Analytics is a good fit when you need a fully managed service with no infrastructure to set up, and you want to pay only for what you use.

In this blog, we are going to cover:

- Overview of Azure Stream Analytics

- Benefits of Azure Stream Analytics

- How does Azure Stream Analytics Work?

- Use Cases of Azure Stream Analytics

- Steps To Create A Stream Analytics Job Using Azure Portal

Overview

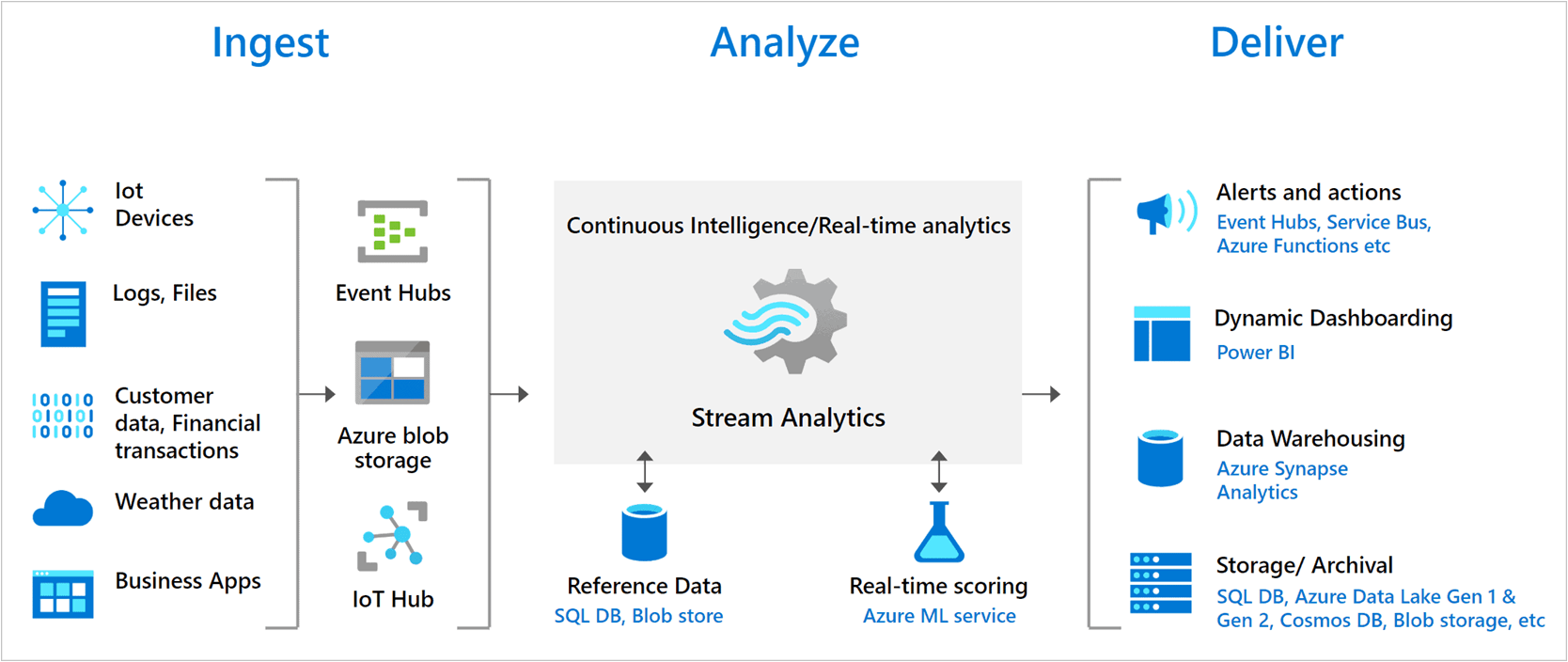

Azure Stream Analytics is a real-time and complex event-processing engine designed for analyzing and processing high volumes of fast streaming data from multiple sources simultaneously.

Patterns and relationships can be identified in information extracted from multiple input sources, including devices, sensors, applications, and more. These patterns can be used to trigger actions and initiate workflows such as creating alerts, feeding information to a reporting tool, or storing transformed data for later use. Azure Stream Analytics is also available on the Azure IoT Edge runtime, which makes it possible to process data on IoT devices.

Benefits Of Azure Stream Analytics

- It is easy to start and create an end-to-end pipeline.

- It is a fully managed offering on Azure.

- It can run in the cloud, for large-scale analytics, or run on IoT Edge or Azure Stack for ultra-low latency analytics.

- It is available across multiple regions worldwide.

- It is designed to run mission-critical workloads and supports reliability, security, and compliance requirements.

- It encrypts all incoming and outgoing communications.

- It can process millions of events every second and deliver results with ultra-low latencies.

How Does Azure Stream Analytics Work?

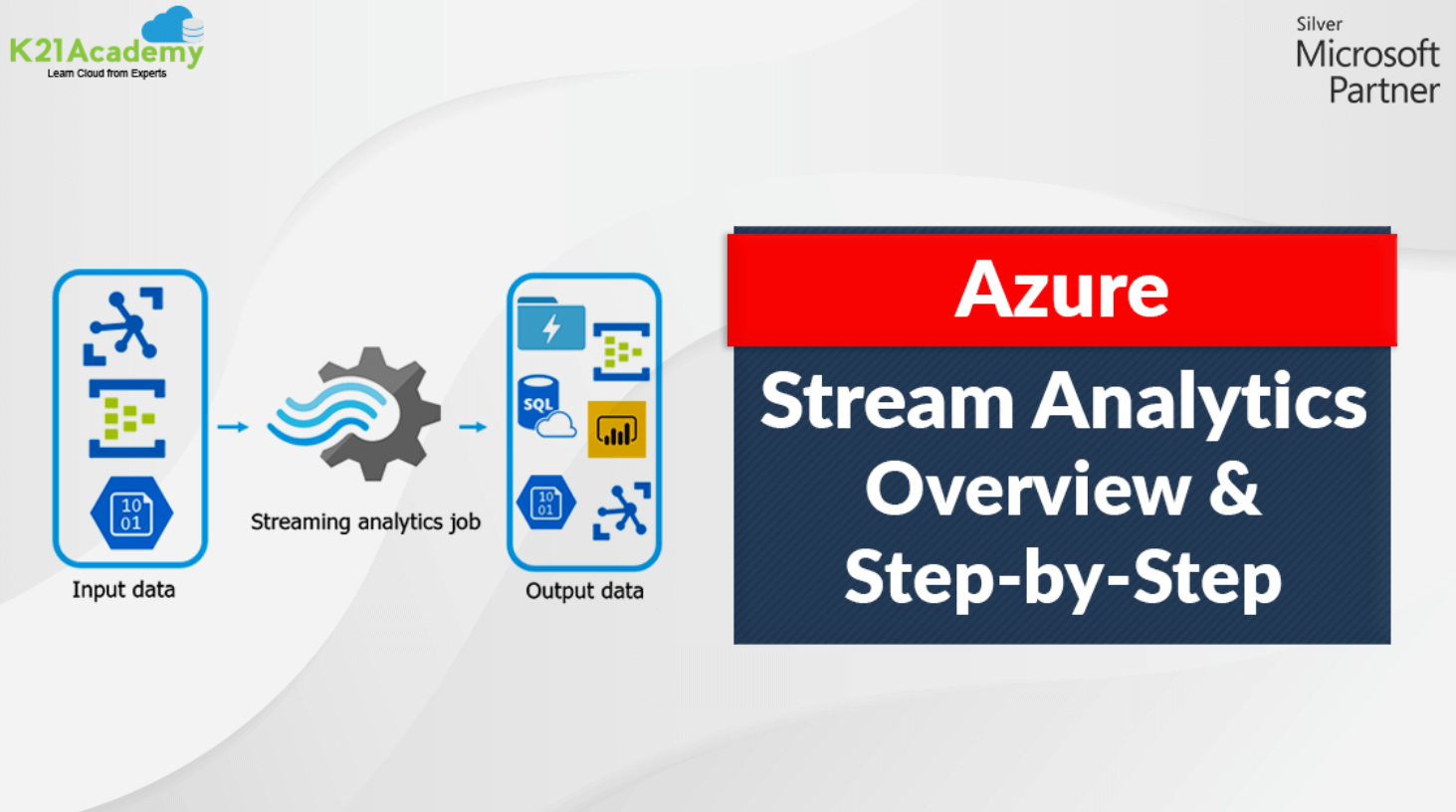

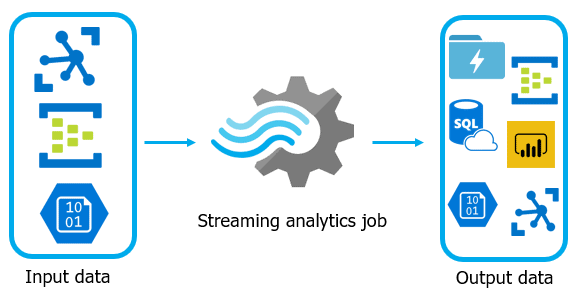

- An Azure Stream Analytics job consists of an input, a query, and an output.

- It ingests data from Azure Event Hubs, Azure IoT Hub, or Azure Blob Storage.

- The query is written in a SQL-based query language and can filter, sort, aggregate, and join streaming data.

Each job has one or several outputs for the transformed data, and you can control what happens in response to the information analyzed. For example:

- Send data to Azure Functions, Service Bus Topics, or other services to trigger communications or custom workflows downstream.

- Store data in Azure storage services to train the machine learning models based on historical data or perform batch analytics.

This image represents how data is sent to Stream Analytics, analyzed, and sent for other actions like storage or presentation.
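The input, query, and output structure described above can be pictured with a small Python sketch. This is only a toy model, not the Stream Analytics API: the `Job` class, the `hot_readings` query, and the sensor events are hypothetical names invented for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass
class Job:
    """Toy stand-in for a job: one input, one query, one or more outputs."""
    source: Iterable[dict]
    query: Callable[[Iterable[dict]], Iterable[dict]]
    sinks: List[Callable[[dict], None]]

    def run(self) -> None:
        # Every row produced by the query is delivered to every output.
        for row in self.query(self.source):
            for sink in self.sinks:
                sink(row)


def hot_readings(events):
    """Query step: keep readings above 30 and project two fields."""
    for event in events:
        if event["temperature"] > 30:
            yield {"device": event["device"], "temperature": event["temperature"]}


# Hypothetical input events (in the real service these would arrive from
# Event Hubs, IoT Hub, or Blob Storage).
events = [
    {"device": "sensor-a", "temperature": 21},
    {"device": "sensor-b", "temperature": 35},
    {"device": "sensor-a", "temperature": 42},
]

stored, alerts = [], []  # two outputs: a "storage" sink and an "alert" sink
job = Job(
    source=events,
    query=hot_readings,
    sinks=[stored.append, lambda row: alerts.append(f"ALERT {row['device']}")],
)
job.run()
print(stored)
print(alerts)
```

The same transformed rows reach both sinks, which mirrors how one job can both store data and trigger a downstream workflow.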

Use Cases Of Azure Stream Analytics

- Store streaming data and make it available to other cloud services for further analysis, reporting, etc.

- Transform and analyze data in real-time

- Real-time dashboarding with Power BI (monitoring purposes)

- Send alerts

- Make decisions in real-time

- Machine learning scenarios such as risk analysis, fraud detection, and trend prediction, although it is of limited use for more advanced analytics

- Geospatial analytics for fleet management and driverless vehicles

Steps To Create A Stream Analytics Job Using Azure Portal

We have covered the basics of Azure Stream Analytics, its benefits, and its use cases. Now let us look at the steps to create a Stream Analytics job using the Azure portal.

Prerequisites: Create an Azure Free Trial Account. You can also check our blog on how to create an Azure Free Trial account.

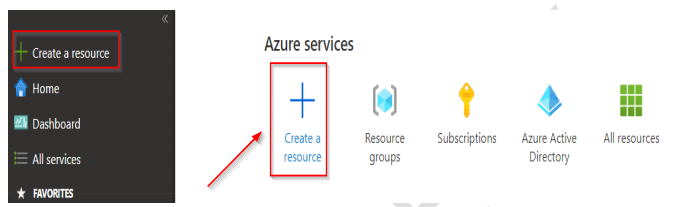

Step 1: Sign in to the Azure Portal.

Step 2: Click on Create a Resource option to add a new resource.

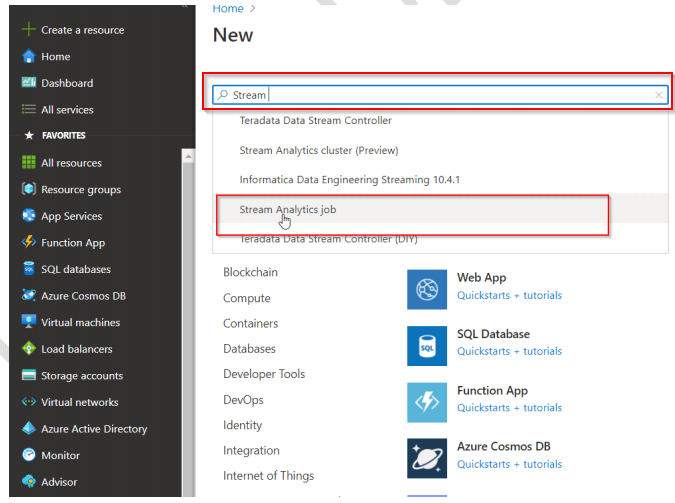

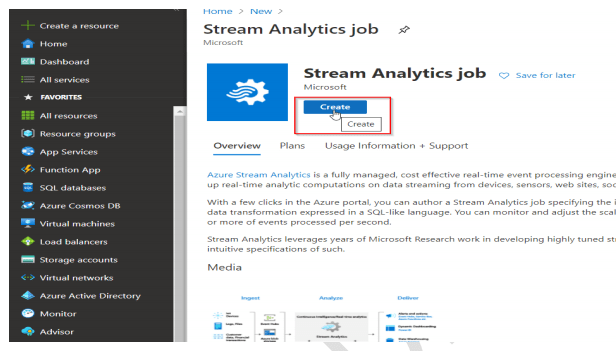

Step 3: In the search bar type Stream or Stream Analytics Job and click on Create.

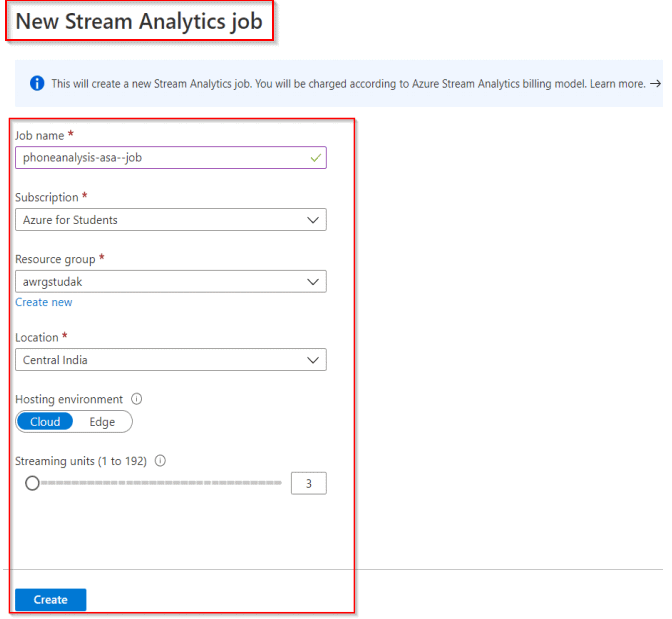

Step 4: In the New Stream Analytics job screen, fill out the following details and then click on Create. If you do not have an existing resource group, you can create a new one.

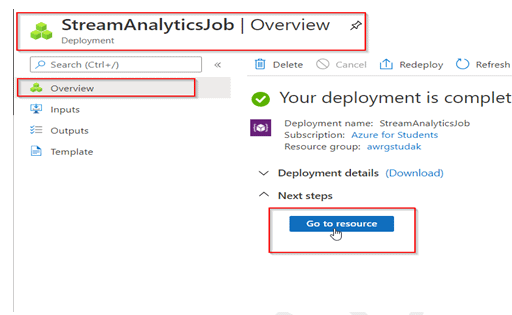

Step 5: Once the deployment is complete, click on Go to resource option.

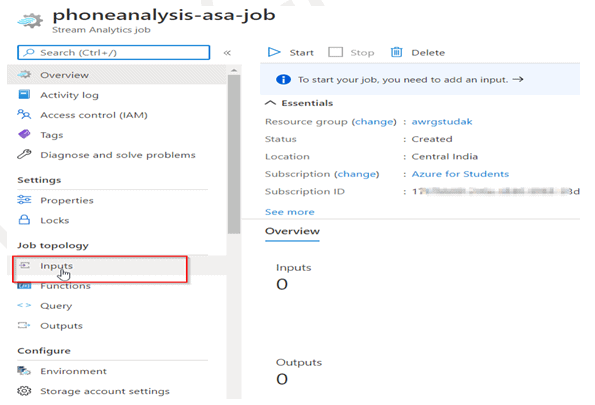

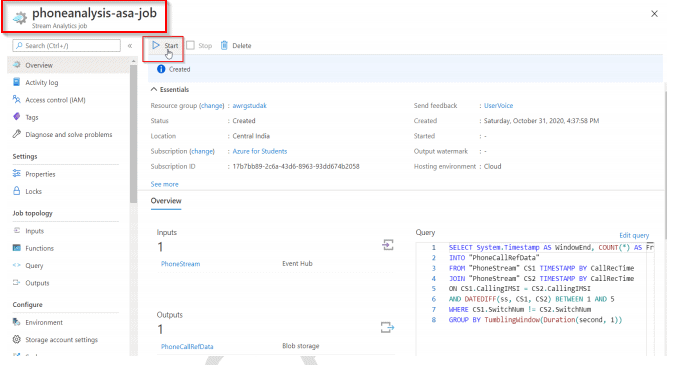

Step 6: In your phoneanalysis-asa-job Stream Analytics job window, in the left-hand side menu, under Job topology, click Inputs to specify stream analytics job inputs.

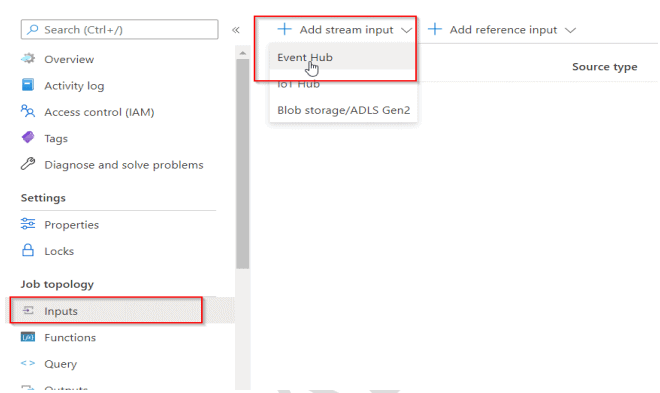

Step 7: In the Inputs screen, click + Add stream input, and then click Event Hubs.

Event Hubs are a big data streaming platform and event ingestion service that can receive and process millions of events per second.
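To make the input concrete, here is a rough Python sketch of the kind of JSON events a phone-call stream might carry. The field names `CallRecTime`, `SwitchNum`, and `CallingIMSI` are taken from the query used later in this walkthrough; the helper `make_call_records`, the switch names, and the IMSI values are made up for illustration. Actually sending these payloads to an event hub would need an Event Hubs client, which is not shown here.

```python
import json
import random
from datetime import datetime, timedelta, timezone


def make_call_records(count, seed=0):
    """Build `count` synthetic call records, one per second (hypothetical data)."""
    rng = random.Random(seed)  # seeded so repeated runs give the same records
    start = datetime(2024, 1, 1, tzinfo=timezone.utc)
    switches = ["US", "UK", "Germany", "Australia"]  # assumed switch names
    records = []
    for i in range(count):
        records.append({
            "CallRecTime": (start + timedelta(seconds=i)).isoformat(),
            "SwitchNum": rng.choice(switches),
            # A small pool of IMSIs so the same caller shows up repeatedly.
            "CallingIMSI": str(rng.randint(466920000000000, 466920000000009)),
        })
    return records


# Each event would travel as one JSON document.
payloads = [json.dumps(record) for record in make_call_records(5)]
for payload in payloads:
    print(payload)
```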

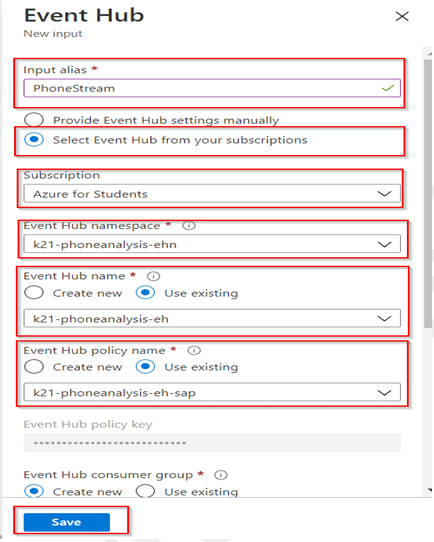

Step 8: In the Event Hub screen, type in the following values and click the Save button. Once saved, the PhoneStream input will appear in the list of inputs.

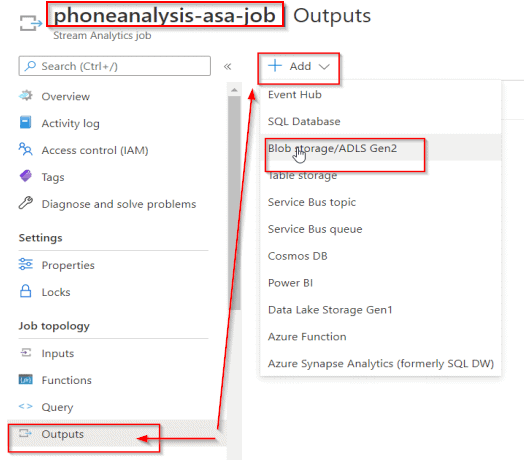

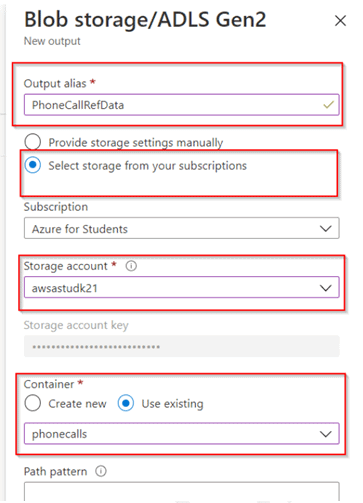

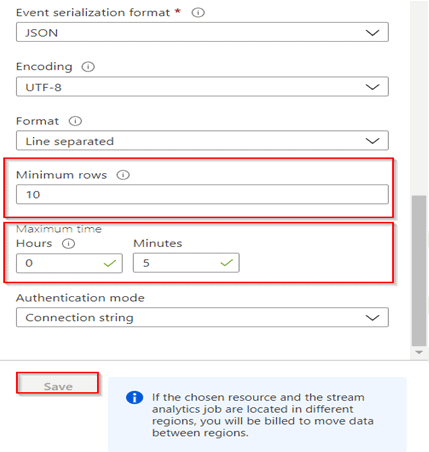

Step 9: In your phoneanalysis-asa-job Stream Analytics job window, in the left-hand side menu, under Job topology, click Outputs to specify the job outputs, click + Add, and then click Blob Storage.

Step 10: In the Blob storage window, type or select the following values in the pane, set Min row = 10 and Max time = 5, and finally click Save. You can then close the output screen to return to the Resource Group page.
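One way to think about the Min row and Max time settings is as a batching rule: rows are collected until there are enough of them, or until the batch has waited long enough, whichever comes first. The Python sketch below is only a mental model under that assumption, not the service's implementation. The `BatchBuffer` class is hypothetical, and time is measured in abstract units because the walkthrough does not state the unit.

```python
class BatchBuffer:
    """Toy batcher: write a batch at min_rows rows or after max_time, whichever is first."""

    def __init__(self, min_rows, max_time):
        self.min_rows = min_rows
        self.max_time = max_time
        self.rows = []
        self.opened_at = None  # time the current batch received its first row
        self.flushed = []      # batches that have been "written" to the output

    def add(self, row, now):
        if not self.rows:
            self.opened_at = now
        self.rows.append(row)
        waited_long_enough = now - self.opened_at >= self.max_time
        if len(self.rows) >= self.min_rows or waited_long_enough:
            self.flushed.append(self.rows)
            self.rows = []
            self.opened_at = None


buffer = BatchBuffer(min_rows=10, max_time=5)

# Ten rows arrive at once: the row threshold triggers the first write.
for i in range(10):
    buffer.add(i, now=0)

# Three slow rows: the time threshold triggers the second write.
buffer.add("a", now=1)
buffer.add("b", now=3)
buffer.add("c", now=6)

print([len(batch) for batch in buffer.flushed])
```

In this toy model the second batch is written with only three rows because it has been open for five time units.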

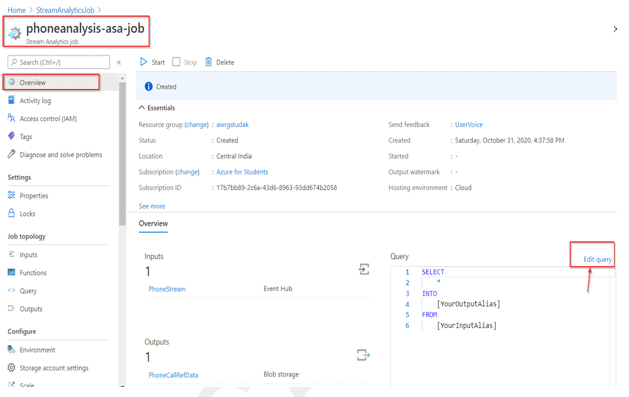

Step 11: In your phoneanalysis-asa-job window, in the Query screen in the middle of the window, click on Edit query.

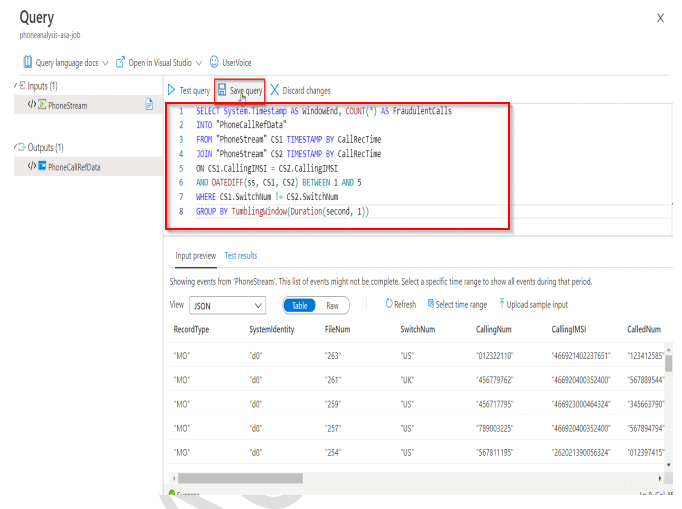

Step 12: Replace the default query with the following query and save it.

SELECT System.Timestamp AS WindowEnd, COUNT(*) AS FraudulentCalls

INTO "PhoneCallRefData"

FROM "PhoneStream" CS1 TIMESTAMP BY CallRecTime

JOIN "PhoneStream" CS2 TIMESTAMP BY CallRecTime

ON CS1.CallingIMSI = CS2.CallingIMSI

AND DATEDIFF(ss, CS1, CS2) BETWEEN 1 AND 5

WHERE CS1.SwitchNum != CS2.SwitchNum

GROUP BY TumblingWindow(Duration(second, 1))

Close the Query window to return to the Stream Analytics job page.
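To see what the query above computes, the following Python sketch replays its logic over a handful of in-memory records. It is a simplified illustration rather than how the engine works. `CallRecTime` is assumed to be whole seconds, a matched pair is assumed to carry the timestamp of the later call, and an event exactly on a window boundary is assumed to belong to the window that ends there. The function name and the sample calls are hypothetical.

```python
import math
from collections import Counter


def fraudulent_call_counts(calls):
    """Count suspicious call pairs per one-second window, keyed by window end."""
    counts = Counter()
    for first in calls:        # plays the role of CS1
        for second in calls:   # plays the role of CS2
            same_caller = first["CallingIMSI"] == second["CallingIMSI"]
            gap = second["CallRecTime"] - first["CallRecTime"]  # DATEDIFF(ss, CS1, CS2)
            different_switch = first["SwitchNum"] != second["SwitchNum"]
            if same_caller and 1 <= gap <= 5 and different_switch:
                # One-second tumbling window, labelled by its end time.
                window_end = math.ceil(second["CallRecTime"])
                counts[window_end] += 1
    return dict(sorted(counts.items()))


calls = [
    {"CallRecTime": 0, "CallingIMSI": "A", "SwitchNum": "US"},
    {"CallRecTime": 2, "CallingIMSI": "A", "SwitchNum": "UK"},   # 2 s after the US call
    {"CallRecTime": 3, "CallingIMSI": "B", "SwitchNum": "US"},
    {"CallRecTime": 4, "CallingIMSI": "A", "SwitchNum": "US"},   # 2 s after the UK call
    {"CallRecTime": 20, "CallingIMSI": "B", "SwitchNum": "UK"},  # too late to pair with t=3
]

print(fraudulent_call_counts(calls))  # {2: 1, 4: 1}
```

Caller A appears on two different switches within five seconds twice, so two windows each report one suspicious pair, while caller B's calls are too far apart to match.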

Step 13: In your phoneanalysis-asa-job window, click on Start to start the Stream Analytics job.

Step 14: In the Start-Job dialog box that opens, click on Now, and then click Start.

You can validate the streaming data by going to the resource group that was created in the initial steps and selecting the storage container created.

So these are the steps that can be followed to create an Azure Stream Analytics job using the Azure portal, specify job input and output, and define a stream analytics query.
