In this blog post, we cover sample Microsoft Azure Data Engineer Associate DP-203 exam questions to give you an idea of the types of questions generally asked in the DP-203 associate-level exam.

Azure data engineers help stakeholders understand data through research and use a variety of tools and techniques to create and maintain a secure and compliant data processing pipeline. These professionals use a variety of Azure data services and languages to store and build clean, sophisticated datasets for analytics.

Azure data engineers also help ensure that data pipelines and data stores are high-performing, efficient, organized, and reliable, given a variety of business needs and constraints. They resolve unexpected problems quickly and minimize data loss. An Azure data engineer also designs, implements, monitors, and optimizes data platforms to meet the requirements of the data pipeline.

If you are preparing for the Microsoft Azure Data Engineer Associate Certification [DP-203] exam, check your readiness by attempting these questions.

Azure Data Engineer Associate DP-203 Exam Questions

Q1. You are working in a cloud company and you have been assigned the responsibility of building an enterprise data lake on Azure and accomplishing big data analytics. Which of the following Azure services would you use in this scenario?

A. Azure Files

B. Azure Blobs

C. Azure Disks

D. Azure Queues

E. Azure Tables

Explanation:

Correct Answer: B

Azure Blobs lets you store and access unstructured data at a massive scale in block blobs. Azure Blobs is recommended:

- When you want your applications to support streaming and random-access scenarios.

- When you want to access application data from anywhere.

- When you want to develop an enterprise data lake on Azure and carry out big data analytics.

- Option A is incorrect. Azure Files offers fully managed cloud file shares that can be accessed from anywhere using the industry-standard Server Message Block (SMB) protocol. Azure Files is not the right choice for the given scenario.

- Option B is correct. Azure Blobs is the best choice for the given scenario.

- Option C is incorrect. Azure Disks lets you persistently store and access data from an attached virtual hard disk. In the given scenario, using Azure Disks is not the right choice.

- Option D is incorrect. Azure Queues is suited to decoupling application components and communicating among them through asynchronous messaging, not to building a data lake.

- Option E is incorrect. Azure Tables is meant for storing structured NoSQL data with a flexible schema. In the given scenario, using Azure Tables is not the right choice.

Read: Microsoft Azure Certification Path

Q2. You are implementing an Azure Data Lake Storage Gen2 storage account. You need to ensure that data remains accessible for both read and write operations even if an entire data center (zonal or non-zonal) becomes unavailable. Which kind of replication would you use for the storage account? (Choose the solution with minimum cost)

A. Locally-redundant storage (LRS)

B. Zone-redundant storage (ZRS)

C. Geo-redundant storage (GRS)

D. Geo-zone-redundant storage (GZRS)

Explanation:

Correct Answer: B

Zone-redundant storage replicates the Azure Storage data in a synchronous manner across 3 Azure availability zones in the primary region. With Zone-redundant storage, the data remains accessible for write and read operations even if a zone is not available.

- Option A is incorrect. LRS ensures availability only if a node within a data center becomes unavailable.

- Option B is correct. Zone-redundant storage replicates the Azure Storage data synchronously across 3 Azure availability zones within the primary region.

- Option C is incorrect. GRS is not a cost-effective method. ZRS is a more suitable option in the given scenario.

- Option D is incorrect. GZRS also ensures availability, but it is not the lowest-cost redundancy option. ZRS achieves the goal in the given scenario.

Q3. While working on one of your company’s projects, your teammate wants to check the input options for an Azure Stream Analytics job that needs high throughput and low latency. He is unsure which input to use and asks you for help. Which of the following Azure products would you suggest to him?

A. Azure Table Storage

B. Azure Blob Storage

C. Azure Queue Storage

D. Azure Event Hubs

E. Azure IoT Hub

Explanation:

Correct Answer: D

Azure Event Hubs is a highly scalable event ingestion service that can receive and process millions of events per second. You can transform and store the data sent to an event hub with the help of storage/batching adapters or a real-time analytics provider. Event Hubs is known for consuming data streams from applications with high throughput and low latency.

- Option A is incorrect. Azure table storage is a NoSQL store that is used for schemaless storage of structured data.

- Option B is incorrect. Azure Blob Storage should be used when you desire your application to support streaming and random-access scenarios.

- Option C is incorrect. Azure Queue Storage is used to allow asynchronous message queueing among application components.

- Option D is correct. In the given scenario, you should suggest Azure Event Hubs to your teammate, as Event Hubs is the primary choice for consuming data streams from applications with high throughput and low latency.

- Option E is incorrect. Azure IoT Hub offers a cloud-hosted solution back end for connecting virtually any device.

Q4. You work with an Azure Synapse Analytics dedicated SQL pool that has a table named Pilots. You want to restrict access so that users in the ‘IndianAnalyst’ role can see only the pilots from India. Which of the following would you add to the solution?

A. Table partitions

B. Encryption

C. Column-Level security

D. Row-level security

E. Data Masking

Explanation:

Correct Answer: D

Row-level security gives fine-grained control over which rows in a database table a user can access, so you can restrict who sees which data.

- Option A is incorrect. Table partitions are generally used to group similar data, not to restrict access.

- Option B is incorrect. Encryption protects data at rest or in transit. It does not control which rows a user can see.

- Option C is incorrect. Column-level security is used to restrict data access at the column level. In the given scenario, we need to restrict access at the row level.

- Option D is correct. In this scenario, we need to restrict access on a row basis, i.e., only to the pilots from India. Therefore, row-level security is the right solution.

- Option E is incorrect. Dynamic data masking limits sensitive data exposure by masking it for unauthorized users. It does not hide entire rows.
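To make the idea of a row filter concrete, here is a minimal plain-Python sketch of what row-level security does conceptually. It is not T-SQL and not how a dedicated SQL pool implements the feature (there you would bind a filter predicate function to the table through a security policy). The sample rows, the `country` field, and the role-to-country mapping are all made up for illustration.

```python
# Hypothetical sample rows standing in for the Pilots table.
PILOTS = [
    {"name": "Asha", "country": "India"},
    {"name": "Liam", "country": "Ireland"},
    {"name": "Ravi", "country": "India"},
]

# Hypothetical mapping from a role to the only country its members may see.
ROLE_COUNTRY = {"IndianAnalyst": "India"}


def filter_predicate(row, role):
    """Return True when the given role is allowed to read the row."""
    allowed = ROLE_COUNTRY.get(role)
    # Assumption for this sketch: roles without an entry are unrestricted.
    return allowed is None or row["country"] == allowed


def select_pilots(role):
    """Return the rows of PILOTS that pass the filter predicate for the role."""
    return [row for row in PILOTS if filter_predicate(row, role)]


indian_view = select_pilots("IndianAnalyst")
print([row["name"] for row in indian_view])
```

The point of the sketch is that the query itself does not change. The predicate is applied for every caller, and the role decides which rows come back.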

Q5. You are considering deploying an Azure Databricks interactive cluster. You need to ensure that the cluster configuration is kept, even if the cluster is inactive for more than 30 days.

How would you achieve this goal?

A. Terminate the cluster manually

B. Clone the cluster after termination

C. Pin the cluster

D. Start the cluster manually

Explanation:

Correct Answer: C

30 days after a cluster is terminated, it is permanently deleted. To keep an interactive cluster configuration even after a cluster has been terminated for more than 30 days, an administrator can pin the cluster. Up to 20 clusters can be pinned.
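Pinning is usually done from the cluster list in the workspace UI, but it can also be scripted. The sketch below only builds the HTTP request and does not send it. The workspace URL, token, and cluster ID are placeholders, and the `/api/2.0/clusters/pin` path is the Clusters REST API endpoint as I understand it, so check it against the current Databricks documentation before relying on it.

```python
import json
import urllib.request


def build_pin_request(workspace_url, token, cluster_id):
    """Build (but do not send) a POST request that pins a cluster."""
    body = json.dumps({"cluster_id": cluster_id}).encode("utf-8")
    return urllib.request.Request(
        url=f"{workspace_url.rstrip('/')}/api/2.0/clusters/pin",
        data=body,
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder values for illustration only.
request = build_pin_request(
    "https://adb-0000000000000000.0.azuredatabricks.net",
    "<personal-access-token>",
    "0000-000000-example",
)
print(request.get_method(), request.full_url)
# Sending it would be: urllib.request.urlopen(request)
# The caller needs administrator rights on the workspace.
```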

Q6. You are designing a big data analytics solution that will use Azure Stream Analytics to analyze real-time Twitter data with the hashtag #cloud. Which windowing function would you recommend for your query if, every 5 seconds, you need to output the count of tweets over the last 10 seconds?

A. Session window

B. Sliding window

C. Tumbling window

D. Hopping window

Explanation:

Correct Answer: D

Hopping window functions hop forward in time by a fixed period. You can think of them as Tumbling windows that can overlap, so an event can belong to more than one Hopping window result set. In this scenario, the window size is 10 seconds and the hop size is 5 seconds.
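For the scenario in Q6, a Stream Analytics query would group by `HoppingWindow(second, 10, 5)`. The following is a minimal plain-Python sketch of the same counting logic, not Stream Analytics code. The function name and the sample timestamps are made up, and the sketch assumes each window excludes its start time and includes its end time.

```python
def hopping_window_counts(timestamps, window_size=10, hop_size=5):
    """Return (window_end, count) pairs for a hopping window.

    Each window covers the interval (window_end - window_size, window_end].
    """
    if not timestamps:
        return []
    last = max(timestamps)
    results = []
    window_end = hop_size
    # Keep emitting windows while the latest event can still fall inside one.
    while window_end - window_size < last:
        start = window_end - window_size
        count = sum(1 for t in timestamps if start < t <= window_end)
        results.append((window_end, count))
        window_end += hop_size
    return results


# Hypothetical tweet arrival times, in seconds.
tweet_times = [1, 4, 6, 9, 12, 14]
counts = hopping_window_counts(tweet_times)
for end, count in counts:
    print(f"window ending at {end:>2}s: {count} tweets")
```

Because the hop size is half the window size, every tweet is counted in exactly two consecutive windows, which is the overlap that distinguishes a hopping window from a tumbling one.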

Q7. You have created a DataFrame. Before issuing SQL queries, you decide to save your DataFrame as a temporary view. Which of the following DataFrame methods will help you create the temporary view?

A. createTempView

B. createOrReplaceTempView

C. ReplaceTempView

D. createTempViewForDataFrame

E. CreateorReplaceDFTempView

Explanation:

Correct Answer: B

Before you can issue SQL queries against it, a DataFrame needs to be saved as a table or temporary view. The createOrReplaceTempView method is used to create the temporary view.

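- Option A is incorrect. createTempView does exist as a DataFrame method, but it raises an error if a view with the same name already exists, so createOrReplaceTempView is the safer choice and the answer expected here.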

- Option B is correct. createOrReplaceTempView function is used to create the temporary view.

- Option C is incorrect. There is no such method as ReplaceTempView.

- Option D is incorrect. There is no such method as createTempViewForDataFrame.

- Option E is incorrect. There is no DataFrame method like CreateorReplaceDFTempView.
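In PySpark, the pattern is `df.createOrReplaceTempView("pilots_view")` followed by `spark.sql("SELECT ... FROM pilots_view")`. Since a Spark session is not assumed here, the sketch below uses Python's built-in `sqlite3` module as a stand-in to show the same "register a view, then query it with SQL" flow and the create-or-replace behavior. The table, view name, and helper function are hypothetical.

```python
import sqlite3


def create_or_replace_temp_view(conn, name, select_sql):
    """Mimic create-or-replace: drop any existing view, then create it."""
    conn.execute(f"DROP VIEW IF EXISTS {name}")
    conn.execute(f"CREATE TEMP VIEW {name} AS {select_sql}")


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE pilots (name TEXT, country TEXT)")
conn.executemany(
    "INSERT INTO pilots VALUES (?, ?)",
    [("Asha", "India"), ("Liam", "Ireland"), ("Ravi", "India")],
)

create_or_replace_temp_view(conn, "pilots_view", "SELECT * FROM pilots")
# Calling it again with a new definition replaces the view instead of failing.
create_or_replace_temp_view(
    conn, "pilots_view", "SELECT name FROM pilots WHERE country = 'India'"
)

rows = conn.execute("SELECT name FROM pilots_view ORDER BY name").fetchall()
print(rows)
```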

Q8. While running a copy activity in a Data Factory pipeline, you get a “DelimitedTextMoreColumnsThanDefined” error. What might be the possible cause behind this error? (Choose the best possible option)

A. You have reached the capacity limit of integration runtime.

B. The folder you are copying has files with different schemas.

C. Browser cache issue.

D. You have invoked REST API in Web activity.

Explanation:

Correct Answer: B

If the folder you are copying contains files with different schemas, such as different delimiters, quote character settings, or a variable number of columns, or if there is some other data issue, the Data Factory pipeline may return the “DelimitedTextMoreColumnsThanDefined” error.

- Option A is incorrect. Reaching the capacity limit of the integration runtime usually means that a large amount of data flow is running through the same integration runtime at the same time. That can cause the pipeline run to fail, but it is not the cause of this error.

- Option B is correct. The “DelimitedTextMoreColumnsThanDefined” error is generally returned when the folder you are copying has files with different schemas.

- Option C is incorrect. A browser cache issue can lead to a situation where you cancel a pipeline run but pipeline monitoring still shows the run as in progress.

- Option D is incorrect. The option does not state the right cause for the given issue.
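One way to track down the offending file before rerunning the copy is to check each delimited file for rows that have more columns than its header. The following is a minimal sketch of such a check using only the standard library. The function name and the sample data are made up, and it assumes the first row of each file is the header.

```python
import csv
import io


def rows_with_extra_columns(text, delimiter=","):
    """Return (row_number, column_count) for rows wider than the header.

    Row numbers start at 1 for the header. They match file line numbers
    only when no quoted field contains a newline.
    """
    reader = csv.reader(io.StringIO(text), delimiter=delimiter)
    header = next(reader, None)
    if header is None:
        return []
    expected = len(header)
    return [
        (row_no, len(row))
        for row_no, row in enumerate(reader, start=2)
        if len(row) > expected
    ]


# Hypothetical file content: the third line has one column too many.
sample = "id,name,country\n1,Asha,India\n2,Liam,Ireland,extra\n3,Ravi,India\n"
problems = rows_with_extra_columns(sample)
print(problems)
```

Running the same check over every file in the source folder would show which files do not match the schema the copy activity expects.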

Download the Complete Microsoft Azure Data Engineer Associate DP-203 Exam Questions

Now that you have tested your knowledge with these DP-203 exam questions, I hope you have a clearer picture of where you stand in your DP-203 exam preparation.

Note: K21Academy also offers a complete DP-203 Exam Questions Prep Guide where learners get to practice questions to test their Azure DP-203 exam preparation before the actual exam.

To download the complete DP-203 Azure Data Engineer Associate Exam Questions guide click here.

Related/References

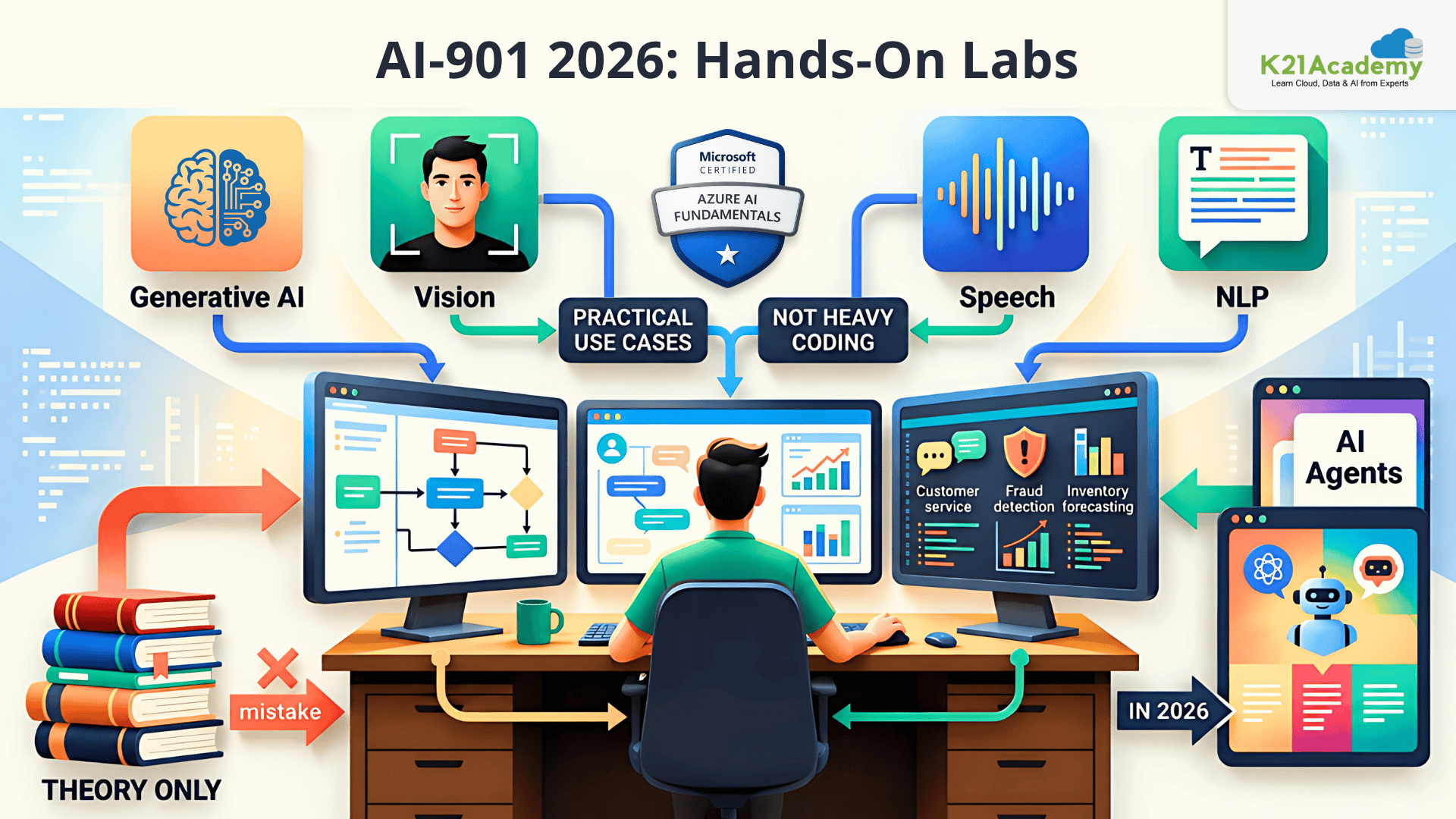

- Microsoft Certified Azure Data Engineer Associate | DP 203 | Step By Step Activity Guides (Hands-On Labs)

- Exam DP-203: Data Engineering on Microsoft Azure

- Azure Data Engineer Interview Questions 2023

- Azure Data Lake For Beginners: All you Need To Know

- Batch Processing Vs Stream Processing: All you Need To Know

- Introduction to Big Data and Big Data Architectures

- Azure Data Factory For Beginners

Next Task For You

Our Azure Data Engineer training program covers 27 hands-on labs. If you want to begin your journey towards becoming a Microsoft Certified: Azure Data Engineer Associate, check out our FREE CLASS.

Disclaimer: These questions are best guesses for the kind of questions to expect in the examination.