In 2026, prompt engineering resembles software development in that prompts will be viewed as code that needs to be monitored, tested, versioned, and optimised. Older 2024/2025 tool lists are out of date as teams depend on contemporary rapid engineering tools to guarantee performance and dependability as AI systems grow.

In 2026, the top prompt engineering tools are Microsoft Azure AI Foundry for enterprise use, Promptfoo for testing, Helicone for monitoring, and LangSmith for development.

This guide, which was updated in April 2026, eliminates out-of-date articles like AI Dungeon and covers utilities like LangSmith, Promptfoo, Helicone, and Langfuse.

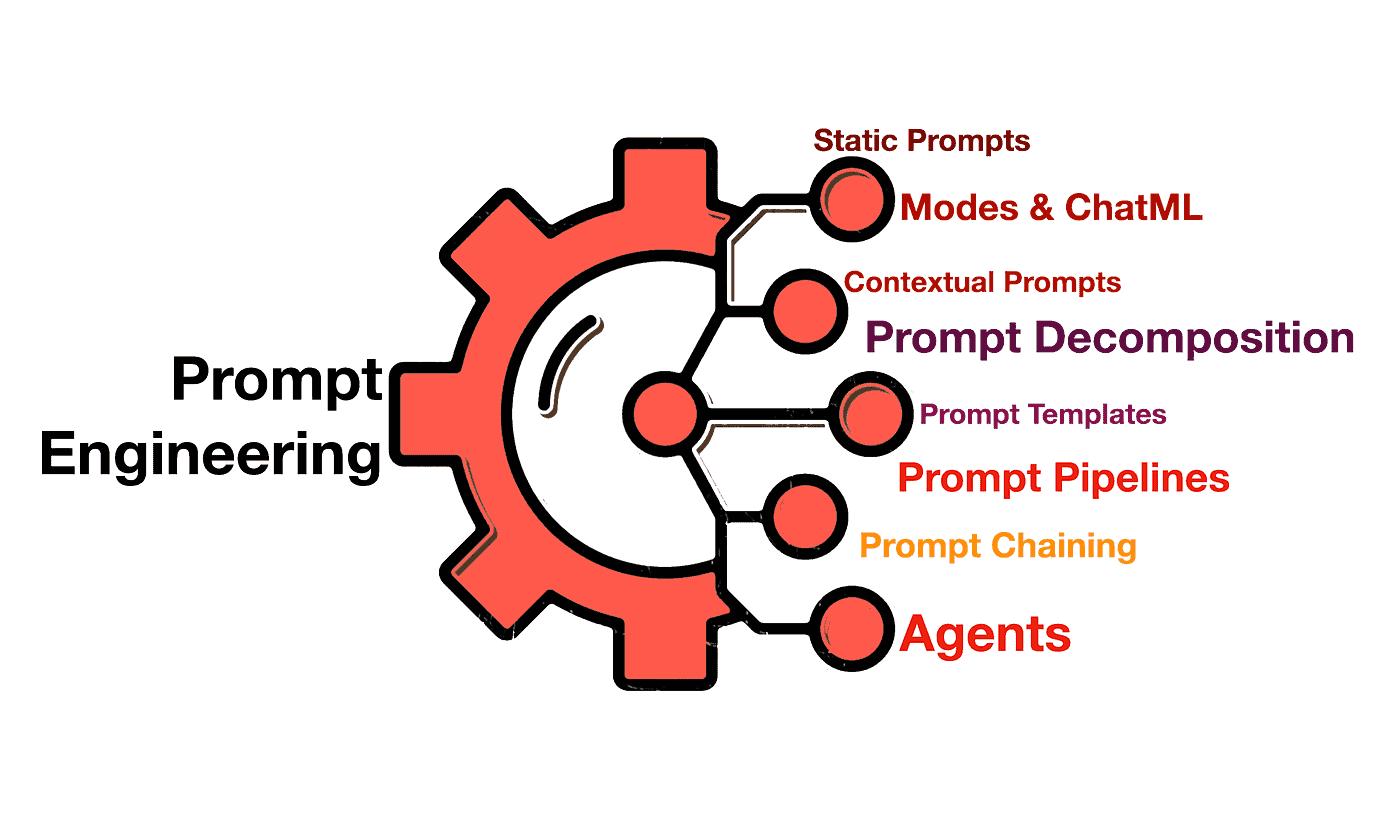

Overview of Prompt Engineering and Its Importance for AI Development

Prompt engineering is the practice of designing and refining inputs given to large language models (LLMs) to produce accurate, relevant, and context-aware outputs. But in 2026, managing prompts at scale with prompt engineering and LLM tools is more important than simply creating better prompts. These software programs assist teams in creating, testing, revising, monitoring, and refining prompts for use in practical applications.

Teams have progressed from basic chat-based testing to full production prompt operations (PromptOps). Similar to traditional software systems, this involves managing prompt regression, doing A/B tests to assess prompt performance, and keeping an eye on usage costs and latency.

By adopting the right prompt engineering tools, teams can move faster, reduce errors, and build more reliable AI systems that consistently deliver high-quality results.

How We Evaluated These Tools

We developed a rigorous prompt tool evaluation approach that prioritised practical usability above hype in order to compile this list of the best prompt engineering tools for 2026. The objective was to assist teams in selecting a prompt tool that functions across the entire PromptOps lifecycle (development → testing → deployment → monitoring).

Evaluation Criteria:

- Active development in 2026 – Consistent updates and strong product roadmap

- Production-readiness – Proven use in real-world LLM applications

- Pricing transparency – Clear and scalable pricing models

- LLM integrations – Compatibility with major providers (OpenAI, Anthropic, open-source models)

- Community adoption – Strong developer ecosystem and industry usage

- End-to-end coverage – Ability to support development, testing, observability, and optimization

Top 12 AI tools for Prompt Engineering

1) LangSmith

LangSmith is a leading development and observability platform built by LangChain, designed for debugging, tracing, and optimizing LLM applications in production.

Key Features:

- End-to-end tracing of LLM calls and prompt chains

- Prompt versioning and Prompt Hub

- Debugging and replay capabilities

- Dataset-based evaluation

Pricing:

- Free tier available

- Paid plans start at ~$39/seat/month

Related Readings: Overview of LangChain Expression Language (LCEL) | K21Academy

2) Promptfoo

Promptfoo is an open-source testing framework for prompt engineering, widely used for CI/CD-based evaluation and red teaming.

Key Features:

- YAML-based test cases

- CI/CD integration

- Red teaming (jailbreak + toxicity testing)

- Multi-model comparisons

Pricing:

- Free (open-source)

3) Helicone

Helicone focuses on monitoring LLM usage, cost, and latency in real time—critical for production systems.

Key Features:

- Request logging and analytics

- Cost tracking per prompt

- Rate limiting

- Performance dashboards

Pricing:

- Free tier available

- Paid plans typically usage-based (~$20–$100+/month)

4) Langfuse

Langfuse is an open-source observability and prompt management platform ideal for teams needing full control and self-hosting.

Key Features:

- Prompt versioning + CMS

- Cost, latency, and token tracking

- Evaluation with human + LLM scoring

- Self-hostable (MIT license)

Pricing:

- Free tier available

- Cloud plans from $29–$199/month

5) Microsoft Azure AI Foundry

Enterprise platform for building, testing, and deploying AI systems at scale.

Key Features:

- Prompt flow pipelines

- Integration with Azure OpenAI

- Governance and compliance

- Enterprise security

Pricing:

- Pay-as-you-go

6) PromptLayer

PromptLayer acts as a middleware layer over LLM APIs, enabling prompt tracking, versioning, and evaluation.

Key Features:

- Prompt registry and version control

- Evaluation workflows

- Cost and usage tracking

- Visual prompt editor

Pricing:

- Free tier available

- Paid plans start ~$49/month

7) Anthropic Console

Anthropic’s interface for testing prompts on Claude models.

Key Features:

- Prompt iteration

- Safety testing

- Model comparison

- API integration

Pricing:

- Usage-based

8) LlamaIndex

LlamaIndex helps structure data for LLMs and optimize prompts using context pipelines.

Key Features:

- RAG pipelines

- Data connectors

- Prompt orchestration

- Query optimization

Pricing:

- Open-source + paid cloud

9) Arize AI

Arize Phoenix provides advanced observability and evaluation for LLM and ML systems.

Key Features:

- Hallucination detection

- Model evaluation dashboards

- Data drift monitoring

- Root cause analysis

Pricing:

- Free + enterprise pricing (custom)

10) DSPy

DSPy treats prompts as programs, enabling automated optimization using feedback loops.

Key Features:

- Declarative prompt pipelines

- Automatic optimization

- Dataset-driven tuning

- Modular architecture

Pricing:

- Open-source (free)

11) OpenAI Playground

A simple interface for testing prompts across OpenAI models.

Key Features:

- Quick experimentation

- Parameter tuning

- Multi-model testing

- Easy UI

Pricing:

- Pay-per-token

12) Flowise

Flowise is a low-code tool for building LLM apps with visual prompt flows.

Key Features:

- Drag-and-drop interface

- LangChain integration

- Prompt chaining

- Open-source flexibility

Pricing:

- Free (open-source)

| Tool | Category | Pricing (Free Tier?) | Open Source | Best For | Our Rating |

|---|---|---|---|---|---|

| LangSmith | Dev + Observability | Free tier ✓ / ~$39+ | ❌ | Debugging, tracing, production apps | ⭐⭐⭐⭐⭐ |

| Promptfoo | Testing | Free ✓ | ✅ | CI/CD testing, red teaming | ⭐⭐⭐⭐⭐ |

| Helicone | Observability | Free tier ✓ / usage-based | ❌ | Cost + latency monitoring | ⭐⭐⭐⭐☆ |

| Langfuse | Observability + Dev | Free tier ✓ / $29+ | ✅ | Open-source prompt tracking | ⭐⭐⭐⭐⭐ |

| Microsoft Azure AI Foundry | Enterprise | Pay-as-you-go (No free tier) | ❌ | Enterprise AI systems | ⭐⭐⭐⭐⭐ |

| PromptLayer | Dev + Observability | Free tier ✓ / $49+ | ❌ | Prompt versioning & logging | ⭐⭐⭐⭐☆ |

| Anthropic Console | Development | Usage-based (Limited free credits) | ❌ | Claude prompt testing | ⭐⭐⭐⭐☆ |

| LlamaIndex | Framework | Free ✓ / paid cloud | ✅ | RAG + data pipelines | ⭐⭐⭐⭐⭐ |

| Arize AI | Observability + Evaluation | Free ✓ / enterprise | ❌ | Evaluation + hallucination detection | ⭐⭐⭐⭐⭐ |

| DSPy | Framework + Optimization | Free ✓ | ✅ | Prompt optimization via code | ⭐⭐⭐⭐⭐ |

| OpenAI Playground | Development | Pay-per-token (Free credits sometimes) | ❌ | Quick prompt testing | ⭐⭐⭐⭐☆ |

| Flowise | Framework (Low-code) | Free ✓ | ✅ | Visual prompt workflows | ⭐⭐⭐⭐☆ |

FAQs

How to use GPT-4 API for prompt engineering?

GPT-4 API allows for crafting detailed and context-aware prompts to optimize the performance of AI-driven applications. Developers can fine-tune the model’s outputs by adjusting the inputs, ensuring relevant and precise responses.

Which prompt engineering tool is best for enterprise AI projects?

Microsoft Azure OpenAI Service is ideal for enterprise-level AI applications, offering secure, scalable access to OpenAI models while ensuring compliance with industry standards.

What is the best AI tool for prompt?

The OpenAI GPT-4 API is widely regarded as one of the most powerful tools for it, thanks to its ability to generate contextually accurate and nuanced responses.

Conclusion

It is at the core of creating highly effective and tailored AI solutions. With the right tools, developers can refine their AI model’s output, making it more accurate, contextually aware, and suitable for specific use cases. The top tools listed in this blog are revolutionizing how we interact with AI, whether for content generation, conversational agents, or creative projects.

As AI technologies continue to evolve, mastering it and utilizing the best tools will help you stay ahead of the curve and create powerful, scalable AI solutions. Start experimenting with these tools today and unlock the full potential of your AI projects.

![AWS DevOps [DOP-C02] Professional Step By Step Activity Guides (Hands-On Labs)](https://k21academy.com/wp-content/uploads/2023/02/DOP-C02-1.png)