In this blog, we cover what Azure Data Factory is, how it works, and how to build a copy pipeline in Azure Data Factory.

Topics we’ll cover:

- Azure Data Factory

- How Azure Data Factory Works

- What are Pipelines

- Copy Activity In Azure Data Factory

- Copy Pipeline In Azure Data Factory

What Is Azure Data Factory?

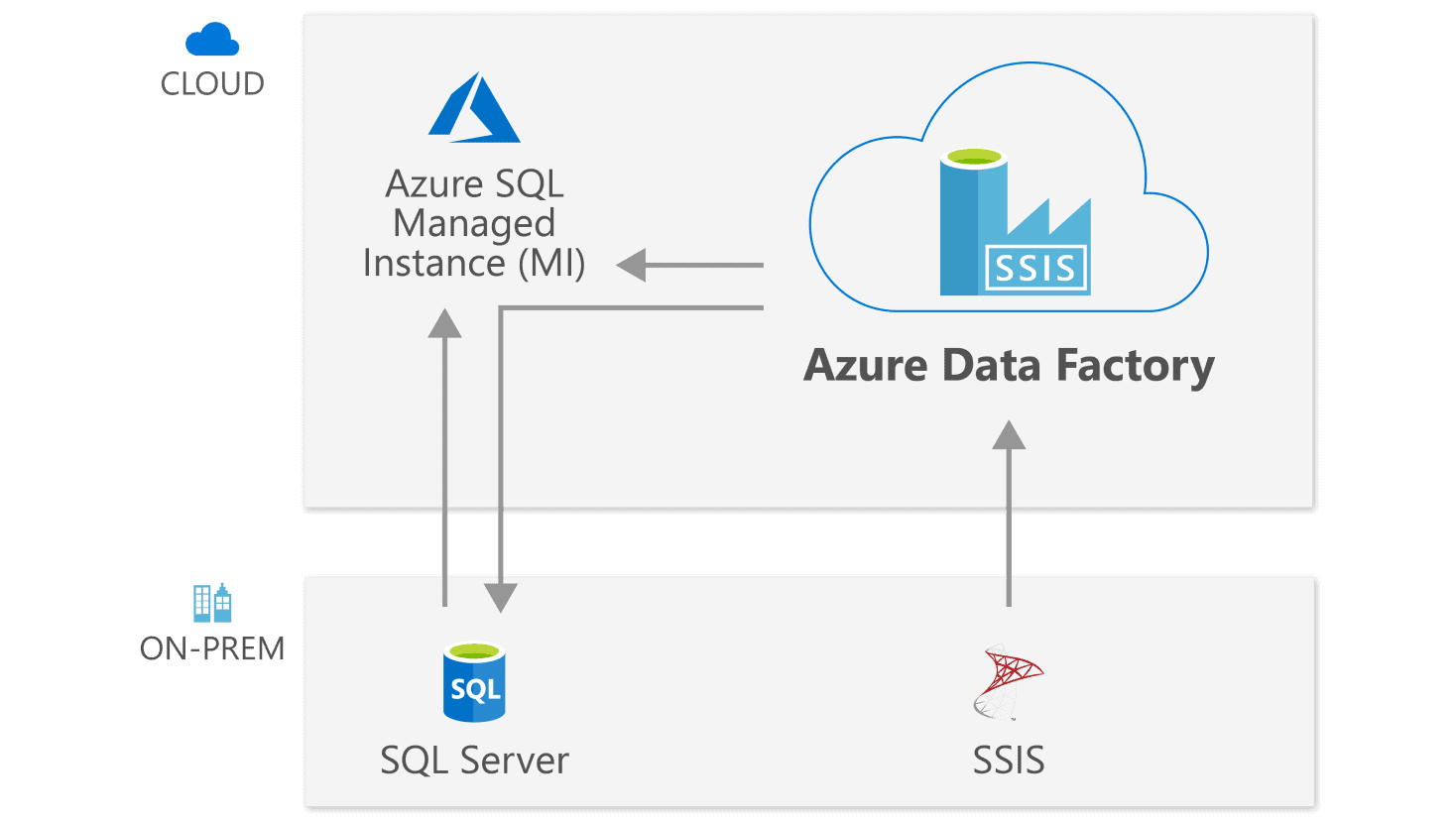

Azure Data Factory is a cloud-based data integration service that allows you to create data-driven workflows in the cloud for orchestrating and automating data movement and data transformation.

Source: Microsoft

Azure Data Factory does not store any data itself. It allows you to create data-driven workflows that orchestrate the movement of data between supported data stores and the processing of that data using compute services in other regions or in an on-premises environment. It also allows you to monitor and manage workflows through both programmatic and UI mechanisms.

You can check out our related blog here: Azure Data Factory for Beginners

How Does Data Factory work?

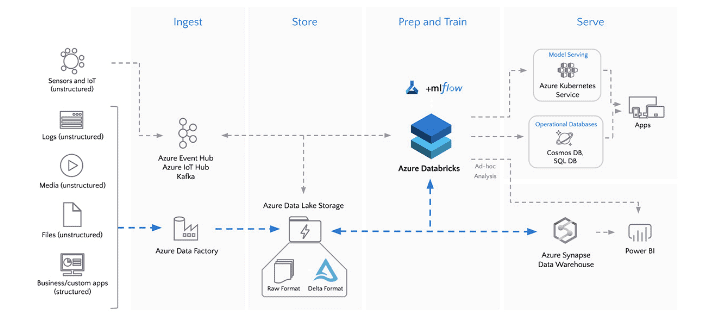

1) Extract: In the extraction step, data engineers define the data and its source. Data source: identify source details such as the subscription, the resource group, and identity information such as a secret or a key. Data: define the data by using a set of files, a database query, or an Azure Blob storage name for blob storage.

2) Transform: Data transformation operations can include combining, splitting, adding, deriving, removing, or pivoting columns. Map fields between the data destination and the data source.

3) Load: During a load, many Azure destinations can take data formatted as a file, JavaScript Object Notation (JSON), or blob. Test the ETL job in a test environment. Then shift the job to a production environment to load the production system.

4) Publish: Deliver transformed data from the cloud to on-premises stores such as SQL Server, or keep it in your cloud storage for consumption by BI and analytics tools and other applications.
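The four stages above can be sketched in plain Python to make the flow concrete. This is only an illustration of the extract, transform, load, and publish idea, not Data Factory's own API; the sample CSV, the column names, and the in-memory `store` dictionary are all assumptions made for the example.

```python
import csv
import io
import json

# Hypothetical sample input standing in for a source file or query result.
SOURCE_CSV = """first_name,last_name,city
Ada,Lovelace,London
Alan,Turing,Wilmslow
"""

def extract(raw_text):
    """Extract: read rows from the source (here, an in-memory CSV)."""
    return list(csv.DictReader(io.StringIO(raw_text)))

def transform(rows):
    """Transform: derive a full_name column from the two name columns."""
    return [
        {"full_name": f"{r['first_name']} {r['last_name']}", "city": r["city"]}
        for r in rows
    ]

def load(rows):
    """Load: serialize the rows as JSON, one format many destinations accept."""
    return json.dumps(rows, indent=2)

def publish(payload, destination):
    """Publish: hand the payload to a consumer (a dict stands in for storage)."""
    destination["people.json"] = payload
    return destination

store = publish(load(transform(extract(SOURCE_CSV))), {})
print(store["people.json"])
```

In Data Factory each of these functions would correspond to configuration (linked services, datasets, activities) rather than hand-written code.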

Read: Difference between Structured Vs Unstructured Data

What are Pipelines?

A pipeline is a logical grouping of activities that together perform a task. For example, a pipeline could contain a set of activities that ingest and clean log data, and then kick off a mapping data flow to analyze the log data. The pipeline allows you to manage the activities as a set instead of each one individually. You deploy and schedule the pipeline instead of the activities independently.
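As a rough sketch of that grouping, the snippet below builds a pipeline definition as a Python dictionary in the shape of Data Factory's pipeline JSON (`name`, `properties.activities`, and `dependsOn` entries). The pipeline and activity names are hypothetical, and each activity is a skeleton: a real definition would also carry `typeProperties` and dataset references.

```python
import json

def activity(name, activity_type, depends_on=()):
    """Build a skeleton activity; depends_on names activities that must succeed first."""
    return {
        "name": name,
        "type": activity_type,
        "dependsOn": [
            {"activity": dep, "dependencyConditions": ["Succeeded"]}
            for dep in depends_on
        ],
    }

# Hypothetical pipeline mirroring the log example: ingest, clean, then analyze.
pipeline = {
    "name": "LogProcessingPipeline",
    "properties": {
        "activities": [
            activity("IngestLogs", "Copy"),
            activity("CleanLogs", "ExecuteDataFlow", depends_on=["IngestLogs"]),
            activity("AnalyzeLogs", "ExecuteDataFlow", depends_on=["CleanLogs"]),
        ]
    },
}

print(json.dumps(pipeline, indent=2))
```

Because the three activities live in one pipeline, they are deployed and scheduled together, which is the point the paragraph above makes.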

Copy Activity In Azure Data Factory

In ADF, we can use the Copy activity to copy data between data stores located on-premises and in the cloud. After we copy the data, we can use other activities to further transform and analyze it. We can also use the Copy activity to publish transformation and analysis results for business intelligence (BI) and application consumption.

- Monitor Copy Activity: We can monitor all of our pipeline runs natively in the ADF user experience.

- Delete Activity In Azure Data Factory: Back up your files before deleting them with the Delete activity, in case you need to restore them in the future.
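To show what a Copy activity looks like once defined, here is a minimal sketch of its JSON shape built as a Python dictionary. It assumes a SQL Server source and an Azure SQL Database sink, matching the walkthrough later in this post; the activity and dataset names are hypothetical.

```python
import json

def dataset_ref(name):
    """Reference a dataset by name, as activities do in their inputs and outputs."""
    return {"referenceName": name, "type": "DatasetReference"}

# Hypothetical names; the source and sink types assume SQL Server -> Azure SQL Database.
copy_activity = {
    "name": "CopyPersonTable",
    "type": "Copy",
    "inputs": [dataset_ref("SqlServerPersonDataset")],
    "outputs": [dataset_ref("AzureSqlPersonDataset")],
    "typeProperties": {
        "source": {"type": "SqlServerSource"},
        "sink": {"type": "AzureSqlSink"},
    },
}

def summarize(activity):
    """Return a one-line description of where the activity copies from and to."""
    props = activity["typeProperties"]
    return f"{activity['name']}: {props['source']['type']} -> {props['sink']['type']}"

print(summarize(copy_activity))
print(json.dumps(copy_activity, indent=2))
```

The source and sink types change with the data stores involved; the input and output datasets tell the activity which table or file to read and write.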

Copy Pipeline In Azure Data Factory

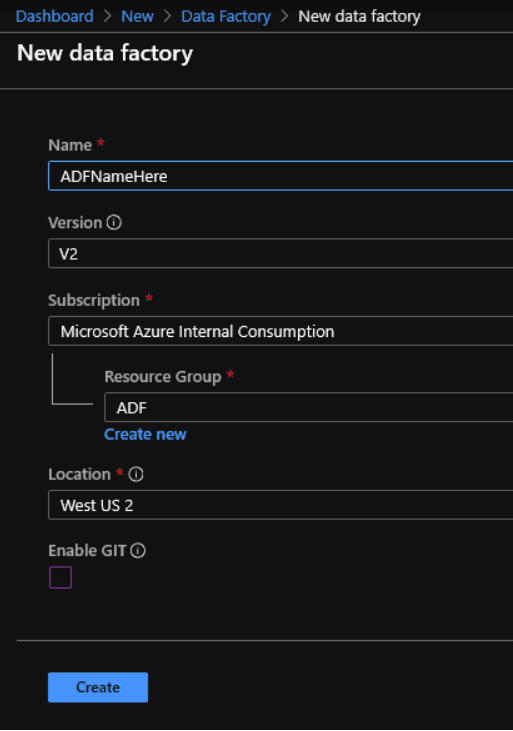

1.) Create Data Factory

1. Go to portal.azure.com and click the Create Resource menu item from the top left menu. Create a new Data Factory.

2. Fill in the fields similar to below.

3. Once your data factory is set up, open it in Azure and click the Author and Monitor button.

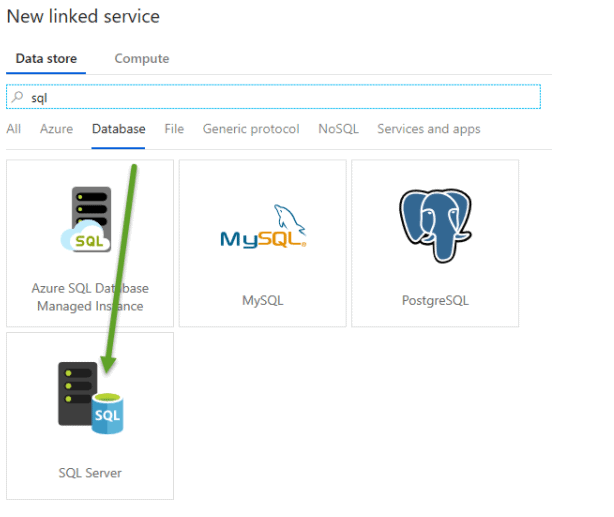

4. Click the Connections menu item at the bottom left, pick the Database category, and then click SQL Server.

5. Create the new linked service and make sure to test the connection before you proceed.
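The linked service created in the UI is stored as a JSON definition. The sketch below builds a comparable definition in Python for a SQL Server linked service. The linked service name, the integration runtime name, and the connection string placeholders are assumptions for illustration; whether you need a self-hosted integration runtime depends on how your SQL Server is reachable from Azure.

```python
import json

def sql_server_linked_service(name, connection_string, integration_runtime=None):
    """Build a SQL Server linked service definition as a plain dictionary."""
    body = {
        "name": name,
        "properties": {
            "type": "SqlServer",
            "typeProperties": {"connectionString": connection_string},
        },
    }
    if integration_runtime:
        # Route the connection through a named integration runtime (assumed to exist).
        body["properties"]["connectVia"] = {
            "referenceName": integration_runtime,
            "type": "IntegrationRuntimeReference",
        }
    return body

# Placeholders only: replace the angle-bracket values with your own settings.
linked_service = sql_server_linked_service(
    name="SqlServerOnVm",
    connection_string=(
        "Data Source=<server>;Initial Catalog=<database>;"
        "User ID=<user>;Password=<password>;"
    ),
    integration_runtime="MySelfHostedIR",
)

print(json.dumps(linked_service, indent=2))
```

In practice, avoid keeping the password in plain text in a definition like this; the placeholder is there only to show where the credential goes.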

2.) Create SQL Database

1. Go to portal.azure.com and click the Create Resource menu item from the top left menu. Create an Azure SQL Database.

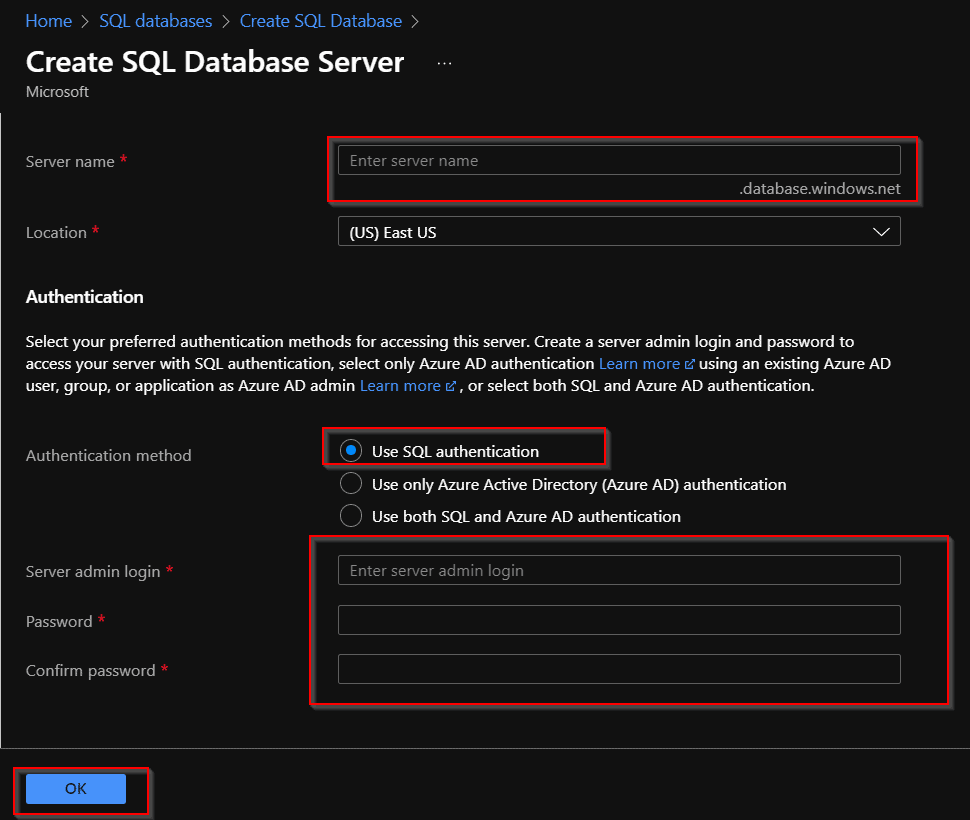

2. Fill in the fields on the first screen similar to below. For the new server (a logical server that groups databases rather than a physical machine), choose an admin ID and password that you will remember.

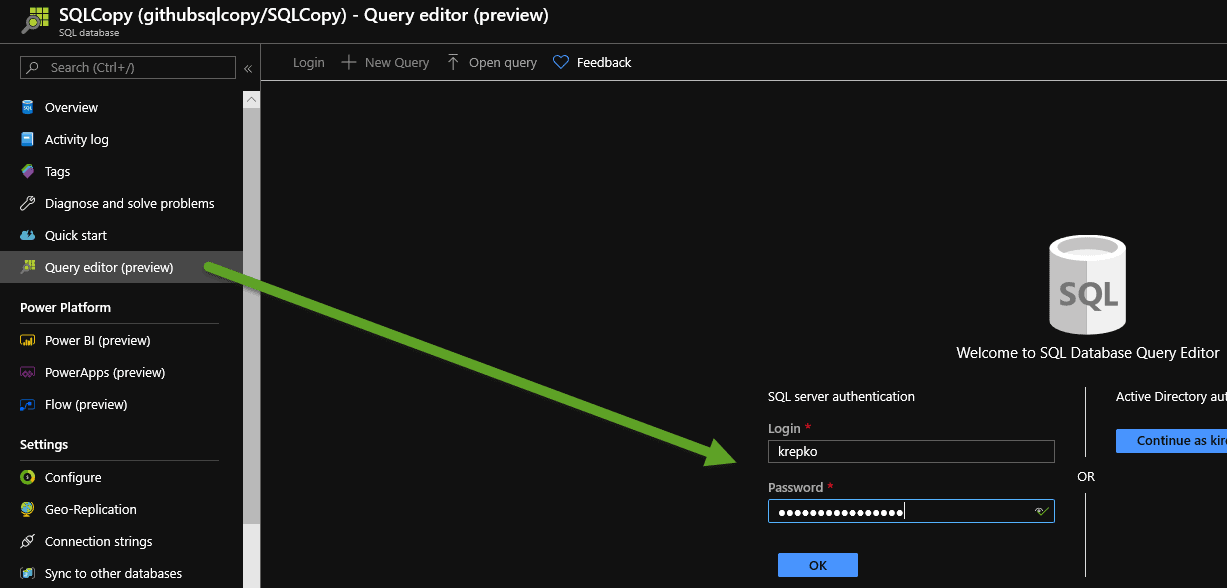

3. Now open the Query editor and log in with your SQL credentials, which are the admin ID and password.

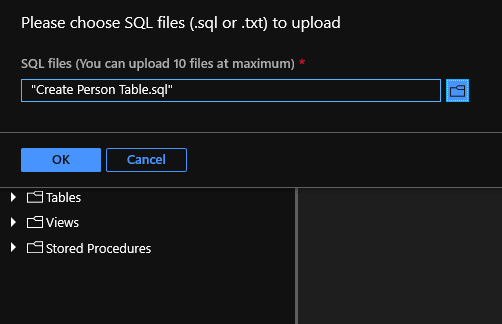

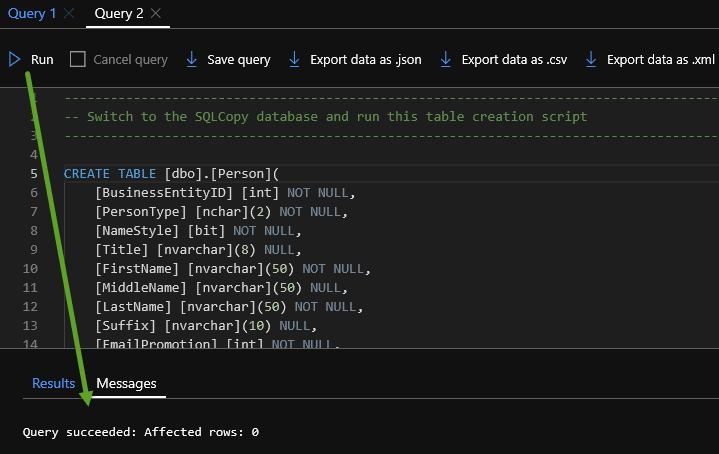

4. There are two ways to get the SQL script that creates the destination table: open “Create Person Table.SQL” in GitHub and copy and paste it into the Query editor, or copy the file to your laptop first.

5. Run the query and your Person table should be created.

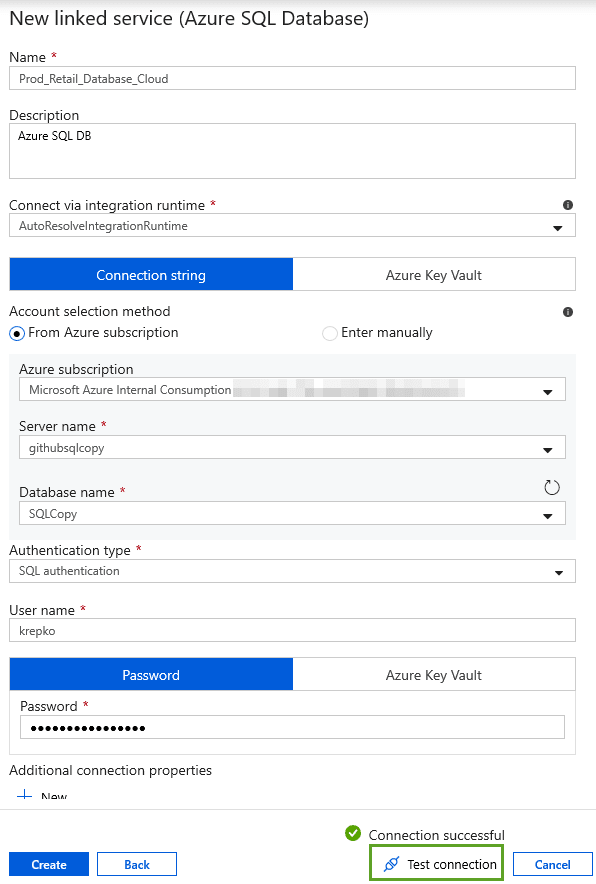

3.) Create a Linked Service

1. Go back to Data Factory, click the Author item, and then click the Connections menu at the bottom left. Create a new Linked Service for the Azure SQL DB you created earlier, using the admin ID and password you chose when you set up the Azure SQL DB.

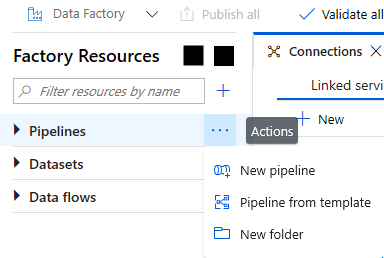

2. Now in Azure Data Factory click the ellipses next to Pipelines and create a new folder to keep things organized.

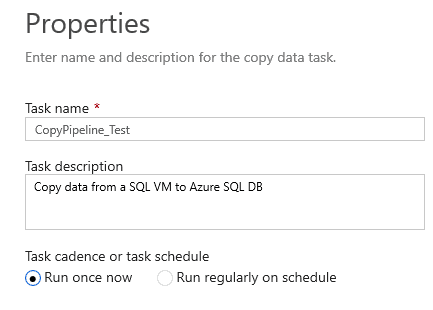

3. Click the + icon to the right of the “Filter resources by name” input box and pick the Copy Data option.

4. When working in a wizard like the Copy Wizard, or when creating pipelines from scratch, give a descriptive name to each pipeline, linked service, dataset, and other component so they are easier to work with later.

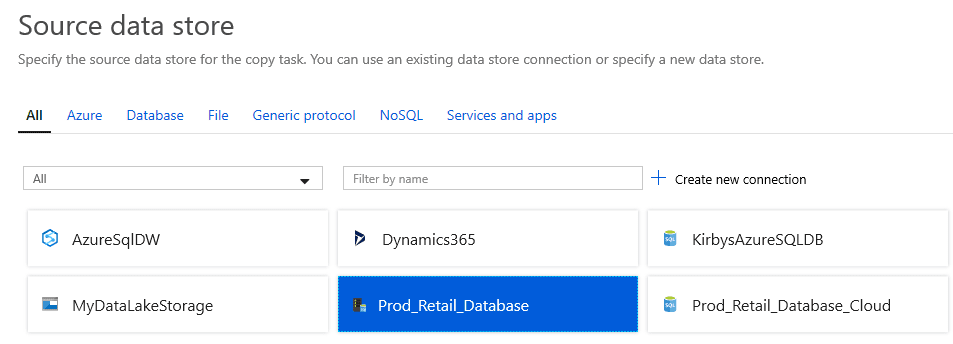

5. Click Next and pick the Person.Person table.

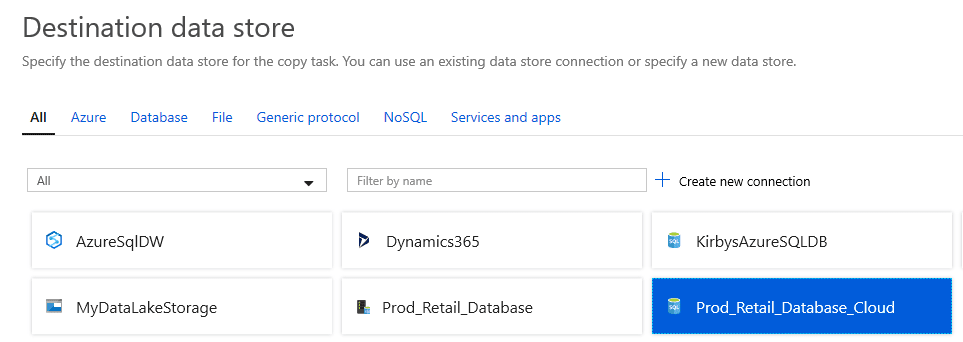

6. Click Next twice, then for your destination pick the Azure SQL DB connection you created earlier and click Next.

7. Then pick the Person table as the destination, leave the default column mapping, and click Next a few times until you reach the screen that says Deployment Complete.
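The destination side configured in the steps above can be sketched as two JSON definitions: an Azure SQL Database linked service and a dataset that points at the Person table. All names and connection string placeholders here are hypothetical, and the `dbo` schema is an assumption because the post does not state which schema the destination table uses.

```python
import json

# Placeholders only: replace the angle-bracket values with your own settings.
azure_sql_linked_service = {
    "name": "AzureSqlDbLinkedService",
    "properties": {
        "type": "AzureSqlDatabase",
        "typeProperties": {
            "connectionString": (
                "Server=tcp:<server>.database.windows.net,1433;"
                "Database=<database>;User ID=<admin-id>;Password=<password>;"
            )
        },
    },
}

# The dataset names the table and refers to the linked service for connectivity.
person_dataset = {
    "name": "AzureSqlPersonDataset",
    "properties": {
        "type": "AzureSqlTable",
        "linkedServiceName": {
            "referenceName": azure_sql_linked_service["name"],
            "type": "LinkedServiceReference",
        },
        # Assumed schema; adjust to match the table created by your script.
        "typeProperties": {"schema": "dbo", "table": "Person"},
    },
}

print(json.dumps(person_dataset, indent=2))
```

The split mirrors what you named in the wizard: the linked service says how to connect, and the dataset says which table to write to.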

4.) Monitoring

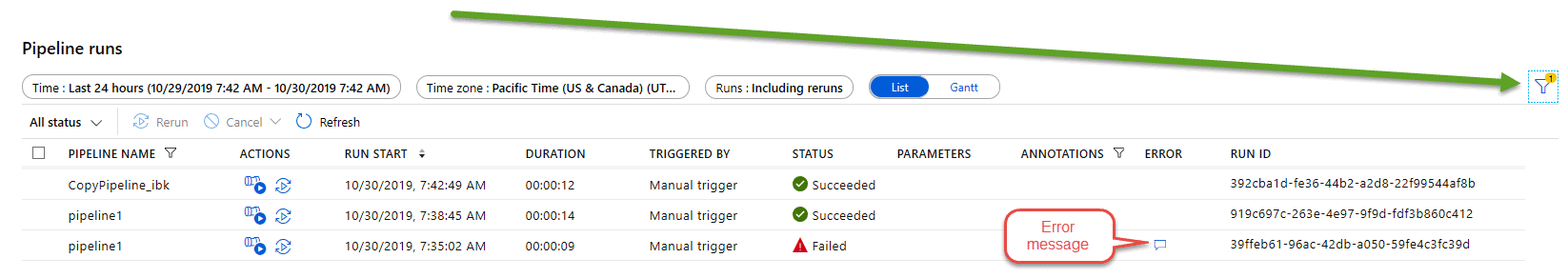

1. Now click the Monitor button to see your pipeline job running. You should see a screen similar to below. If you don’t see your pipeline job, check your filters on the top right.
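Monitoring can also be done programmatically by polling a run's status until it finishes. The sketch below shows the polling pattern with a stand-in status function so it runs without an Azure connection. `wait_for_run` and `fake_statuses` are hypothetical helpers; with the `azure-mgmt-datafactory` package, the status would likely come from something like `client.pipeline_runs.get(resource_group, factory_name, run_id).status`.

```python
import time

# Statuses after which a pipeline run will not change any further.
TERMINAL_STATUSES = {"Succeeded", "Failed", "Cancelled"}

def wait_for_run(get_status, poll_seconds=15.0, max_polls=40, sleep=time.sleep):
    """Poll get_status() until a terminal status is seen or max_polls is reached."""
    status = get_status()
    polls = 1
    while status not in TERMINAL_STATUSES and polls < max_polls:
        sleep(poll_seconds)
        status = get_status()
        polls += 1
    return status

# Stand-in for a real status lookup: the run moves through these states in order.
fake_statuses = iter(["Queued", "InProgress", "InProgress", "Succeeded"])
final = wait_for_run(lambda: next(fake_statuses), poll_seconds=0)
print(final)
```

If the polls run out first, the function returns the last non-terminal status it saw, so the caller can decide whether to keep waiting.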

You now know how to create a pipeline that copies data from SQL Server on a VM into Azure SQL Database (Platform as a Service).


Next Task For You

In our Azure Data Engineer training program, we cover 28 Hands-On Labs. If you want to begin your journey towards becoming a Microsoft Certified: Azure Data Engineer Associate, check out our FREE CLASS.
