This blog post walks through the step-by-step Activity Guides and Real-Time Projects in our AWS Certified DevOps Engineer Professional (DOP-C02) training program. Complete them to get the most out of the course.

Note: Learn more about the AWS Certified DevOps Engineer Professional (DOP-C02) certification.

Here’s a quick preview of how to start preparing for the AWS Certified DevOps Engineer Professional (DOP-C02) exam through hands-on practice.

Labs included in our AWS Certified DevOps Engineer Professional (DOP-C02) training:

- Create an AWS Free Tier Account

- CloudWatch – Create Billing Alarm & Service Limits

- Create & Connect to a Windows EC2 Instance

- Install & Configure AWS CLI, Set Up Git, Node.js & SDK

- Working with AWS CodeCommit

- Build an Application with AWS CodeBuild

- Create a Simple Pipeline (CodePipeline)

- Create and Update Stacks using CloudFormation

- Blue-Green Deployments Using Elastic Beanstalk

- Setting Up AWS Config to Assess, Audit & Evaluate AWS Resources

- Build an API Gateway with Lambda Integration

- Event-Driven Architectures Using AWS Lambda, SES, SNS, and SQS

- Enable CloudTrail and Store Logs in S3

- Configure Amazon CloudWatch to Notify of a Change in EC2 CPU Utilization

- Install CloudWatch Agent on EC2 Instance & View CloudWatch Metrics

- Amazon Athena

- Using AWS Systems Manager for Patch Management

- AWS Organizations and Service Control Policies

- Classify sensitive data in your environment using Amazon Macie

- Amazon Inspector

- Visualize Web Traffic Using Kinesis Data Streams

- Block Web Traffic with WAF in AWS

- Configure a Load Balancer And Autoscaling on EC2 Instances

- Registering a Domain Name For Free

- Working with Route53 for Disaster Recovery

- Configuring a MySQL DB Instance via RDS

- Create & Manage Amazon Aurora Global Databases

- Create & Query with Amazon DynamoDB

- Process New Items with DynamoDB Streams and Lambda

- Real-Time Projects

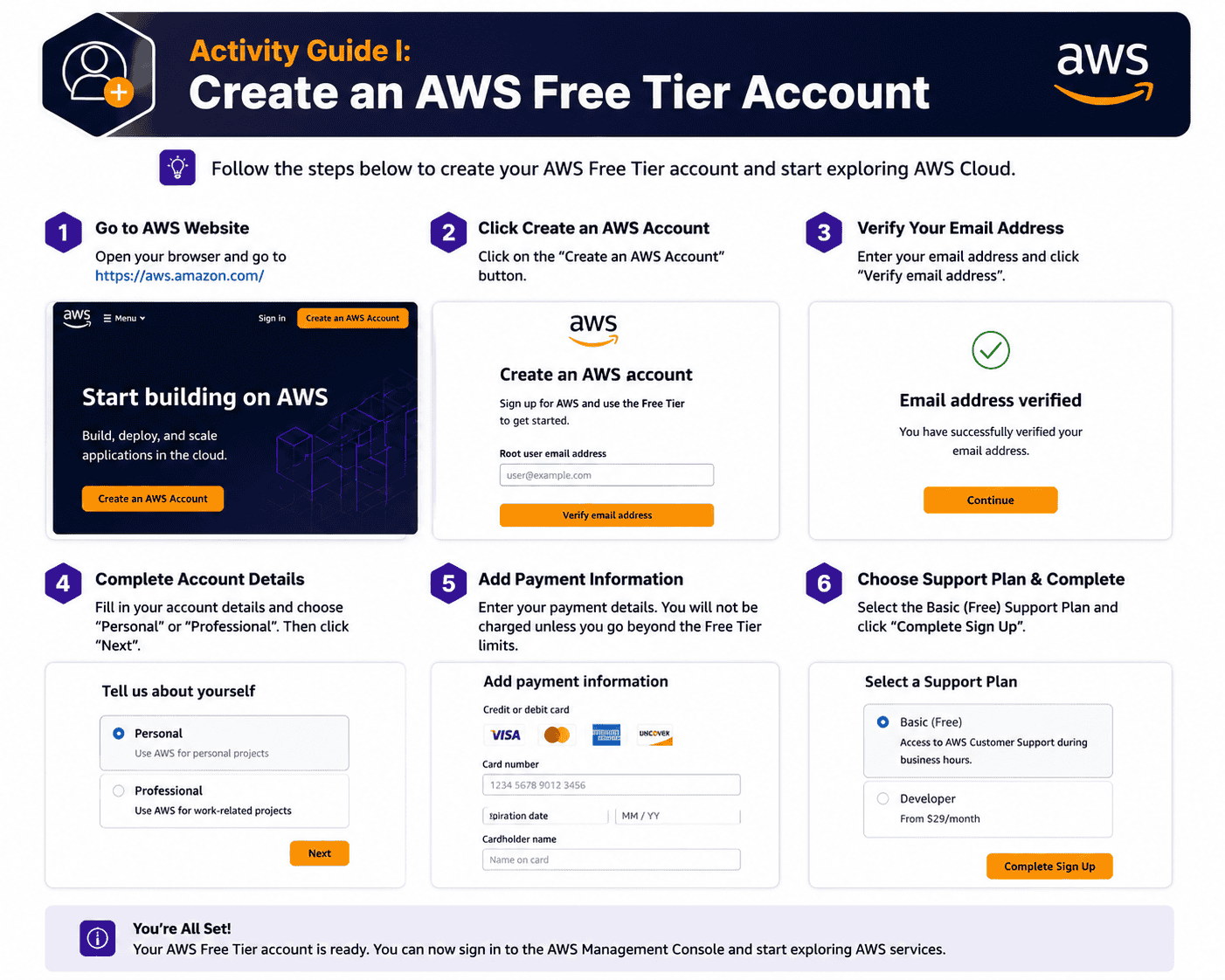

Activity Guide: Create an AWS Free Tier Account

Amazon Web Services (AWS) offers a Free Tier that gives new subscribers 6 months of hands-on access to most core services, with usage limits designed for learning rather than production workloads. If you are preparing for an AWS certification, this access is essential: it is hard to pass the exam reliably without practicing in a real account.

This lab covers signing up for AWS, validating your billing details, and confirming free tier eligibility on the AWS Management Console.

What you’ll learn:

- How to register for an AWS Free Tier account

- How to verify your account and set up MFA on the root user

- How to navigate the AWS Management Console for the first time

📘 Detailed walkthrough: How to Create an AWS Free Tier Account

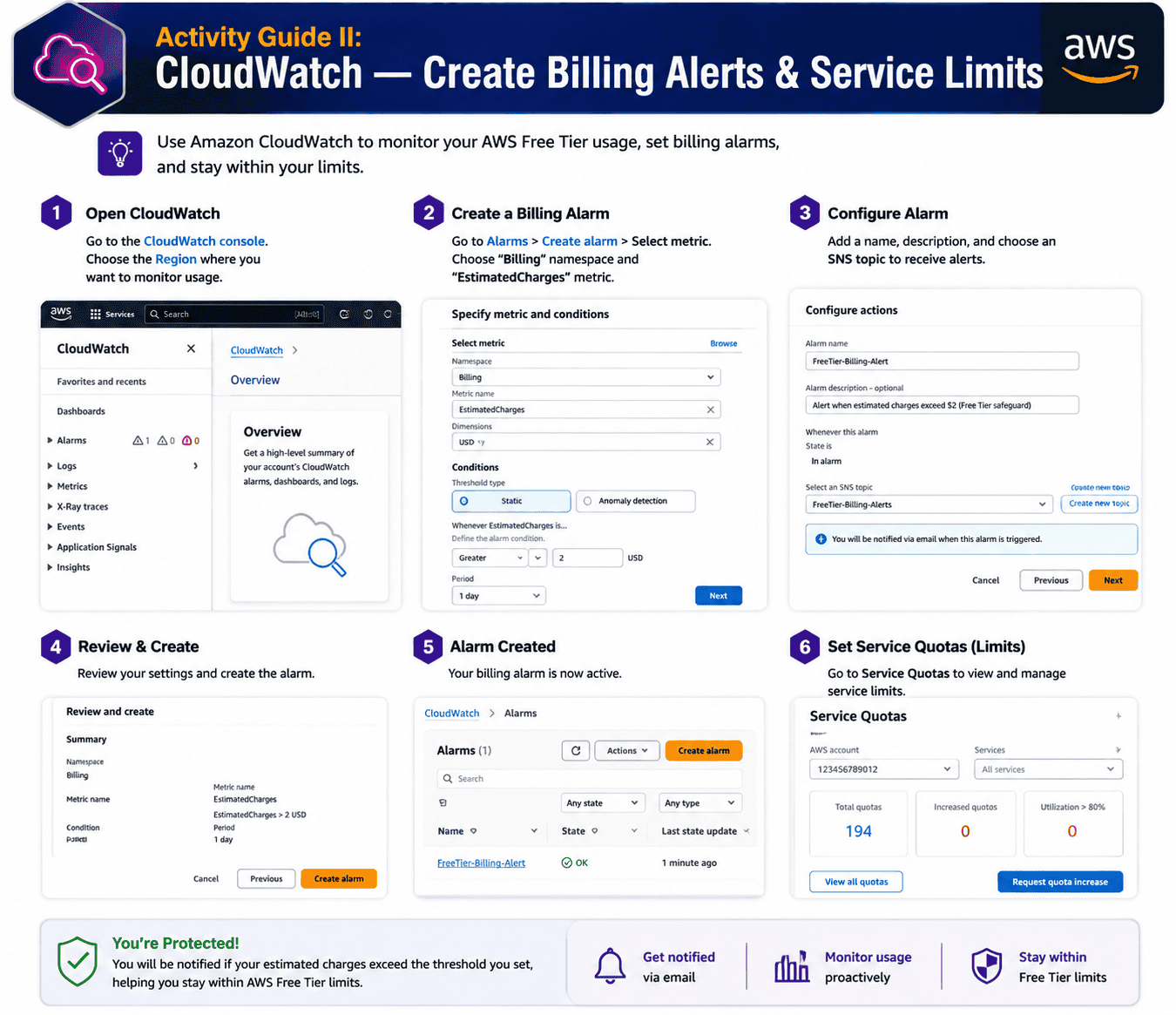

Activity Guide: CloudWatch – Create Billing Alerts & Service Limits

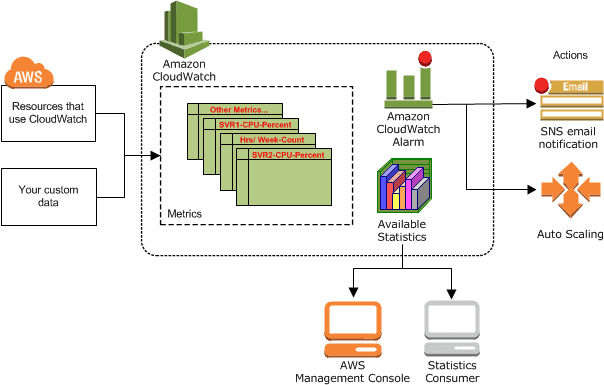

Even on the Free Tier, accidental overuse can result in a surprise bill. Amazon CloudWatch lets you create billing alarms that notify you when your estimated charges cross a threshold, a critical Day 1 setup for any AWS learner.

CloudWatch monitors AWS resources and applications, collects metrics and logs, and can trigger automated actions. In this lab, you’ll set a $10 billing alarm and configure SNS to email you when it fires.
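The alarm configured in this lab can be expressed as the arguments of the CloudWatch `PutMetricAlarm` API. The sketch below only builds those arguments; it assumes billing alerts are already enabled for the account, and the function names, alarm name, and SNS topic ARN are hypothetical placeholders. Billing metrics are published in the US East (N. Virginia) Region, which is why the client is pinned to `us-east-1`.

```python
"""Minimal sketch: arguments for a CloudWatch billing alarm (names are hypothetical)."""


def billing_alarm_params(threshold_usd: float, sns_topic_arn: str,
                         alarm_name: str = "billing-alarm-10-usd") -> dict:
    """Return keyword arguments for CloudWatch PutMetricAlarm on EstimatedCharges."""
    if threshold_usd <= 0:
        raise ValueError("threshold must be positive")
    return {
        "AlarmName": alarm_name,
        "Namespace": "AWS/Billing",            # namespace that holds billing metrics
        "MetricName": "EstimatedCharges",
        "Dimensions": [{"Name": "Currency", "Value": "USD"}],
        "Statistic": "Maximum",
        "Period": 21600,                        # 6 hours; estimated charges update a few times a day
        "EvaluationPeriods": 1,
        "Threshold": float(threshold_usd),
        "ComparisonOperator": "GreaterThanThreshold",
        "AlarmActions": [sns_topic_arn],        # SNS topic with your email subscription
    }


def create_billing_alarm(params: dict) -> None:
    """Create the alarm with boto3 (needs credentials); not called by the builder above."""
    import boto3  # lazy import so the builder works without boto3 installed
    boto3.client("cloudwatch", region_name="us-east-1").put_metric_alarm(**params)
```

For the $10 alarm in this lab, you would call `billing_alarm_params(10, "<your SNS topic ARN>")` and pass the result to `create_billing_alarm`.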

What you’ll learn:

- How to enable AWS billing alerts in your account

- How to create a CloudWatch alarm tied to your AWS account billing metric

- How to configure SNS email notifications for the alarm

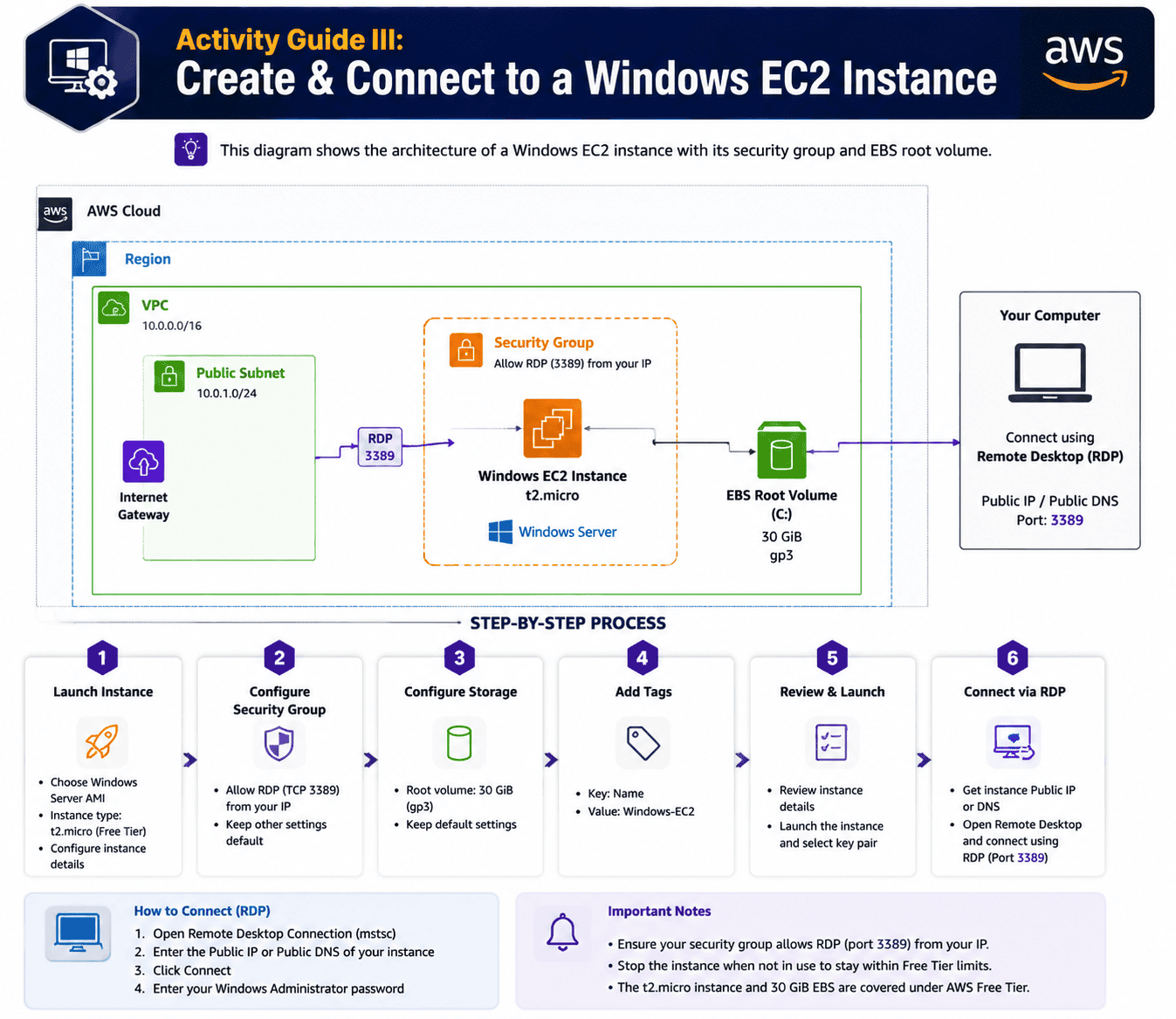

Activity Guide: Create & Connect to a Windows EC2 Instance

Amazon EC2 (Elastic Compute Cloud) provides resizable virtual servers in the AWS Cloud. EC2 underpins many of the deployment, monitoring, and automation scenarios covered in the DevOps Professional exam, so getting comfortable with launching, connecting to, and terminating instances pays off across the entire test.

This lab walks through launching a Windows Server EC2 instance, configuring its security group, generating a key pair, and connecting via Remote Desktop (RDP).

What you’ll learn:

- How to launch a Windows EC2 instance from an AMI

- How to configure security groups and key pairs

- How to connect to your instance using RDP
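The security group step can be sketched as the `IpPermissions` structure that EC2's `AuthorizeSecurityGroupIngress` API accepts. This minimal sketch assumes you want RDP (TCP port 3389) open only to your own public IPv4 address; the function names and the example address are hypothetical.

```python
"""Minimal sketch: allow RDP only from one IPv4 address (names are hypothetical)."""
import ipaddress

RDP_PORT = 3389  # Remote Desktop Protocol listens on TCP 3389


def rdp_ingress_rule(my_ip: str) -> list:
    """Build IpPermissions that allow RDP from a single address as a /32 CIDR."""
    addr = ipaddress.IPv4Address(my_ip)  # raises ValueError for a malformed address
    return [{
        "IpProtocol": "tcp",
        "FromPort": RDP_PORT,
        "ToPort": RDP_PORT,
        "IpRanges": [{"CidrIp": f"{addr}/32", "Description": "RDP from my workstation"}],
    }]


def open_rdp(group_id: str, my_ip: str) -> None:
    """Apply the rule with boto3 (needs credentials); not called by the builder above."""
    import boto3
    boto3.client("ec2").authorize_security_group_ingress(
        GroupId=group_id, IpPermissions=rdp_ingress_rule(my_ip))
```

Restricting the source to a /32 rather than `0.0.0.0/0` keeps the Windows instance from being exposed to RDP scans from the whole Internet.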

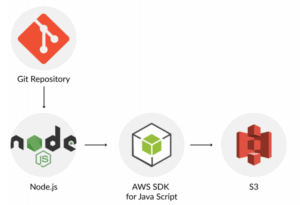

Activity Guide: Install & Configure AWS CLI, Set Up Git, Node.js & SDK

The AWS Command Line Interface (AWS CLI) is an open-source, unified tool that lets you interact with AWS services and resources using commands in your command-line shell. With minimal configuration, you can run commands from your favorite terminal program that provide functionality equivalent to the browser-based AWS Management Console.

In this activity guide, you will learn how to set up and use the AWS CLI, Git, Node.js, and the AWS SDKs.

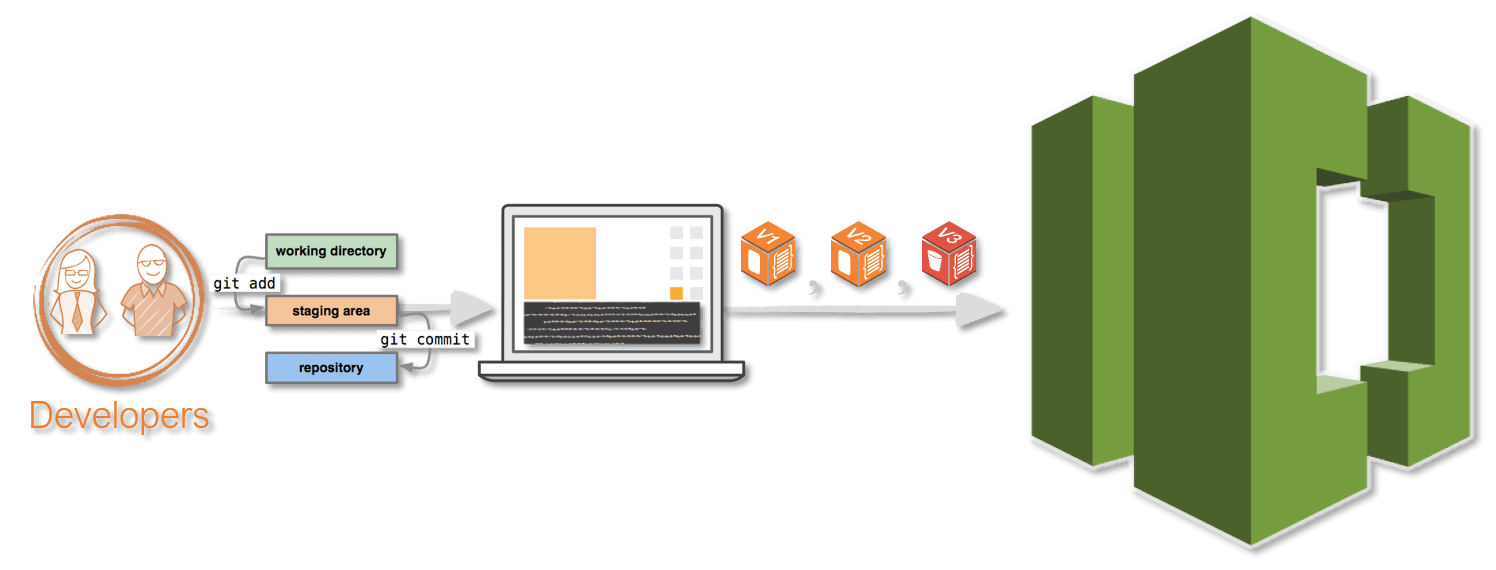

Activity Guide: Working with AWS CodeCommit

AWS CodeCommit is a fully managed source control service that hosts secure Git-based repositories. It makes it easy for teams to collaborate on code in a secure and highly scalable ecosystem. CodeCommit eliminates the need to operate your own source control system or worry about scaling its infrastructure. You can use CodeCommit to securely store anything from source code to binaries, and it works seamlessly with your existing Git tools.

In this activity guide, you will learn how to create an IAM user, sign in as that user, create a CodeCommit repository, and add files to the repository.

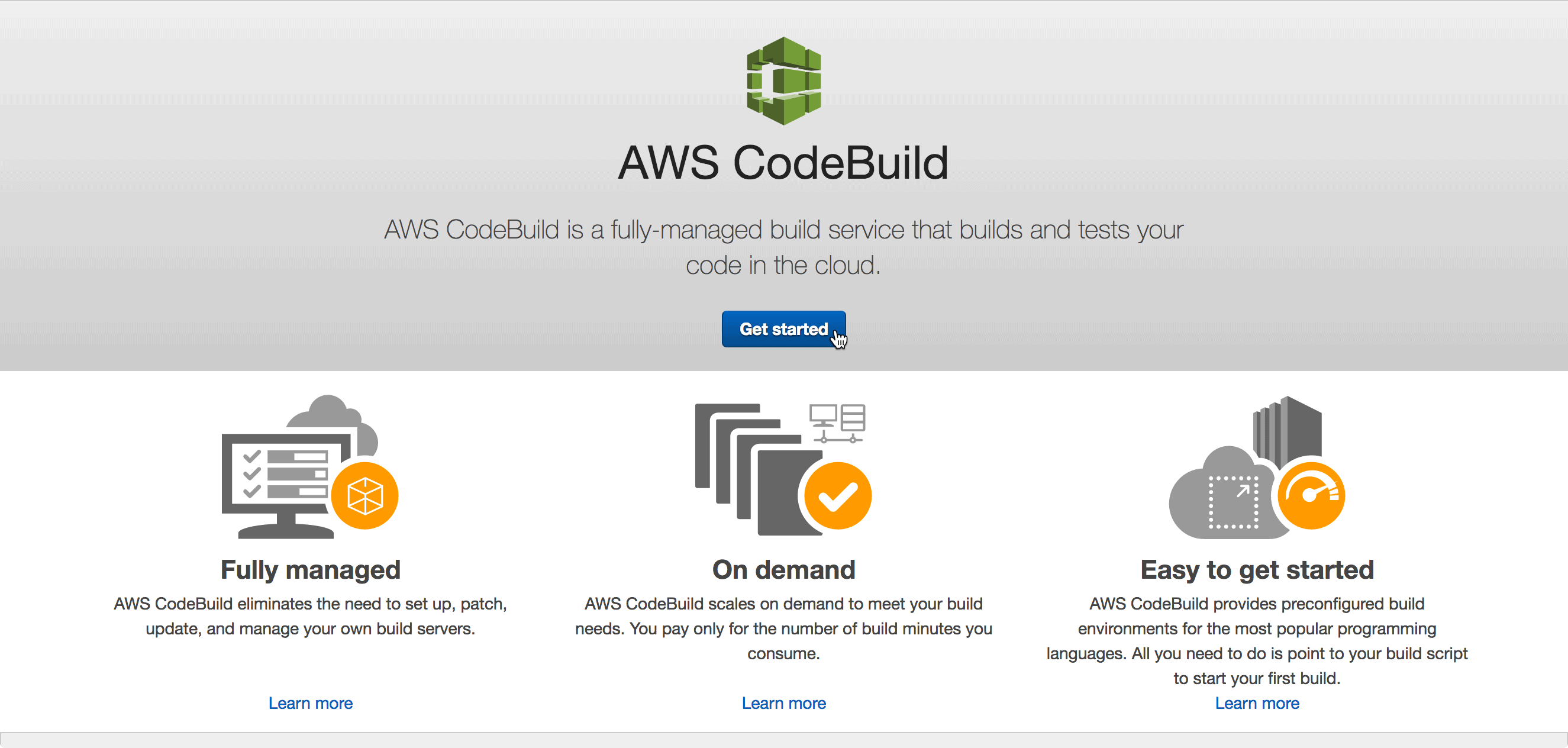

Activity Guide: Build an Application with AWS CodeBuild

AWS CodeBuild is a fully managed continuous integration service that compiles source code, runs tests, and produces software packages that are ready to deploy. With CodeBuild, you don’t need to provision, manage, and scale your own build servers. CodeBuild scales continuously and processes multiple builds concurrently, so your builds are not left waiting in a queue.

In this activity guide, you will learn to create two S3 buckets (one for the build input and one for the build output), write the source code, and upload the source code and the buildspec file.
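The two-bucket setup maps onto the `source` and `artifacts` settings of CodeBuild's `CreateProject` API, with the buildspec file placed at the root of the zipped source. This is a minimal sketch under stated assumptions: the bucket names, object key, role ARN, build commands, and the `aws/codebuild/standard:7.0` image choice are illustrative, not the lab's exact values.

```python
"""Minimal sketch: a CodeBuild project reading from one S3 bucket and writing to another."""

# Hypothetical buildspec.yml uploaded inside the source zip; replace the commands with your own.
BUILDSPEC = """version: 0.2
phases:
  build:
    commands:
      - echo "Running tests and packaging"
artifacts:
  files:
    - '**/*'
"""


def codebuild_project_params(name: str, input_bucket: str, source_key: str,
                             output_bucket: str, role_arn: str) -> dict:
    """Return keyword arguments for CodeBuild CreateProject."""
    return {
        "name": name,
        # S3 source location is "bucket/key" pointing at the zipped source code
        "source": {"type": "S3", "location": f"{input_bucket}/{source_key}"},
        # Build output is written to the second bucket
        "artifacts": {"type": "S3", "location": output_bucket},
        "environment": {
            "type": "LINUX_CONTAINER",
            "image": "aws/codebuild/standard:7.0",
            "computeType": "BUILD_GENERAL1_SMALL",
        },
        "serviceRole": role_arn,  # IAM role CodeBuild assumes to read and write S3
    }
```

With boto3, these arguments would be passed as `boto3.client("codebuild").create_project(**params)`, followed by `start_build(projectName=name)`.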

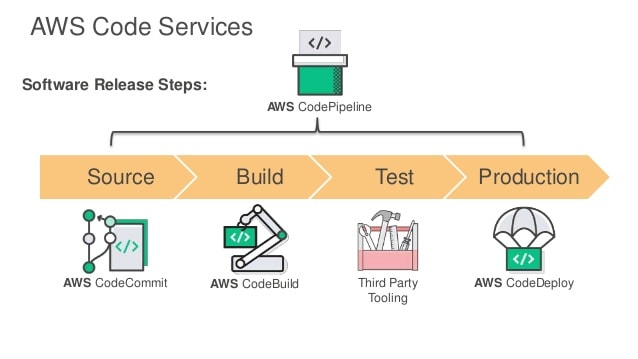

Activity Guide: Create a Simple Pipeline (CodePipeline)

AWS CodePipeline is a fully managed continuous delivery service that helps you automate your release pipelines for fast and reliable application and infrastructure updates.

CodePipeline automates the build, test, and deploy phases of your release process every time there is a code change, based on the release model you define. This enables you to rapidly and reliably deliver features and updates.

You can easily integrate AWS CodePipeline with third-party services such as GitHub or with your own custom plugin. With AWS CodePipeline, you only pay for what you use. There are no upfront fees or long-term commitments.

Check out our blog: Deploy Web App From S3 Bucket To EC2 Instance Using CodePipeline.

In this activity guide, you will learn to build a complete pipeline that automates the deployment of an application.
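A pipeline is a list of stages, each containing actions that pass artifacts to one another. The sketch below builds the `pipeline` structure for CodePipeline's `CreatePipeline` API with an S3 source stage and a CodeDeploy deploy stage, mirroring the S3-to-EC2 scenario mentioned above. It assumes an existing CodeDeploy application and deployment group; every name and ARN here is a hypothetical placeholder.

```python
"""Minimal sketch: a two-stage CodePipeline (S3 source -> CodeDeploy), names hypothetical."""


def pipeline_definition(name: str, role_arn: str, artifact_bucket: str,
                        source_bucket: str, source_key: str,
                        codedeploy_app: str, deployment_group: str) -> dict:
    source_action = {
        "name": "Source",
        "actionTypeId": {"category": "Source", "owner": "AWS", "provider": "S3", "version": "1"},
        # The S3 source action expects a versioned bucket holding the zipped app
        "configuration": {"S3Bucket": source_bucket, "S3ObjectKey": source_key},
        "outputArtifacts": [{"name": "SourceArtifact"}],
        "runOrder": 1,
    }
    deploy_action = {
        "name": "Deploy",
        "actionTypeId": {"category": "Deploy", "owner": "AWS", "provider": "CodeDeploy", "version": "1"},
        "configuration": {"ApplicationName": codedeploy_app, "DeploymentGroupName": deployment_group},
        "inputArtifacts": [{"name": "SourceArtifact"}],  # consumes the source stage output
        "runOrder": 1,
    }
    return {
        "name": name,
        "roleArn": role_arn,
        "artifactStore": {"type": "S3", "location": artifact_bucket},  # where stages exchange artifacts
        "stages": [
            {"name": "Source", "actions": [source_action]},
            {"name": "Deploy", "actions": [deploy_action]},
        ],
    }
```

With boto3, the result would be passed as `boto3.client("codepipeline").create_pipeline(pipeline=definition)`. A build or test stage would slot in between the two stages in the same way.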

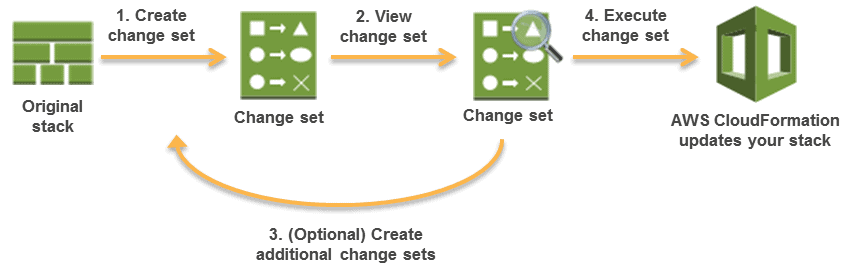

Activity Guide: Create and Update Stacks Using CloudFormation

AWS CloudFormation gives you an easy way to model a collection of related AWS and third-party resources, provision them quickly and consistently, and manage them throughout their lifecycles by treating infrastructure as code.

A CloudFormation template describes your desired resources and their dependencies so you can launch and configure them together as a stack.

Check out our blog: Introduction to AWS CloudFormation.

In this activity guide, you will learn to create, update, and delete an entire stack as a single unit, as often as you need to, instead of managing resources individually. With StackSets, you can also manage and provision stacks across multiple AWS accounts and AWS Regions.
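The create/update cycle can be illustrated with a tiny template: the first version declares a bare S3 bucket, and the second adds versioning, which CloudFormation applies as an in-place update to the same stack. This is a minimal sketch; the logical ID `LabBucket`, the stack name, and the helper names are hypothetical.

```python
"""Minimal sketch: create a stack, then update it by changing one property."""
import json


def bucket_template(versioning: bool = False) -> str:
    """Return a JSON CloudFormation template with one S3 bucket."""
    bucket = {"Type": "AWS::S3::Bucket"}
    if versioning:
        bucket["Properties"] = {"VersioningConfiguration": {"Status": "Enabled"}}
    template = {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Description": "Lab stack with a single S3 bucket",
        "Resources": {"LabBucket": bucket},
        # Ref on an S3 bucket returns the bucket name
        "Outputs": {"BucketName": {"Value": {"Ref": "LabBucket"}}},
    }
    return json.dumps(template, indent=2)


def deploy(stack_name: str, template_body: str, update: bool = False) -> None:
    """Create or update the stack with boto3 (needs credentials)."""
    import boto3
    cfn = boto3.client("cloudformation")
    call = cfn.update_stack if update else cfn.create_stack
    call(StackName=stack_name, TemplateBody=template_body)
```

For example, `deploy("lab-stack", bucket_template())` creates the stack, and `deploy("lab-stack", bucket_template(versioning=True), update=True)` updates it. Deleting the stack with `delete_stack(StackName="lab-stack")` removes the bucket along with it (the bucket must be empty).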

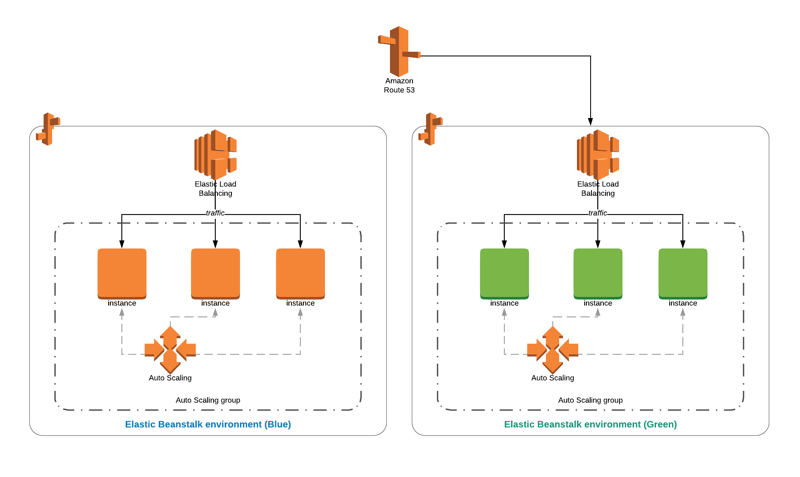

Activity Guide: Blue/Green Deployments using Elastic Beanstalk

AWS Elastic Beanstalk is an easy-to-use service for deploying and scaling web applications and services developed with Java, .NET, PHP, Node.js, Python, Ruby, Go, and Docker on familiar servers such as Apache, Nginx, Passenger, and IIS.

You can simply upload your code, and Elastic Beanstalk automatically handles the deployment, from capacity provisioning, load balancing, and auto-scaling to application health monitoring. At the same time, you retain full control over the AWS resources powering your application and can access the underlying resources at any time.

Check out our blog on Blue-Green Deployment in AWS – The Zero Downtime Deployment.

In this activity guide, you will learn to deploy and scale web applications built with Node.js, PHP, Python, and other platforms, and to release new versions using a blue/green deployment.
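In Elastic Beanstalk, a blue/green release typically runs the new version in a separate environment and then swaps the environment URLs (CNAMEs) so traffic moves to it. The first function below is only a local model of that swap for illustration; the second shows the corresponding `SwapEnvironmentCNAMEs` call. The environment names and CNAMEs are hypothetical.

```python
"""Minimal sketch: model and perform an Elastic Beanstalk CNAME swap (names hypothetical)."""


def swap_cnames(envs: dict, blue: str, green: str) -> dict:
    """Return a new env-name -> CNAME mapping with the two environments' CNAMEs exchanged."""
    if blue not in envs or green not in envs:
        raise KeyError("both environments must exist")
    swapped = dict(envs)  # leave the input mapping untouched
    swapped[blue], swapped[green] = envs[green], envs[blue]
    return swapped


def swap_in_aws(blue: str, green: str) -> None:
    """Ask Elastic Beanstalk to swap the real environment URLs (needs credentials)."""
    import boto3
    boto3.client("elasticbeanstalk").swap_environment_cnames(
        SourceEnvironmentName=blue, DestinationEnvironmentName=green)
```

Because the old (blue) environment keeps running after the swap, rolling back is just a second swap.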

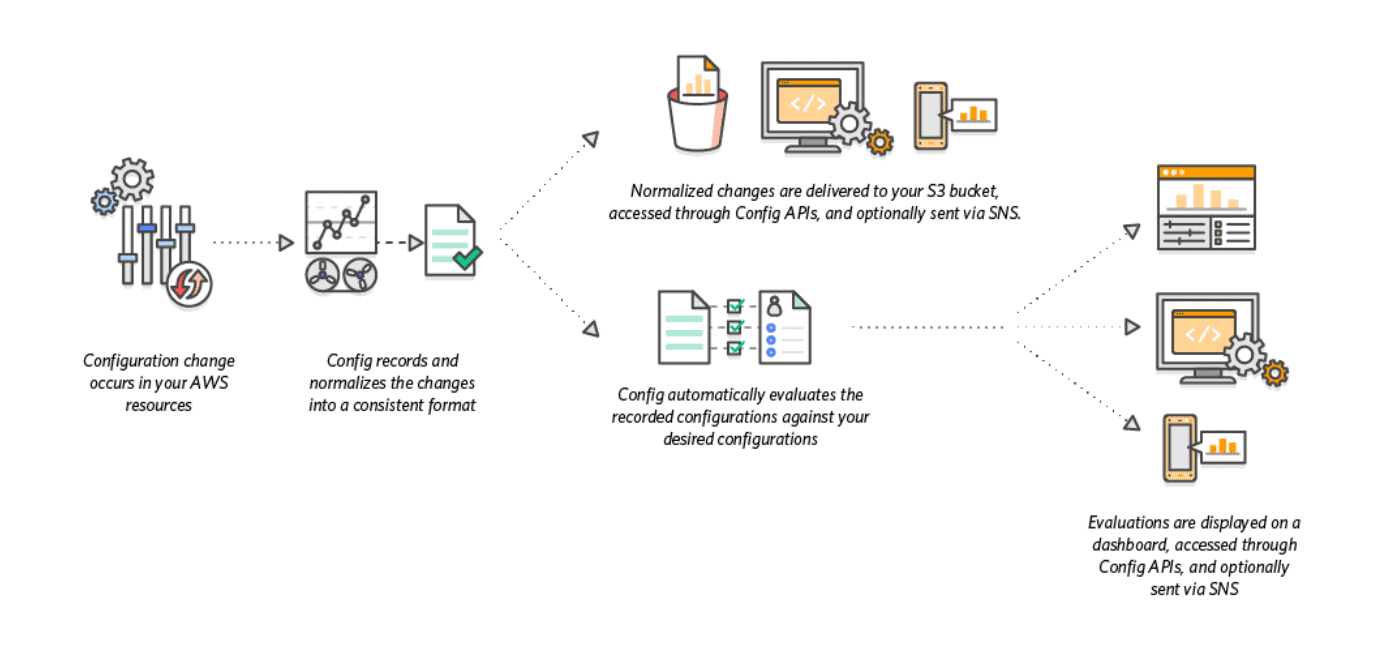

Activity Guide: Setting Up AWS Config to Assess, Audit & Evaluate AWS Resources

AWS Config is a service that enables you to assess, audit, and evaluate the configurations of your AWS resources. Config continuously monitors and records your AWS resource configurations and allows you to automate the evaluation of recorded configurations against desired configurations. With Config, you can review changes in configurations and relationships between AWS resources, dive into detailed resource configuration histories, and determine your overall compliance against the configurations specified in your internal guidelines.

In this activity guide, you will learn how to enable governance using AWS Config.
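Evaluating recorded configurations against a desired state is typically done with Config rules. This minimal sketch builds an AWS managed rule (`S3_BUCKET_PUBLIC_READ_PROHIBITED` is used as an example) for the `PutConfigRule` API and summarizes evaluation results by compliance type. It assumes a configuration recorder is already running; the rule name and helper names are hypothetical, and result pagination is ignored.

```python
"""Minimal sketch: an AWS Config managed rule plus a compliance summary (names hypothetical)."""
from collections import Counter


def managed_rule(rule_name: str, identifier: str, resource_types: list) -> dict:
    """Build the ConfigRule structure for an AWS managed rule."""
    return {
        "ConfigRuleName": rule_name,
        "Source": {"Owner": "AWS", "SourceIdentifier": identifier},
        "Scope": {"ComplianceResourceTypes": list(resource_types)},
    }


def compliance_summary(evaluation_results: list) -> dict:
    """Count evaluation results by ComplianceType (e.g. COMPLIANT, NON_COMPLIANT)."""
    return dict(Counter(r["ComplianceType"] for r in evaluation_results))


def put_rule(rule: dict) -> None:
    import boto3
    boto3.client("config").put_config_rule(ConfigRule=rule)


def summarize_rule(rule_name: str) -> dict:
    """Fetch one page of results for a rule once it has been evaluated."""
    import boto3
    details = boto3.client("config").get_compliance_details_by_config_rule(ConfigRuleName=rule_name)
    return compliance_summary(details["EvaluationResults"])
```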

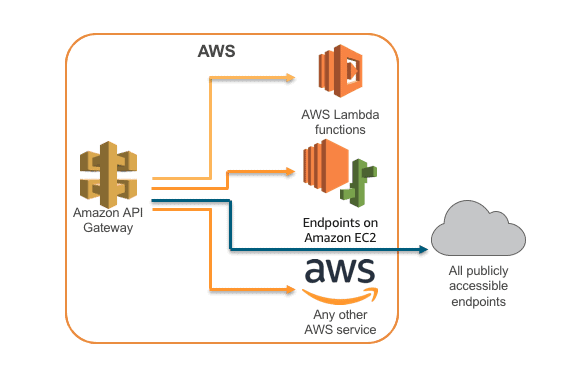

Activity Guide: Build an API Gateway with Lambda Integration

Amazon API Gateway is a fully managed service that makes it easy for developers to create, publish, maintain, monitor, and secure APIs at any scale. APIs act as the “front door” for applications to access data, business logic, or functionality from your backend services.

AWS Lambda lets you run code without provisioning or managing servers. You pay only for the compute time you consume. With Lambda, you can run code for virtually any type of application or backend service – all with zero administration.

In this activity guide, you will learn how to create an API in API Gateway and integrate it with a Lambda function.
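With a Lambda proxy integration, API Gateway passes the HTTP request to the function as an event and expects a response object with `statusCode`, `headers`, and a string `body`. The handler below is a minimal sketch assuming a proxy integration and an optional `name` query-string parameter, which is a hypothetical example rather than the lab's exact function.

```python
"""Minimal sketch: a Lambda handler for an API Gateway proxy integration."""
import json


def lambda_handler(event, context):
    # queryStringParameters is None when the request has no query string
    params = event.get("queryStringParameters") or {}
    name = params.get("name", "world")
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps({"message": f"Hello, {name}!"}),  # body must be a string
    }
```

Calling the deployed API with `?name=DevOps` would return `{"message": "Hello, DevOps!"}`.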

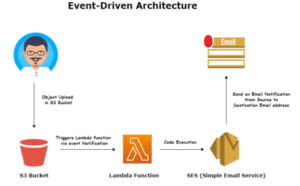

Activity Guide: Event-Driven Architectures Using AWS Lambda, SES, SNS, & SQS

AWS Lambda lets you run code without managing servers, and you pay only for the compute time you consume. You can run code for virtually any type of application or backend service with zero administration: upload your code, and Lambda takes care of everything required to run and scale it with high availability. You can also configure your code to be triggered automatically by other AWS services or call it directly from any web or mobile application.

Amazon SQS is a fully managed message queuing service that enables you to decouple and scale microservices, distributed systems, and serverless applications. With SQS, you can send, store, and receive messages between software components at any volume, without losing messages or requiring other services to be available.

Amazon SNS is a fully managed messaging service for both application-to-application (A2A) and application-to-person (A2P) communication.

An event-driven architecture uses events to trigger and communicate between decoupled services, and it is common in modern applications built with microservices. An event is a change in state, such as an item being placed in a shopping cart on an e-commerce website. Events can either carry state (for example, the item purchased, its price, and a delivery address) or be identifiers (for example, a notification that an order was shipped).

Event-driven architectures have three key components: event producers, event routers, and event consumers. A producer publishes an event to the router, which filters the events and pushes them to consumers. Because producer and consumer services are decoupled, they can be scaled, updated, and deployed independently.

In this activity guide, you will learn to build an event-driven architecture using AWS Lambda, SES, SNS, and SQS.
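On the consumer side, a Lambda function subscribed to an SQS queue receives a batch of `Records`, each with a string `body`. When the queue is subscribed to an SNS topic without raw message delivery, that body is an SNS envelope whose `Message` field holds the original payload. This minimal sketch assumes the published payloads are JSON; the order fields and the SES step are hypothetical.

```python
"""Minimal sketch: a Lambda consumer for SQS messages that may arrive via SNS."""
import json


def extract_messages(event: dict) -> list:
    """Return the decoded payloads from an SQS-triggered Lambda event."""
    messages = []
    for record in event.get("Records", []):
        body = json.loads(record["body"])
        # Without raw message delivery, SNS wraps the payload in a notification envelope
        if isinstance(body, dict) and body.get("Type") == "Notification":
            body = json.loads(body["Message"])
        messages.append(body)
    return messages


def lambda_handler(event, context):
    orders = extract_messages(event)
    # Hypothetical next step: send a confirmation email for each order with Amazon SES
    return {"processed": len(orders)}
```

Here SNS acts as the event router (fan-out), SQS buffers events so the consumer can fall behind without losing them, and Lambda is the consumer.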

Activity Guide: Enable CloudTrail and Store Logs in S3

Amazon S3 is integrated with AWS CloudTrail, a service that provides a record of actions taken by a user, role, or AWS service in Amazon S3. CloudTrail captures a subset of API calls for Amazon S3 as events, including calls from the Amazon S3 console and from code calls to the Amazon S3 APIs.

If you create a trail, you can enable continuous delivery of CloudTrail events to an Amazon S3 bucket, including events for Amazon S3.

In this activity guide, you will follow step-by-step instructions to create a trail and store its logs in an S3 bucket.
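Before CloudTrail can deliver logs, the bucket policy must let the CloudTrail service check the bucket ACL and write objects under `AWSLogs/<account-id>/`. The sketch below generates a simplified version of that policy and then creates and starts a multi-Region trail. The bucket, trail, and account values are hypothetical, and AWS's current documentation also recommends adding an `aws:SourceArn` condition, which this sketch omits.

```python
"""Minimal sketch: CloudTrail bucket policy and trail creation (names hypothetical)."""
import json


def cloudtrail_bucket_policy(bucket: str, account_id: str) -> str:
    """Simplified bucket policy that lets CloudTrail deliver log files."""
    return json.dumps({
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AWSCloudTrailAclCheck",
                "Effect": "Allow",
                "Principal": {"Service": "cloudtrail.amazonaws.com"},
                "Action": "s3:GetBucketAcl",
                "Resource": f"arn:aws:s3:::{bucket}",
            },
            {
                "Sid": "AWSCloudTrailWrite",
                "Effect": "Allow",
                "Principal": {"Service": "cloudtrail.amazonaws.com"},
                "Action": "s3:PutObject",
                "Resource": f"arn:aws:s3:::{bucket}/AWSLogs/{account_id}/*",
                "Condition": {"StringEquals": {"s3:x-amz-acl": "bucket-owner-full-control"}},
            },
        ],
    })


def create_trail(trail: str, bucket: str, account_id: str) -> None:
    import boto3
    boto3.client("s3").put_bucket_policy(Bucket=bucket, Policy=cloudtrail_bucket_policy(bucket, account_id))
    ct = boto3.client("cloudtrail")
    ct.create_trail(Name=trail, S3BucketName=bucket, IsMultiRegionTrail=True)
    ct.start_logging(Name=trail)  # a trail created through the API does not log until started
```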

Activity Guide: Configure Amazon CloudWatch to Notify Change In EC2 CPU Utilization

Amazon CloudWatch is a monitoring and observability service built for DevOps engineers, developers, site reliability engineers (SREs), and IT managers. CloudWatch provides you with data and actionable insights to monitor your applications, respond to system-wide performance changes, optimize resource utilization, and get a unified view of operational health.

A metric alarm watches a single CloudWatch metric or the result of a math expression based on CloudWatch metrics. The alarm performs one or more actions based on the value of the metric or expression relative to a threshold over several time periods. The action can be an Amazon EC2 action, an Auto Scaling action, or a notification sent to an Amazon SNS topic.

In this activity guide, you will follow step-by-step instructions to create a CloudWatch alarm that notifies you when an instance's CPU utilization exceeds a threshold.
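"Relative to a threshold over several time periods" refers to an M-out-of-N rule: the alarm fires when at least `DatapointsToAlarm` of the last `EvaluationPeriods` datapoints breach the threshold. The first function is a simplified local model of that rule (greater-than comparison only, no missing-data handling); the second builds `PutMetricAlarm` arguments for EC2 CPU utilization. The instance ID, topic ARN, and threshold are hypothetical.

```python
"""Minimal sketch: M-out-of-N alarm evaluation and an EC2 CPU alarm (values hypothetical)."""


def alarm_state(datapoints: list, threshold: float,
                evaluation_periods: int, datapoints_to_alarm: int) -> str:
    """Simplified model of a GreaterThanThreshold alarm; ignores missing-data settings."""
    if not 1 <= datapoints_to_alarm <= evaluation_periods:
        raise ValueError("need 1 <= datapoints_to_alarm <= evaluation_periods")
    if len(datapoints) < evaluation_periods:
        return "INSUFFICIENT_DATA"
    window = datapoints[-evaluation_periods:]          # the most recent N periods
    breaching = sum(1 for v in window if v > threshold)
    return "ALARM" if breaching >= datapoints_to_alarm else "OK"


def cpu_alarm_params(instance_id: str, topic_arn: str, threshold: float = 70.0) -> dict:
    """Keyword arguments for PutMetricAlarm: alarm if 2 of the last 3 five-minute averages breach."""
    return {
        "AlarmName": f"high-cpu-{instance_id}",
        "Namespace": "AWS/EC2",
        "MetricName": "CPUUtilization",
        "Dimensions": [{"Name": "InstanceId", "Value": instance_id}],
        "Statistic": "Average",
        "Period": 300,
        "EvaluationPeriods": 3,
        "DatapointsToAlarm": 2,
        "Threshold": threshold,
        "ComparisonOperator": "GreaterThanThreshold",
        "AlarmActions": [topic_arn],
    }
```

For example, `alarm_state([10, 80, 90], 70, 3, 2)` returns `"ALARM"`, while `alarm_state([80, 10, 20], 70, 3, 2)` returns `"OK"` because only one of the three datapoints breaches.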

Activity Guide: Install CloudWatch Agent on EC2 Instance and View CloudWatch Metrics

The CloudWatch agent collects system-level metrics and logs from your EC2 instances and on-premises servers, including metrics such as memory and disk utilization that Amazon EC2 does not send to CloudWatch by default.

In this activity guide, you will follow step-by-step instructions to install the CloudWatch agent on an EC2 instance and visualize the metrics it collects.
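The agent is driven by a JSON configuration file. The sketch below generates a small configuration that collects memory and disk usage every 60 seconds and tags each metric with the instance ID. It is a minimal example under the assumption that the instance role already has the `CloudWatchAgentServerPolicy` managed policy attached; by default these metrics appear under the `CWAgent` namespace.

```python
"""Minimal sketch: generate a CloudWatch agent config for memory and disk metrics."""
import json


def agent_config(interval_seconds: int = 60) -> str:
    config = {
        "agent": {"metrics_collection_interval": interval_seconds},
        "metrics": {
            # ${aws:InstanceId} is substituted by the agent at runtime
            "append_dimensions": {"InstanceId": "${aws:InstanceId}"},
            "metrics_collected": {
                "mem": {"measurement": ["mem_used_percent"]},
                "disk": {"measurement": ["used_percent"], "resources": ["*"]},  # all mounted disks
            },
        },
    }
    return json.dumps(config, indent=2)
```

After saving the output on the instance (for example as `/opt/aws/amazon-cloudwatch-agent/bin/config.json`), the agent can load it with `sudo /opt/aws/amazon-cloudwatch-agent/bin/amazon-cloudwatch-agent-ctl -a fetch-config -m ec2 -s -c file:/opt/aws/amazon-cloudwatch-agent/bin/config.json`.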

Activity Guide: Amazon Athena

Amazon Athena is an interactive query service that makes it easy to analyze data in Amazon S3 using standard SQL. Athena is serverless, so there is no infrastructure to manage, and you pay only for the queries you run.

Athena is easy to use: point to your data in S3, define the schema, and start querying with SQL. Most results are delivered within seconds, so anyone with SQL skills can quickly analyze large-scale datasets.

In this activity guide, you will learn how to analyze data sets through Amazon Athena.
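The "point, define, query" workflow has two SQL steps: a `CREATE EXTERNAL TABLE` statement that lays a schema over files in S3, and ordinary queries against that table, submitted through Athena's `StartQueryExecution` API. This sketch assumes CSV access logs with a hypothetical four-column schema; the table name, S3 paths, and database are placeholders.

```python
"""Minimal sketch: Athena DDL and query for CSV logs in S3 (schema and names hypothetical)."""


def create_table_sql(table: str, s3_location: str) -> str:
    if not (s3_location.startswith("s3://") and s3_location.endswith("/")):
        raise ValueError("LOCATION should be an s3:// folder ending in '/'")
    return (
        f"CREATE EXTERNAL TABLE IF NOT EXISTS {table} (\n"
        "  request_time string,\n"
        "  client_ip string,\n"
        "  status int,\n"
        "  bytes_sent bigint\n"
        ")\n"
        "ROW FORMAT DELIMITED FIELDS TERMINATED BY ','\n"
        f"LOCATION '{s3_location}'"
    )


def error_counts_sql(table: str) -> str:
    return (f"SELECT status, count(*) AS hits FROM {table} "
            "WHERE status >= 400 GROUP BY status ORDER BY hits DESC")


def run_query(sql: str, database: str, output_s3: str) -> str:
    """Submit a query with boto3 and return its ID; poll get_query_execution for completion."""
    import boto3
    resp = boto3.client("athena").start_query_execution(
        QueryString=sql,
        QueryExecutionContext={"Database": database},
        ResultConfiguration={"OutputLocation": output_s3},  # Athena writes results here
    )
    return resp["QueryExecutionId"]
```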

Activity Guide: Using AWS Systems Manager for Patch Management

AWS Systems Manager gives you visibility and control of your infrastructure on AWS. Systems Manager provides a unified user interface so you can view operational data from multiple AWS services, and allows you to automate operational tasks across your AWS resources.

In this activity guide, you will learn to manage your infrastructure with AWS Systems Manager (SSM), including patching your instances.
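Patching with Systems Manager is commonly done by running the `AWS-RunPatchBaseline` document on managed instances, first with `Operation=Scan` to report missing patches and then with `Operation=Install` to apply them. This minimal sketch builds `SendCommand` arguments for that document; the instance IDs and helper names are hypothetical, and it assumes the instances already run the SSM Agent with a suitable instance profile.

```python
"""Minimal sketch: Systems Manager patch scan/install via AWS-RunPatchBaseline."""


def patch_command_params(instance_ids: list, operation: str = "Scan") -> dict:
    """Keyword arguments for SSM SendCommand; Scan reports, Install applies patches."""
    if operation not in ("Scan", "Install"):
        raise ValueError("operation must be 'Scan' or 'Install'")
    return {
        "InstanceIds": list(instance_ids),
        "DocumentName": "AWS-RunPatchBaseline",
        "Parameters": {"Operation": [operation]},  # SSM document parameters are lists of strings
        "Comment": f"Patch {operation.lower()} from lab",
    }


def run_patch(params: dict) -> str:
    import boto3
    resp = boto3.client("ssm").send_command(**params)
    return resp["Command"]["CommandId"]
```

Running a scan first lets you review compliance in Patch Manager before an install, which may reboot instances.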

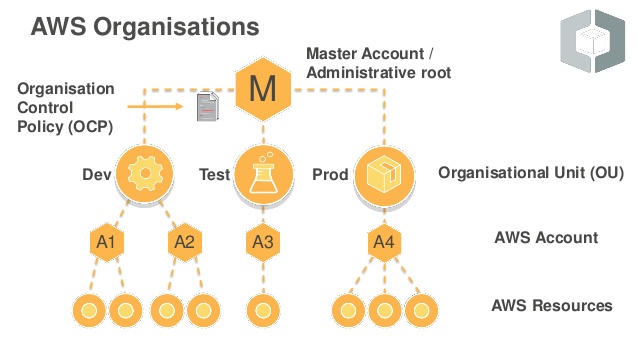

Activity Guide: AWS Organizations & Service Control Policies

AWS Organizations helps you centrally govern your environment as you grow and scale your workloads on AWS. Whether you are a growing startup or a large enterprise, Organizations helps you centrally manage billing, control access, compliance, and security, and share resources across your AWS accounts.

Using AWS Organizations, you can automate account creation, create groups of accounts to reflect your business needs, and apply policies for these groups for governance. You can also simplify billing by setting up a single payment method for all of your AWS accounts.

In this activity guide, you will learn to create and manage an organization in AWS Organizations and apply Service Control Policies (SCPs).
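An SCP is an IAM-style JSON policy that sets the maximum permissions for accounts in an organizational unit; it never grants permissions by itself. The sketch below builds a guardrail SCP that prevents member accounts from leaving the organization or disabling CloudTrail, then creates and attaches it. The policy name, description, and target ID are hypothetical choices for illustration.

```python
"""Minimal sketch: a guardrail SCP created and attached with boto3 (names hypothetical)."""
import json


def guardrail_scp() -> str:
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {"Sid": "DenyLeavingOrganization", "Effect": "Deny",
             "Action": "organizations:LeaveOrganization", "Resource": "*"},
            {"Sid": "ProtectCloudTrail", "Effect": "Deny",
             "Action": ["cloudtrail:StopLogging", "cloudtrail:DeleteTrail"], "Resource": "*"},
        ],
    }
    return json.dumps(policy)


def attach_scp(policy_json: str, target_id: str) -> None:
    """Create the SCP and attach it to an OU or account (run from the management account)."""
    import boto3
    org = boto3.client("organizations")
    created = org.create_policy(Content=policy_json, Description="Lab guardrails",
                                Name="lab-guardrails", Type="SERVICE_CONTROL_POLICY")
    org.attach_policy(PolicyId=created["Policy"]["PolicySummary"]["Id"], TargetId=target_id)
```

Because SCPs do not apply to the management account, test the policy by signing in to a member account in the targeted OU.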

Activity Guide: Classify Sensitive Data In Your Environment Using Amazon Macie

Amazon Macie uses machine learning and pattern matching to cost-efficiently discover sensitive data at scale. Macie automatically detects a large and growing list of sensitive data types, including personally identifiable information (PII) such as names, addresses, and credit card numbers.

In this activity guide, you will learn how to enable Amazon Macie, create your Amazon-Macie-activity-generator CloudFormation stack, and add the new sample data to Macie.

Activity Guide: Amazon Inspector

Amazon Inspector tests the network accessibility of your Amazon EC2 instances and the security state of your applications that run on those instances. Amazon Inspector assesses applications for exposure, vulnerabilities, and deviations from best practices. After performing an assessment, Amazon Inspector produces a detailed list of security findings that is organized by level of severity.

In this activity guide, you will learn how to set up and configure an Amazon EC2 instance for assessment with Amazon Inspector.

Activity Guide: Visualize Web Traffic Using Kinesis Data Streams

Amazon Kinesis makes it easy to collect, process, and analyze real-time, streaming data so you can get timely insights and react quickly to new information. Amazon Kinesis offers key capabilities to cost-effectively process streaming data at any scale, along with the flexibility to choose the tools that best suit the requirements of your application.

In this activity guide, you will learn how to analyze real-time streaming data using Amazon Kinesis and Firehose, with step-by-step setup instructions.

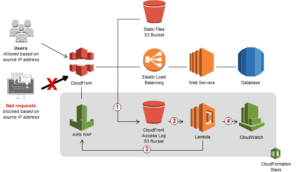

Activity Guide: Block Web Traffic With WAF in AWS

AWS WAF (Web Application Firewall) helps protect your web applications against common web exploits that can affect availability or compromise security. AWS WAF also gives you control over the traffic that reaches your applications by letting you create security rules that block common attack patterns such as SQL injection and cross-site scripting.

You can create your own rules and specify the conditions that AWS WAF looks for in incoming web requests, and you pay only for what you use.

In this activity guide, you will learn how to create an IP set and test the working of WAF.
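An IP set is a list of CIDR ranges, and a web ACL rule references it to block matching requests. This minimal sketch builds arguments for the WAFV2 `CreateIPSet` API and the rule structure used in a web ACL's `Rules` list. It handles IPv4 only; the names, priority, and addresses are hypothetical.

```python
"""Minimal sketch: a WAFV2 IP set and a blocking rule that references it (names hypothetical)."""
import ipaddress


def ip_set_params(name: str, cidrs: list, scope: str = "REGIONAL") -> dict:
    """Keyword arguments for wafv2 CreateIPSet; single IPs become /32 CIDRs."""
    networks = [ipaddress.ip_network(c, strict=True) for c in cidrs]
    if any(n.version != 4 for n in networks):
        raise ValueError("this sketch only handles IPv4")
    return {"Name": name, "Scope": scope, "IPAddressVersion": "IPV4",
            "Addresses": [str(n) for n in networks]}


def block_rule(ip_set_arn: str, priority: int = 0, name: str = "block-lab-ips") -> dict:
    """A web ACL rule that blocks requests whose source IP is in the IP set."""
    return {
        "Name": name,
        "Priority": priority,
        "Statement": {"IPSetReferenceStatement": {"ARN": ip_set_arn}},
        "Action": {"Block": {}},
        "VisibilityConfig": {"SampledRequestsEnabled": True,
                             "CloudWatchMetricsEnabled": True, "MetricName": name},
    }
```

To test the rule, add your own public IP to the set: requests from your browser should then receive a 403 response. Use `Scope="CLOUDFRONT"` (in `us-east-1`) instead of `REGIONAL` when protecting a CloudFront distribution.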

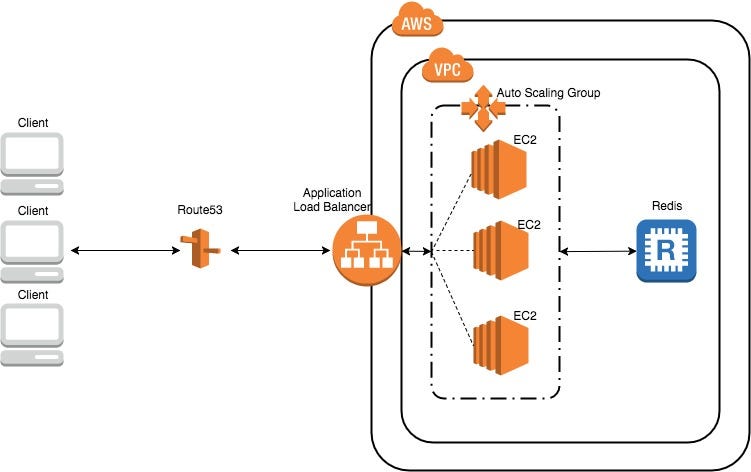

Activity Guide: Configuring Load Balancer And Autoscaling on EC2 Instances

AWS Auto Scaling monitors your applications and automatically adjusts capacity to maintain steady, predictable performance at the lowest possible cost. Using AWS Auto Scaling, it’s easy to set up application scaling for multiple resources across multiple services in minutes.

Elastic Load Balancing automatically distributes incoming application traffic across multiple targets, such as Amazon EC2 instances, containers, IP addresses, and Lambda functions. It can handle the varying load of your application traffic in a single Availability Zone or across multiple Availability Zones.

In this activity guide, you will learn how to build highly available architectures using Auto Scaling and load balancers.
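High availability here comes from two settings: an Auto Scaling group spread across subnets in different Availability Zones and registered with a load balancer target group, and a scaling policy that adjusts capacity. This minimal sketch builds arguments for `CreateAutoScalingGroup` and a target-tracking `PutScalingPolicy` that aims for 50% average CPU. The launch template, target group ARN, subnet IDs, and sizes are hypothetical.

```python
"""Minimal sketch: an ELB-backed Auto Scaling group with target tracking (names hypothetical)."""


def asg_params(asg_name: str, launch_template: str, target_group_arn: str,
               subnet_ids: list, min_size: int = 2, max_size: int = 4) -> dict:
    return {
        "AutoScalingGroupName": asg_name,
        "LaunchTemplate": {"LaunchTemplateName": launch_template, "Version": "$Latest"},
        "MinSize": min_size,
        "MaxSize": max_size,
        "DesiredCapacity": min_size,
        "TargetGroupARNs": [target_group_arn],        # register instances with the load balancer
        "VPCZoneIdentifier": ",".join(subnet_ids),     # subnets in different AZs
        "HealthCheckType": "ELB",                      # replace instances failing LB health checks
        "HealthCheckGracePeriod": 300,
    }


def target_tracking_policy(asg_name: str, target_cpu: float = 50.0) -> dict:
    if not 0 < target_cpu < 100:
        raise ValueError("target CPU must be between 0 and 100")
    return {
        "AutoScalingGroupName": asg_name,
        "PolicyName": f"{asg_name}-cpu-{int(target_cpu)}",
        "PolicyType": "TargetTrackingScaling",
        "TargetTrackingConfiguration": {
            "PredefinedMetricSpecification": {"PredefinedMetricType": "ASGAverageCPUUtilization"},
            "TargetValue": float(target_cpu),
        },
    }
```

With boto3, these would be passed to `create_auto_scaling_group(**...)` and `put_scaling_policy(**...)` on the `autoscaling` client.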

Activity Guide: Registering a Domain Name For Free

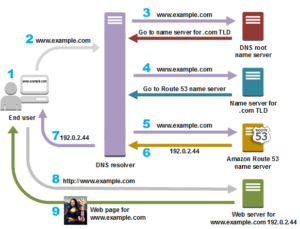

Every device on the Internet, from your smartphone or laptop to the servers that deliver content for massive retail websites, finds and communicates with other devices by using numbers called IP addresses. When you visit a website in your browser, you don’t have to remember and enter a long number. Instead, you enter a domain name such as example.com, and the Domain Name System (DNS) translates it into the matching IP address.

In this activity guide, you will learn how to get a domain name for free.

Activity Guide: Working with Route53 for Disaster Recovery

Amazon Route 53 is a highly available and scalable cloud Domain Name System (DNS) web service. It is designed to give developers and businesses an extremely reliable and cost-effective way to route end users to Internet applications by translating names like www.example.com into the numeric IP addresses like 192.0.2.1 that computers use to connect. Amazon Route 53 is fully compliant with IPv6 as well.

In this activity guide, you will learn how to plan disaster recovery with Route 53 using active-active and active-passive failover.
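Active-passive failover in Route 53 uses two records with the same name: a `PRIMARY` record tied to a health check and a `SECONDARY` record that Route 53 answers with only when the primary is unhealthy. This minimal sketch builds the `ChangeBatch` for `ChangeResourceRecordSets`; the domain, IP addresses, health check ID, and set identifiers are hypothetical.

```python
"""Minimal sketch: Route 53 active-passive failover records (values hypothetical)."""


def failover_change_batch(record_name: str, primary_ip: str, secondary_ip: str,
                          health_check_id: str, ttl: int = 60) -> dict:
    def record(role: str, ip: str, health_check: str = None) -> dict:
        rrset = {
            "Name": record_name,
            "Type": "A",
            "SetIdentifier": f"{role.lower()}-site",  # distinguishes records with the same name
            "Failover": role,
            "TTL": ttl,                              # short TTL so clients pick up failover quickly
            "ResourceRecords": [{"Value": ip}],
        }
        if health_check:
            rrset["HealthCheckId"] = health_check
        return {"Action": "UPSERT", "ResourceRecordSet": rrset}

    return {
        "Comment": "Active-passive failover",
        "Changes": [record("PRIMARY", primary_ip, health_check_id),
                    record("SECONDARY", secondary_ip)],
    }
```

With boto3, the batch would be applied with `boto3.client("route53").change_resource_record_sets(HostedZoneId=..., ChangeBatch=batch)`. For active-active, you would instead use records of equal weight or latency-based routing, each with its own health check.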

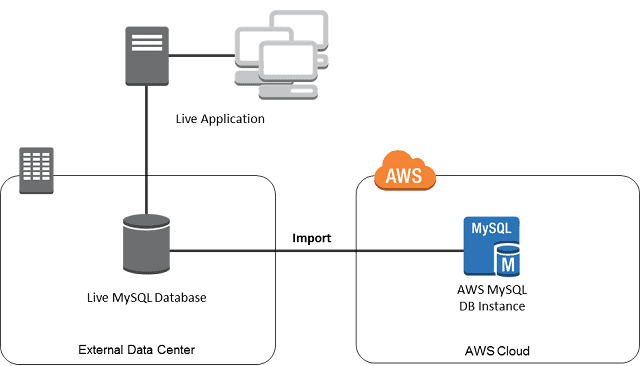

Activity Guide: Configure a MySQL DB Instance via RDS

Amazon Relational Database Service (Amazon RDS) makes it easy to set up, operate, and scale a relational database in the cloud. It provides cost-efficient and resizable capacity while automating time-consuming administration tasks such as hardware provisioning, database setup, patching, and backups. It frees you to focus on your applications so you can give them the fast performance, high availability, security, and compatibility they need.

In this activity guide, you will learn how to download and install MySQL Workbench, configure a MySQL DB instance in Amazon RDS, connect to the database from MySQL Workbench, and run some MySQL queries.

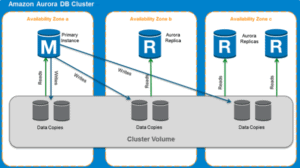

Activity Guide: Create & Manage Amazon Aurora Global Database

Amazon Aurora is a fully managed relational database engine that is compatible with both MySQL and PostgreSQL. An Aurora global database is a single Aurora database that spans multiple AWS Regions to support your globally distributed applications.

In this activity guide, you will learn how to create and configure an Amazon Aurora database and add a secondary Region to manage it as a global database.

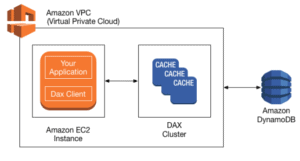

Activity Guide: Create & Query with Amazon DynamoDB

Amazon DynamoDB is a fully managed key-value database service that provides fast and predictable performance with seamless scalability. DynamoDB offloads the administrative burdens of operating and scaling a distributed database, so you don’t have to worry about hardware provisioning, setup and configuration, replication, software patching, or cluster scaling.

With DynamoDB, you can create database tables that store and retrieve any amount of data and serve any level of request traffic. You can also create full backups of your tables for long-term retention and archiving to meet regulatory compliance needs.

In this activity guide, you will learn how to create and manage DynamoDB tables.
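Creating and querying a table comes down to a key schema and a key condition. This minimal sketch uses a hypothetical music table with `Artist` as the partition key and `SongTitle` as the sort key, on-demand billing, and a query for all songs by one artist. The parameters follow the low-level DynamoDB client, where values carry type tags such as `{"S": ...}`.

```python
"""Minimal sketch: DynamoDB table definition and key-condition query (table hypothetical)."""


def table_params(table_name: str) -> dict:
    """Keyword arguments for CreateTable with a composite primary key."""
    return {
        "TableName": table_name,
        "KeySchema": [
            {"AttributeName": "Artist", "KeyType": "HASH"},      # partition key
            {"AttributeName": "SongTitle", "KeyType": "RANGE"},  # sort key
        ],
        # Only key attributes are declared; other attributes are schemaless
        "AttributeDefinitions": [
            {"AttributeName": "Artist", "AttributeType": "S"},
            {"AttributeName": "SongTitle", "AttributeType": "S"},
        ],
        "BillingMode": "PAY_PER_REQUEST",  # on-demand capacity, no throughput to provision
    }


def query_params(table_name: str, artist: str) -> dict:
    """Keyword arguments for Query: every item in one partition."""
    return {
        "TableName": table_name,
        "KeyConditionExpression": "Artist = :artist",
        "ExpressionAttributeValues": {":artist": {"S": artist}},
    }
```

With boto3, these would be passed as `client.create_table(**table_params("Music"))` and `client.query(**query_params("Music", "Some Artist"))` on a `dynamodb` client.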

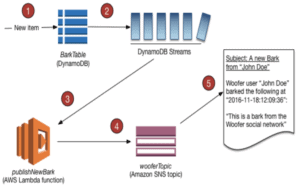

Activity Guide: Process New Items with DynamoDB Streams & Lambda

Amazon DynamoDB is a fully managed key-value database service that provides fast and predictable performance with seamless scalability.

AWS Lambda lets you run code without managing servers, and you pay only for the compute time you consume. Lambda functions can be triggered automatically by other AWS services, including DynamoDB Streams, or called directly from any web or mobile application.

In this activity guide, you will learn how to create an AWS Lambda trigger that processes a stream from a DynamoDB table.
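A Lambda function attached to a DynamoDB stream receives `Records` with an `eventName` of `INSERT`, `MODIFY`, or `REMOVE`, and, when the stream view type includes new images, the item's new attribute values under `dynamodb.NewImage` in DynamoDB's typed format. This minimal sketch handles only new items and converts a small subset of types (`S`, `N`, `BOOL`) by hand; in practice, `boto3.dynamodb.types.TypeDeserializer` covers all types. The item attributes in the usage example are hypothetical.

```python
"""Minimal sketch: a Lambda handler that processes newly inserted DynamoDB items."""


def from_attribute(attr: dict):
    """Convert a typed attribute such as {"S": "x"} or {"N": "3"} (subset of types only)."""
    type_code, value = next(iter(attr.items()))
    if type_code in ("S", "BOOL"):
        return value
    if type_code == "N":
        try:
            return int(value)       # numbers arrive as strings
        except ValueError:
            return float(value)
    raise ValueError(f"unsupported DynamoDB type: {type_code}")


def lambda_handler(event, context):
    new_items = []
    for record in event.get("Records", []):
        if record.get("eventName") != "INSERT":
            continue  # this sketch ignores MODIFY and REMOVE events
        image = record["dynamodb"].get("NewImage", {})
        new_items.append({k: from_attribute(v) for k, v in image.items()})
    for item in new_items:
        print(f"New item: {item}")  # appears in the function's CloudWatch Logs
    return {"inserted": len(new_items)}
```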

Real-Time Projects: The course also includes the following projects.

1. Create a Continuous Delivery Pipeline: This project involves creating a continuous delivery pipeline for an application that automates the entire software delivery process, from code changes to production deployment. It involves setting up a version control system, continuous integration tools, and a deployment pipeline that automates the process of deploying new versions of the application. This project is useful for software development teams that want to streamline their delivery process and increase the speed and reliability of their software releases.

2. Deploy a Web Application and Add Interactivity With an API and a Database: This project involves deploying a web application on a cloud platform like AWS. The web application is built using a programming language like Python, PHP, or Node.js and interacts with a backend API and a database to provide dynamic content and functionality. This project is useful for developers who want to learn how to build and deploy scalable web applications on the cloud.

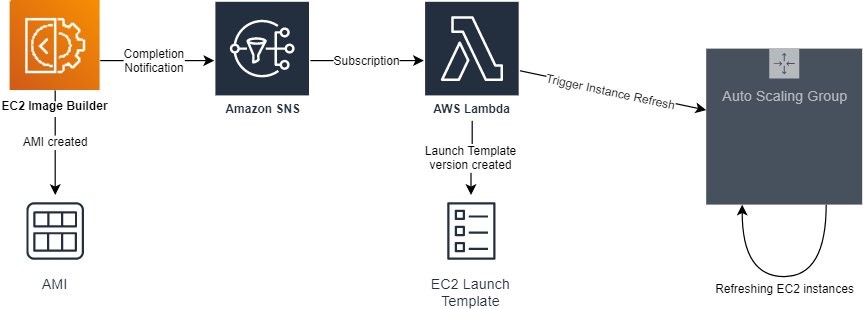

3. Provisioning SSL/TLS Certificates using AWS Certificate Manager: This project involves setting up and configuring SSL/TLS certificates using AWS Certificate Manager. SSL/TLS certificates are used to secure websites and ensure that all data transmitted between the website and its users is encrypted and secure. This project is useful for website owners who want to ensure that their website is secure and protects the privacy of their users.

4. Working With AWS AppConfig: AWS AppConfig is a service that enables the deployment of application configurations to applications hosted on AWS. This project involves learning how to use AWS AppConfig to manage and deploy application configurations, including feature flags, environment variables, and other settings. This project is useful for developers who want to learn how to manage and deploy application configurations at scale.

5. Build WordPress Website Using AWS Console: This project involves building a WordPress website using the AWS Console. WordPress is a popular content management system that powers millions of websites, and AWS provides a scalable and reliable platform for hosting WordPress sites. This project is useful for bloggers, small businesses, and anyone who wants to learn how to build and host a WordPress site on AWS.

6. Amazon EC2 Auto Scaling with EC2 Spot Instances: Amazon EC2 Auto Scaling is a service that automatically scales EC2 instances based on demand. EC2 Spot Instances use spare EC2 capacity at a significantly lower cost than On-Demand Instances, but AWS can reclaim them with a two-minute warning. This project involves learning how to use Amazon EC2 Auto Scaling with EC2 Spot Instances to optimize costs while keeping applications available, for example by combining On-Demand and Spot capacity across several instance types. This project is useful for developers who want to learn how to optimize costs and improve the availability of their applications on AWS.

7. Blue Green Deployment using ECS Fargate: Blue/green deployment is a technique for deploying application updates with zero downtime. Amazon ECS on AWS Fargate lets you run containers on AWS without managing servers. This project involves learning how to use ECS on Fargate to deploy applications using a blue/green deployment strategy. This project is useful for developers who want to learn how to deploy updates to applications with minimal downtime and without impacting users.

8. Host your Portfolio via S3 Bucket: In this project, the goal is to host your portfolio website using an Amazon S3 bucket. Amazon S3 (Simple Storage Service) is a scalable and reliable cloud storage service offered by Amazon Web Services (AWS). By utilizing S3 to host your portfolio, you can ensure high availability, durability, and cost-effectiveness.
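For the portfolio project, static website hosting needs two S3 settings: a website configuration naming the index and error documents, and a bucket policy that allows public reads of the objects. This minimal sketch builds both for `PutBucketWebsite` and `PutBucketPolicy`; the bucket name and document names are hypothetical, and it assumes you have turned off S3 Block Public Access for this bucket, which otherwise rejects a public policy.

```python
"""Minimal sketch: S3 static website configuration and public-read policy (names hypothetical)."""
import json


def website_config(index: str = "index.html", error: str = "error.html") -> dict:
    return {"IndexDocument": {"Suffix": index}, "ErrorDocument": {"Key": error}}


def public_read_policy(bucket: str) -> str:
    return json.dumps({
        "Version": "2012-10-17",
        "Statement": [{
            "Sid": "PublicReadGetObject",
            "Effect": "Allow",
            "Principal": "*",
            "Action": "s3:GetObject",             # read-only access to objects
            "Resource": f"arn:aws:s3:::{bucket}/*",
        }],
    })


def publish(bucket: str) -> None:
    import boto3
    s3 = boto3.client("s3")
    s3.put_bucket_website(Bucket=bucket, WebsiteConfiguration=website_config())
    s3.put_bucket_policy(Bucket=bucket, Policy=public_read_policy(bucket))
```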


Related Links/References:

- Overview of Amazon Web Services & Concepts

- How to create a free tier account in AWS

- Amazon Elastic File System User Guide

- AWS CloudFormation

- AWS Elastic Beanstalk

- AWS Management Console Walkthrough

- AWS CodeCommit Overview & Its Benefits

- Deploy Web App On AWS Using CodePipeline

Next Task For You

Begin your journey toward becoming an AWS Certified DevOps Engineer Professional by registering for our FREE CLASS.