Microservices architecture has become a common approach for designing and deploying applications. By splitting an application into small, independently deployable services, it can improve scalability, flexibility, and maintainability in complex software systems. However, running many services is a daunting task without the right tools. Kubernetes addresses this by automating the deployment and management of a microservices architecture.

In this blog, we will discuss Kubernetes for microservices and how it works.

We will learn:

- Understanding Microservices Architecture

- Challenges in Microservices Deployment and Management

- Managing Microservices with Kubernetes

- Best Practices for Microservices on Kubernetes

- Implementing CD with Kubernetes

Understanding Microservices Architecture

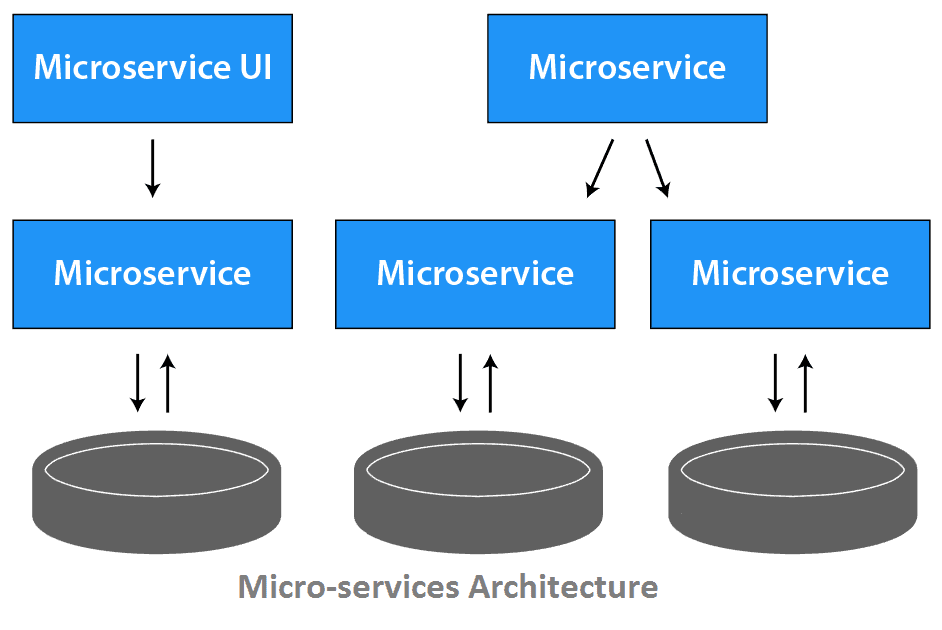

Before delving into the role of Kubernetes in microservices, it’s crucial to grasp the essence of microservices architecture. Unlike monolithic applications, where all components are tightly integrated, microservices decompose an application into smaller, independent services. Each service operates autonomously and communicates with others through APIs, allowing developers to work on individual components separately. This modularity enhances agility, facilitates easier updates, and accelerates innovation.

Challenges in Microservices Deployment and Management

While the advantages of microservices are clear, managing a multitude of independent services presents its own set of challenges. Deploying and coordinating these services efficiently, ensuring their availability, scaling as per demand, and managing updates without disrupting the entire system can be complex and resource-intensive. Enter Kubernetes.

Managing Microservices with Kubernetes

Kubernetes, often abbreviated as K8s, is an open-source container orchestration platform that automates the deployment, scaling, and management of containerized applications. Originally developed by Google, Kubernetes has emerged as the de facto standard for deploying and managing containerized applications at scale.

Simplified Deployment

Kubernetes simplifies the deployment of microservices by abstracting away the underlying infrastructure complexities. It operates on a cluster of nodes where each node can host multiple containers. Kubernetes uses declarative configuration to define how applications should run and maintain their desired state. With the help of YAML or JSON files, developers can specify the deployment, networking, and scaling requirements, allowing Kubernetes to handle the execution and management.
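
As a rough illustration of declarative configuration, the sketch below builds a Deployment manifest as a Python dictionary and prints it as JSON. The service name `orders`, the image `example.com/orders:1.0.0`, and port 8080 are placeholders chosen for this example, not values from a real system.

```python
import json


def deployment_manifest(name: str, image: str, replicas: int, port: int) -> dict:
    """Build an apps/v1 Deployment manifest as a plain dict."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            # Desired state: how many identical pods should be running.
            "replicas": replicas,
            # The selector must match the labels on the pod template.
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {
                            "name": name,
                            "image": image,
                            "ports": [{"containerPort": port}],
                        }
                    ]
                },
            },
        },
    }


# Hypothetical service name, image, and port.
manifest = deployment_manifest("orders", "example.com/orders:1.0.0", replicas=3, port=8080)
print(json.dumps(manifest, indent=2))
```

Because Kubernetes accepts JSON as well as YAML, output like this can be saved to a file and applied with `kubectl apply -f`. Kubernetes then works to keep three replicas of the pod running.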

Scalability and Load Balancing

Scaling microservices manually can be cumbersome. Kubernetes provides automated scaling by allowing users to define scaling policies based on CPU or memory usage. It dynamically adjusts the number of instances to match the workload demands, ensuring optimal performance. Additionally, Kubernetes integrates load balancing to distribute incoming traffic among service instances, enhancing reliability and performance.
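
To make the scaling decision concrete, here is a simplified sketch of the rule the Kubernetes documentation describes for the Horizontal Pod Autoscaler: the desired replica count is the current count multiplied by the ratio of the observed metric to the target metric, rounded up. The replica limits and the 10% tolerance are illustrative defaults, and the real controller handles extra cases (unready pods, missing metrics, stabilization windows) that are left out here.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 10,
                     tolerance: float = 0.1) -> int:
    """Approximate the autoscaling rule: ceil(current_replicas * current / target)."""
    ratio = current_metric / target_metric
    # Skip scaling when usage is already close to the target.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    # Stay within the floor and ceiling set by the scaling policy.
    return max(min_replicas, min(max_replicas, desired))


# 4 pods averaging 90% CPU against a 60% target: scale out.
print(desired_replicas(4, current_metric=90, target_metric=60))
# 4 pods averaging 30% CPU against a 60% target: scale in.
print(desired_replicas(4, current_metric=30, target_metric=60))
```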

Self-healing Capabilities

One of Kubernetes’ robust features is its self-healing capabilities. In case of failures or instances not meeting defined health checks, Kubernetes automatically restarts or replaces containers, maintaining the desired state and ensuring high availability without manual intervention.
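
The idea behind self-healing is a control loop that compares the observed state with the desired state and corrects any difference. The toy function below illustrates one pass of such a loop. It is a conceptual sketch only, not how Kubernetes controllers are implemented, and the pod names are made up.

```python
from itertools import count


def reconcile(desired: int, pods: dict, new_names) -> dict:
    """Return the pod set after one reconciliation pass.

    `pods` maps a pod name to True if its health check passes.
    `new_names` is an iterator that yields names for replacement pods.
    """
    # Drop pods that failed their health check.
    running = {name: True for name, healthy in pods.items() if healthy}
    # Start replacements until the desired replica count is met again.
    while len(running) < desired:
        running[next(new_names)] = True
    return running


# Hypothetical pod names; "orders-1" has failed its health check.
names = (f"orders-{i}" for i in count(3))
observed = {"orders-0": True, "orders-1": False, "orders-2": True}
print(reconcile(3, observed, names))
```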

Continuous Deployment and Updates

Kubernetes facilitates continuous deployment through rolling updates, which are built into the Deployment object, and it can support canary deployments when combined with additional configuration or tooling. A rolling update gradually replaces old pods with new ones, so traffic shifts to the new version without downtime, and the Deployment's revision history allows an easy rollback if issues arise.

Best Practices for Microservices on Kubernetes

1. Efficient Traffic Management with Ingress

Managing traffic within a microservices setup can be intricate. Kubernetes Ingress serves as an API object enabling HTTP and HTTPS routing to services within a cluster based on host and path. Acting as a reverse proxy, it efficiently routes incoming requests to the relevant service. This simplifies architecture by exposing multiple services under one IP address, enhancing manageability and facilitating additional features like SSL/TLS termination, load balancing, and virtual hosting.
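
The sketch below shows what host- and path-based routing might look like as an Ingress manifest, again built in Python and printed as JSON. The host `shop.example.com`, the services `orders` and `payments`, and the Secret `shop-tls` are all hypothetical. An Ingress only takes effect when an ingress controller is running in the cluster.

```python
import json


def ingress_manifest(name: str, host: str, routes: dict, tls_secret: str) -> dict:
    """Build a networking.k8s.io/v1 Ingress that maps URL paths to Services."""
    paths = [
        {
            "path": path,
            "pathType": "Prefix",
            "backend": {"service": {"name": service, "port": {"number": port}}},
        }
        for path, (service, port) in routes.items()
    ]
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "Ingress",
        "metadata": {"name": name},
        "spec": {
            # TLS is terminated at the Ingress using a certificate stored in a Secret.
            "tls": [{"hosts": [host], "secretName": tls_secret}],
            "rules": [{"host": host, "http": {"paths": paths}}],
        },
    }


# Hypothetical host, services, and Secret name.
ingress = ingress_manifest(
    "shop",
    "shop.example.com",
    {"/orders": ("orders", 80), "/payments": ("payments", 80)},
    tls_secret="shop-tls",
)
print(json.dumps(ingress, indent=2))
```

With this configuration, requests to `shop.example.com/orders` go to the `orders` service and requests to `shop.example.com/payments` go to `payments`, all through a single entry point.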

2. Utilize Kubernetes for Scalability

Microservices offer scalability benefits, and Kubernetes supports this through tools like Horizontal Pod Autoscaler (HPA), automatically adjusting pod numbers based on observed CPU utilization or custom metrics. Additionally, manual scaling within Kubernetes allows for on-demand pod adjustments, useful for anticipated load fluctuations.
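
A minimal sketch of an HPA definition, assuming a Deployment named `orders` (a placeholder) and a 60% CPU utilization target chosen only for illustration:

```python
import json


def hpa_manifest(deployment: str, min_replicas: int, max_replicas: int, cpu_percent: int) -> dict:
    """Build an autoscaling/v2 HorizontalPodAutoscaler targeting a Deployment."""
    return {
        "apiVersion": "autoscaling/v2",
        "kind": "HorizontalPodAutoscaler",
        "metadata": {"name": f"{deployment}-hpa"},
        "spec": {
            # The workload whose replica count the autoscaler adjusts.
            "scaleTargetRef": {"apiVersion": "apps/v1", "kind": "Deployment", "name": deployment},
            "minReplicas": min_replicas,
            "maxReplicas": max_replicas,
            "metrics": [
                {
                    "type": "Resource",
                    "resource": {
                        "name": "cpu",
                        "target": {"type": "Utilization", "averageUtilization": cpu_percent},
                    },
                }
            ],
        },
    }


# Hypothetical Deployment name and thresholds.
hpa = hpa_manifest("orders", min_replicas=2, max_replicas=10, cpu_percent=60)
print(json.dumps(hpa, indent=2))
```

CPU utilization targets are measured against the CPU requests declared on the containers, so those requests need to be set and a metrics source must be available in the cluster. For manual scaling, a command such as `kubectl scale deployment/orders --replicas=5` changes the pod count on demand.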

3. Organize with Namespaces

Effective organization is crucial within complex applications. Kubernetes namespaces partition cluster resources among multiple users or teams, providing distinct name scopes. Utilizing namespaces helps manage related services as a unit, applying policies and access controls at the namespace level.
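
As one example of a namespace-level policy, the sketch below pairs a Namespace with a ResourceQuota that caps what the workloads in it may request. The team name `payments` and the limits are invented for the example.

```python
import json


def namespace_with_quota(team: str, cpu: str, memory: str, max_pods: int) -> dict:
    """Build a Namespace plus a ResourceQuota scoped to it, wrapped in a v1 List."""
    namespace = {
        "apiVersion": "v1",
        "kind": "Namespace",
        "metadata": {"name": team, "labels": {"team": team}},
    }
    quota = {
        "apiVersion": "v1",
        "kind": "ResourceQuota",
        "metadata": {"name": f"{team}-quota", "namespace": team},
        # Upper bounds on the total resources requested inside the namespace.
        "spec": {"hard": {"requests.cpu": cpu, "requests.memory": memory, "pods": str(max_pods)}},
    }
    return {"apiVersion": "v1", "kind": "List", "items": [namespace, quota]}


# Hypothetical team name and limits.
resources = namespace_with_quota("payments", cpu="4", memory="8Gi", max_pods=20)
print(json.dumps(resources, indent=2))
```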

4. Implement Health Checks

Implementing health checks is essential for monitoring service status. Kubernetes offers readiness probes (to check whether a container is ready to accept requests) and liveness probes (to check whether a running container is still healthy). When a readiness probe fails, Kubernetes stops routing Service traffic to that pod; when a liveness probe fails, it restarts the container. Together, these checks keep the application available and responsive.
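
Probes are declared on each container in the pod template. The sketch below shows one possible configuration. The endpoints `/ready` and `/healthz`, the port, and the timing values are assumptions for illustration, and the service itself would need to implement those endpoints.

```python
import json


def http_probe(path: str, port: int, initial_delay: int, period: int) -> dict:
    """Build an HTTP GET probe definition."""
    return {
        "httpGet": {"path": path, "port": port},
        # Wait this long after the container starts before the first check.
        "initialDelaySeconds": initial_delay,
        # Time between checks.
        "periodSeconds": period,
        # Consecutive failures before the probe is considered failed.
        "failureThreshold": 3,
    }


# Hypothetical container with both probe types configured.
container = {
    "name": "orders",
    "image": "example.com/orders:1.0.0",
    "ports": [{"containerPort": 8080}],
    "readinessProbe": http_probe("/ready", 8080, initial_delay=5, period=10),
    "livenessProbe": http_probe("/healthz", 8080, initial_delay=15, period=20),
}
print(json.dumps(container, indent=2))
```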

5. Employ Service Mesh

A service mesh serves as a dedicated layer managing service-to-service communication within a microservices architecture. It enhances reliability in delivering requests through the complex service topology. Service meshes offer advantages like traffic management, service discovery, load balancing, and failure recovery, essential for maintaining stability and performance.
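
Service meshes differ in how they are configured. As one possible illustration, the sketch below assumes Istio (which this article does not otherwise cover) and builds a VirtualService that splits traffic between two versions of a service and retries failed requests. The service name `orders` and the subsets `v1` and `v2` are hypothetical, and in Istio the subsets would also need to be defined in a matching DestinationRule.

```python
import json


def weighted_route(service: str, weights: dict, retry_attempts: int = 3) -> dict:
    """Build an Istio VirtualService that splits traffic across subsets by weight."""
    if sum(weights.values()) != 100:
        raise ValueError("route weights must add up to 100")
    return {
        "apiVersion": "networking.istio.io/v1beta1",
        "kind": "VirtualService",
        "metadata": {"name": service},
        "spec": {
            "hosts": [service],
            "http": [
                {
                    # Traffic management: share of requests sent to each version.
                    "route": [
                        {"destination": {"host": service, "subset": subset}, "weight": weight}
                        for subset, weight in weights.items()
                    ],
                    # Failure recovery: retry a failed request a few times.
                    "retries": {"attempts": retry_attempts, "perTryTimeout": "2s"},
                }
            ],
        },
    }


# Hypothetical service with 90% of traffic on v1 and 10% on v2.
virtual_service = weighted_route("orders", {"v1": 90, "v2": 10})
print(json.dumps(virtual_service, indent=2))
```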

6. Design for Single Responsibility

Each microservice should adhere to the single responsibility principle, promoting cohesion and a clean separation of concerns. This principle aids in easier scaling, monitoring, and management within Kubernetes, leveraging different scaling policies, resource quotas, and security configurations tailored to each service’s specific needs. Designing for single responsibility simplifies debugging and maintenance, making issue diagnosis and resolution more manageable in a distributed Kubernetes environment.

These best practices facilitate efficient deployment and management of microservices on Kubernetes, enhancing scalability, reliability, and maintainability within complex architectures.

Implementing CD with Kubernetes

Kubernetes forms a robust foundation for implementing continuous delivery or continuous deployment (CD) strategies tailored to microservices. The Kubernetes Deployment object offers a declarative approach for managing the intended state of microservices. This simplifies the automation of deploying, updating, and scaling microservices seamlessly.

Moreover, Kubernetes inherently supports rolling updates, enabling gradual implementation of changes across microservices. This method mitigates the potential risks associated with introducing critical alterations by ensuring a phased transition between the existing and updated versions.
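
The pace of a rolling update is controlled by two Deployment settings, `maxSurge` and `maxUnavailable`. The sketch below builds that part of the spec and computes the pod counts it implies during a rollout. The values are illustrative, and only absolute numbers are handled here, although Kubernetes also accepts percentages.

```python
import json


def rolling_update_strategy(max_surge: int = 1, max_unavailable: int = 0) -> dict:
    """Build the `strategy` section of a Deployment spec."""
    if max_surge == 0 and max_unavailable == 0:
        # With both at zero the rollout could never make progress.
        raise ValueError("maxSurge and maxUnavailable cannot both be 0")
    return {
        "type": "RollingUpdate",
        "rollingUpdate": {"maxSurge": max_surge, "maxUnavailable": max_unavailable},
    }


def rollout_bounds(replicas: int, strategy: dict) -> tuple:
    """Return (minimum available pods, maximum total pods) during the rollout."""
    limits = strategy["rollingUpdate"]
    return replicas - limits["maxUnavailable"], replicas + limits["maxSurge"]


strategy = rolling_update_strategy(max_surge=1, max_unavailable=0)
print(json.dumps({"spec": {"strategy": strategy}}, indent=2))
# For a 3-replica Deployment: never fewer than 3 available, never more than 4 in total.
print(rollout_bounds(3, strategy))
```

Progress can be followed with `kubectl rollout status deployment/<name>`, and `kubectl rollout undo deployment/<name>` returns to the previous revision.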

Supplementary to Kubernetes, open-source tools such as Argo Rollouts enhance the rollback capabilities and introduce support for advanced deployment strategies like blue/green deployments and canary releases.
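
For a sense of what a canary release looks like in Argo Rollouts, the sketch below builds a Rollout resource whose canary steps raise the traffic weight in stages with a pause after each one. The service name, image, weights, and pause duration are placeholders, and the Argo Rollouts controller must be installed in the cluster for this resource type to exist.

```python
import json


def canary_rollout(name: str, image: str, replicas: int, weights: list, pause: str = "5m") -> dict:
    """Build an Argo Rollouts manifest that shifts traffic to the new version in stages."""
    labels = {"app": name}
    steps = []
    for weight in weights:
        # Send this percentage of traffic to the new version, then wait.
        steps.append({"setWeight": weight})
        steps.append({"pause": {"duration": pause}})
    return {
        "apiVersion": "argoproj.io/v1alpha1",
        "kind": "Rollout",
        "metadata": {"name": name},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {"containers": [{"name": name, "image": image}]},
            },
            "strategy": {"canary": {"steps": steps}},
        },
    }


# Hypothetical service and image; the canary receives 20% and then 50% of traffic.
rollout = canary_rollout("orders", "example.com/orders:1.1.0", replicas=5, weights=[20, 50])
print(json.dumps(rollout, indent=2))
```

Once the last step completes, the new version is promoted to receive all traffic.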

These combined functionalities facilitate a streamlined CI/CD pipeline for microservices, ensuring controlled deployment processes while minimizing potential risks and fostering a more manageable release lifecycle.

Conclusion

In the realm of microservices architecture, Kubernetes has emerged as an indispensable tool, empowering organizations to effectively deploy, manage, and scale complex applications. Its capabilities in automating tasks, ensuring high availability, enabling seamless updates, and simplifying the orchestration of containerized microservices make it a cornerstone in modern software development.

As businesses continue to embrace microservices for their agility and scalability benefits, the role of Kubernetes as the orchestrator of microservices will undoubtedly remain pivotal, enabling efficient, resilient, and scalable application architectures.

Kubernetes stands as a testament to the evolution of technology, offering a powerful solution that aligns perfectly with the demands of the dynamic and distributed nature of modern software development.

With Kubernetes, the path to leveraging the potential of microservices becomes not just accessible but also significantly more manageable and efficient.

What is Kubernetes, and how does it relate to microservices?

Kubernetes is an open-source container orchestration platform used to automate the deployment, scaling, and management of containerized applications. It provides the infrastructure needed to deploy and manage microservices efficiently by orchestrating containers across a cluster of machines.

How does Kubernetes benefit microservices architecture?

- Orchestration: Kubernetes simplifies the deployment and scaling of microservices, ensuring they run reliably and efficiently.

- Fault Tolerance: It offers automated health checks and self-healing capabilities, ensuring high availability.

- Scaling: Kubernetes enables easy horizontal scaling of microservices based on resource demands.

- Isolation: Containers in Kubernetes provide isolated environments for microservices, enhancing security and encapsulation.

What is the significance of Kubernetes’ rolling updates in microservices?

Rolling updates in Kubernetes enable seamless updates by gradually replacing old instances of microservices with new ones. This process minimizes downtime and reduces the risk of service disruptions caused by introducing changes.

How can Kubernetes facilitate multi-environment deployments for microservices?

Kubernetes provides the flexibility to create multiple namespaces or use tools like Helm to manage different environments (dev, staging, production). This allows deploying and managing microservices across various environments consistently.
