Your generative AI app is delivering results, users are happy, adoption is rising, and then the bill shows up!

In 2026, enterprises scaling on Amazon Bedrock are realizing that costs escalate quickly due to overused premium models, bloated prompts, oversized context windows, excessive real-time inference, and limited monitoring. The difference between a scalable AI platform and an expensive experiment comes down to smart cost architecture.

In this guide, we’ll explore five practical strategies for AWS Generative AI Cost Optimization, from model tiering and prompt optimization to batching and governance frameworks, to help you reduce Bedrock expenses sustainably in 2026.

Why AWS Generative AI Cost Optimization Matters in 2026

Inference workloads increase dramatically as companies implement RAG assistants, LLM-based copilots, analytics engines, and automation tools. In contrast to conventional compute services, the following factors influence generative AI costs:

- Input tokens

- Output tokens

- Model choice

- Invocation frequency

- Context size

Without a well-organized AWS Generative AI Cost Optimization plan, monthly Bedrock bills can become unpredictable. Cost optimization is now an architectural requirement, not an optional extra.
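
To make the interaction of these factors concrete, here is a minimal sketch of a monthly cost estimate. The `ModelPrice` class, the function name, and every price and volume below are illustrative assumptions, not actual Bedrock rates; context size shows up here as part of the input token count.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPrice:
    """Hypothetical per-1,000-token prices in USD (not real Bedrock rates)."""
    input_per_1k: float
    output_per_1k: float


def estimate_monthly_cost(price: ModelPrice, input_tokens: int,
                          output_tokens: int, invocations_per_month: int) -> float:
    """Estimate monthly spend for one request shape under per-token pricing."""
    per_request = ((input_tokens / 1000) * price.input_per_1k
                   + (output_tokens / 1000) * price.output_per_1k)
    return per_request * invocations_per_month


# Illustrative price points only: a premium-style and a lightweight-style model.
premium = ModelPrice(input_per_1k=0.003, output_per_1k=0.015)
light = ModelPrice(input_per_1k=0.0003, output_per_1k=0.0015)

# Same request shape (2,000 input tokens, 500 output tokens, 1M calls per month).
premium_cost = estimate_monthly_cost(premium, 2000, 500, 1_000_000)
light_cost = estimate_monthly_cost(light, 2000, 500, 1_000_000)
print(f"premium: ${premium_cost:,.2f}  lightweight: ${light_cost:,.2f}")
```

Under these assumed numbers, the same traffic costs ten times more on the premium price point, which is why model choice and token counts are worth modeling before a workload scales.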

Strategy 1: Model Tiering & Intelligent Routing

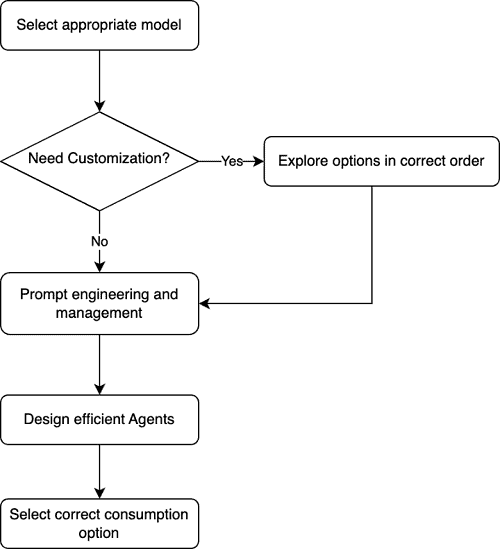

One of the biggest levers in AWS Generative AI Cost Optimization is selecting the right model for the right task. Amazon Bedrock offers models from providers such as Anthropic and Meta, as well as Amazon's own Titan family. However, many teams default to premium models for every request, which raises costs considerably.

Implement Model Tiering

Create three model categories:

- Lightweight models: Classification, tagging, summaries

- Mid-tier models: Contextual Q&A, business reasoning

- Premium models: Complex reasoning, deep analysis

By routing each prompt to the cheapest tier that can handle it, you can reduce inference costs by an estimated 25–40%, depending on your workload mix. Model tiering is one of the most impactful AWS Generative AI Cost Optimization techniques available in 2026.
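
A simple way to picture tiered routing is a lookup from task type to model tier. The sketch below is one possible shape, not a prescribed implementation: the model IDs are hypothetical placeholders, the task labels are assumptions derived from the three categories above, and falling back to the mid tier for unknown tasks is a design choice you may want to change.

```python
from enum import Enum


class Tier(Enum):
    LIGHTWEIGHT = "lightweight"
    MID = "mid"
    PREMIUM = "premium"


# Hypothetical placeholders: substitute the Bedrock model IDs enabled in your account.
MODEL_FOR_TIER = {
    Tier.LIGHTWEIGHT: "example.lightweight-model",
    Tier.MID: "example.mid-tier-model",
    Tier.PREMIUM: "example.premium-model",
}

# Assumed task labels, based on the three model categories listed above.
TIER_FOR_TASK = {
    "classification": Tier.LIGHTWEIGHT,
    "tagging": Tier.LIGHTWEIGHT,
    "summary": Tier.LIGHTWEIGHT,
    "contextual_qa": Tier.MID,
    "business_reasoning": Tier.MID,
    "complex_reasoning": Tier.PREMIUM,
    "deep_analysis": Tier.PREMIUM,
}


def route(task_type: str) -> str:
    """Return the model ID for a task; unknown tasks fall back to the mid tier."""
    tier = TIER_FOR_TASK.get(task_type, Tier.MID)
    return MODEL_FOR_TIER[tier]


print(route("tagging"))        # lightweight placeholder ID
print(route("deep_analysis"))  # premium placeholder ID
```

In practice the task type might come from the calling feature or from a cheap classifier; the important part is that the premium model is an explicit choice rather than the default.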

Strategy 2: Token Discipline & Prompt Optimization

With on-demand pricing in Bedrock, you pay per input and output token, so poor prompt design directly increases cost.

Common Cost Leaks

- Repeating long system prompts

- Sending entire documents instead of chunks

- Unlimited output tokens

- Replaying the full conversation history on every turn

Best Practices for AWS Generative AI Cost Optimization

- Set max output token limits

- Trim conversation history (sliding window memory)

- Use structured JSON outputs instead of verbose responses

- Separate static and dynamic prompt sections so the static part can be reused
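
Two of these practices, an output token cap and sliding window memory, can be sketched in a few lines. The helper names are hypothetical, and the request dictionary follows the general shape of the Bedrock Converse API (`system`, `messages`, `inferenceConfig.maxTokens`) as I understand it, so check the field names against the current API reference. The sketch also assumes the history alternates user and assistant messages and ends with an assistant reply.

```python
def trim_history(messages: list[dict], max_turns: int) -> list[dict]:
    """Keep only the most recent `max_turns` user/assistant exchanges."""
    if max_turns <= 0:
        return []
    trimmed = messages[-2 * max_turns:]
    # Drop a leading assistant message so the window starts on a user turn.
    if trimmed and trimmed[0]["role"] != "user":
        trimmed = trimmed[1:]
    return trimmed


def build_request(system_prompt: str, history: list[dict], user_input: str,
                  max_turns: int = 3, max_output_tokens: int = 300) -> dict:
    """Build a Converse-style request with trimmed history and an output cap."""
    new_message = {"role": "user", "content": [{"text": user_input}]}
    return {
        "system": [{"text": system_prompt}],
        "messages": trim_history(history, max_turns) + [new_message],
        "inferenceConfig": {"maxTokens": max_output_tokens},
    }


# Build a ten-message history, then keep only the last two exchanges.
history = []
for i in range(5):
    history.append({"role": "user", "content": [{"text": f"q{i}"}]})
    history.append({"role": "assistant", "content": [{"text": f"a{i}"}]})

request = build_request("You are a concise assistant.", history,
                        "new question", max_turns=2, max_output_tokens=200)
print(len(request["messages"]))  # two kept exchanges plus the new question
```

The resulting dictionary would be passed, together with a model ID, to the runtime client's `converse` call. Counting turns is the simplest window; a token-based window is more precise but needs a tokenizer for the chosen model.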

Token discipline is foundational to effective AWS Generative AI Cost Optimization, especially at scale. Even trimming 500–1,000 tokens per request can save thousands of dollars per month in production systems.
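
As a rough worked example of that claim, the arithmetic looks like this. The request volume and the per-token price are assumptions chosen purely for illustration; plug in your own traffic and the current rate for your model.

```python
def monthly_savings(tokens_saved_per_request: int, requests_per_month: int,
                    price_per_1k_tokens: float) -> float:
    """Dollars saved per month by sending fewer tokens on every request."""
    return tokens_saved_per_request / 1000 * price_per_1k_tokens * requests_per_month


# Assumed workload: 3 million requests per month at a hypothetical $0.003 per 1,000 tokens.
low = monthly_savings(500, 3_000_000, 0.003)
high = monthly_savings(1000, 3_000_000, 0.003)
print(f"${low:,.0f} to ${high:,.0f} saved per month")
```

At lower volumes the savings shrink proportionally, so the payoff from prompt trimming depends heavily on how many requests a system actually serves.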

Strategy 3: Prompt Caching & Context Reuse

Prompt caching is one of the most underused techniques for AWS Generative AI Cost Optimization. When identical prompt prefixes are reused across requests, Bedrock can cache the processed prefix instead of recomputing it, which lowers the cost of each subsequent call.

Common Use Cases:

- Customer support bots

- Policy Q&A systems

- Document review assistants

- RAG knowledge bases

To maximize caching:

- Keep prompt prefixes consistent

- Use templates

- Separate dynamic variables cleanly

Organizations with strong caching practices frequently see 50–80% cost savings on repetitive tasks, which makes prompt caching a high-ROI AWS Generative AI Cost Optimization tactic for 2026.
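
The three tips above come down to keeping the static prefix byte-for-byte identical and putting everything variable after it. Here is a minimal sketch under some assumptions: the prefix text and function name are hypothetical, and the `cachePoint` block reflects my understanding of how the Converse API marks a cacheable prefix. Caching support, minimum prefix lengths, and pricing vary by model, so verify against the Bedrock documentation before relying on it.

```python
# Hypothetical static prefix: instructions and policy text that never change.
STATIC_PREFIX = (
    "You are a customer support assistant.\n"
    "Answer only from the policy text below.\n"
    "POLICY: Refunds are available within 30 days of purchase."
)


def build_cached_request(user_question: str) -> dict:
    """Put the stable prefix first, mark a cache point, then add dynamic input."""
    return {
        "system": [
            {"text": STATIC_PREFIX},
            # Assumed Converse-style marker: content before it is eligible for caching.
            {"cachePoint": {"type": "default"}},
        ],
        # Dynamic content goes after the cache point so it never alters the prefix.
        "messages": [{"role": "user", "content": [{"text": user_question}]}],
    }


first = build_cached_request("Can I return an item after two weeks?")
second = build_cached_request("How long do refunds take?")
print(first["system"] == second["system"])  # the prefix is identical across requests
```

Interpolating a timestamp, user name, or session ID into the prefix would change it on every call and defeat the cache, which is why the dynamic parts are kept in the user message.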

Strategy 4: Moving Non-Critical Workloads to Batch Processing

Real-time inference is expensive. An effective AWS Generative AI Cost Optimization approach distinguishes between two kinds of workload:

Real-Time Workloads

- Interactive chatbots

- Copilots

- Live assistants

Batch & Asynchronous Workloads

- Bulk content generation

- Sentiment analysis

- Report automation

- Legal document processing

Compared to on-demand real-time inference, batch processing on Amazon Bedrock is substantially less expensive. Organizations can save an estimated 20–35% on AI infrastructure costs by moving suitable workloads to batch. Although frequently overlooked, batching remains a powerful AWS Generative AI Cost Optimization strategy.
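
Preparing a batch job mostly means turning individual requests into one input file. The sketch below assumes the JSON Lines layout used by Bedrock batch inference, one object per line with a `recordId` and a `modelInput`, as I understand it. The function names and the body built by `example_model_input` are hypothetical; the real `modelInput` shape depends on the model you choose.

```python
import json
from typing import Callable


def example_model_input(text: str) -> dict:
    """Hypothetical request body; the real shape depends on the chosen model."""
    return {"prompt": f"Classify the sentiment of: {text}", "max_tokens": 100}


def to_batch_jsonl(documents: dict[str, str],
                   build_input: Callable[[str], dict]) -> str:
    """Serialize documents as one JSON object per line for a batch input file."""
    lines = []
    for record_id, text in documents.items():
        record = {"recordId": record_id, "modelInput": build_input(text)}
        lines.append(json.dumps(record))
    return "\n".join(lines)


reviews = {"doc-1": "Great service", "doc-2": "Slow response"}
jsonl = to_batch_jsonl(reviews, example_model_input)
print(jsonl)
```

The resulting file would typically be uploaded to S3 and referenced when creating a batch inference job. Results arrive asynchronously, so this pattern only fits workloads that can tolerate a delay.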

Strategy 5: Cost Monitoring, Tagging & Governance

What you cannot measure, you cannot optimize.

A mature AWS Generative AI Cost Optimization program includes:

- Monitoring with AWS Cost Explorer

- CloudWatch metrics

- Budget alerts

- Cost allocation tags

Tag resources by:

- Project

- Department

- Environment (Dev/Test/Prod)

- Feature

Track the following:

- Cost per feature

- Cost per user

- Cost per API call

- Average tokens per request
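
If your application logs one usage record per model call, the four metrics above can be derived with a small aggregation. This is a sketch under assumptions: the `UsageRecord` fields and the sample values are hypothetical, and in a real system the records would come from your own request logs or metrics pipeline.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class UsageRecord:
    """One logged model call (hypothetical schema)."""
    feature: str
    user_id: str
    input_tokens: int
    output_tokens: int
    cost_usd: float


def summarize(records: list[UsageRecord]) -> dict:
    """Aggregate cost by feature and user, plus per-call averages."""
    by_feature: dict[str, float] = defaultdict(float)
    by_user: dict[str, float] = defaultdict(float)
    total_cost = 0.0
    total_tokens = 0
    for r in records:
        by_feature[r.feature] += r.cost_usd
        by_user[r.user_id] += r.cost_usd
        total_cost += r.cost_usd
        total_tokens += r.input_tokens + r.output_tokens
    calls = len(records)
    return {
        "cost_by_feature": dict(by_feature),
        "cost_by_user": dict(by_user),
        "cost_per_call": total_cost / calls if calls else 0.0,
        "avg_tokens_per_request": total_tokens / calls if calls else 0.0,
    }


# Illustrative sample data only.
sample = [
    UsageRecord("search", "u1", 100, 50, 0.5),
    UsageRecord("search", "u2", 200, 50, 0.25),
    UsageRecord("chat", "u1", 300, 100, 0.25),
]
summary = summarize(sample)
print(summary["cost_by_feature"])
```

Tagging each record with the same project, department, environment, and feature labels used for cost allocation makes it easier to line these numbers up with the bill.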

With these in place, cost tracking becomes strategic governance for AWS Generative AI Cost Optimization.

Real-World Example of AWS Generative AI Cost Optimization

Here is an example of how cost optimization can affect a workload.

Before optimization:

- Single premium model

- No caching

- Unlimited output tokens

- Real-time for all use cases

After implementing structured AWS Generative AI Cost Optimization:

- Model routing introduced

- Prompt caching enabled

- Sliding window memory applied

- Batch processing for analytics

- Strict token limits enforced

Result:

- 45–65% reduction in Bedrock expenses

- Improved latency

- Better cost predictability

Conclusion

In 2026, AWS Generative AI Cost Optimization is not just about reducing spend; it's about building scalable, sustainable AI systems.

Businesses that integrate AWS Generative AI Cost Optimization into their architecture, DevOps practices, and governance frameworks will scale more quickly and efficiently. By implementing these five strategies, you can significantly cut Amazon Bedrock costs while preserving strong generative AI performance.
