OpenShift is a cloud development Platform as a Service (PaaS) developed by Red Hat. It is an open-source technology that helps organizations move their traditional application infrastructure and platforms from physical and virtual environments to the cloud.

OpenShift is widely used by large enterprises, mainly in the telecom and finance industries. It works with CI/CD and automation tools such as Jenkins and Ansible, and it builds on container technologies such as Docker (container runtime) and Kubernetes (container orchestration).

In this blog, we cover the OpenShift architecture, its layers, and its components. We also discuss master nodes, worker nodes, infrastructure nodes, and their components.

What is OpenShift Architecture?

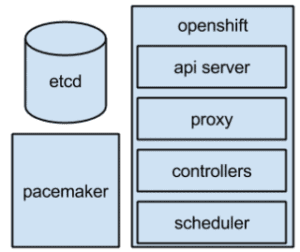

OpenShift Container Platform (OCP) has a microservices-based architecture of smaller, decoupled units that work together and run on top of a Kubernetes cluster. Data about the objects is stored in etcd, a clustered key-value store. The services are broken down by function:

- REST APIs, which expose each of the core objects.

- Controllers, which read those APIs, apply changes to other objects, and report status or write back to the object.
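The controller pattern described above can be sketched as a single reconcile function. This is a minimal illustration rather than OpenShift code: the `AppObject` class, its fields, and the pod naming scheme are hypothetical stand-ins for an API object and for the changes a controller applies.

```python
from dataclasses import dataclass, field


@dataclass
class AppObject:
    """Hypothetical stand-in for an object exposed by the REST API."""
    name: str
    desired_replicas: int
    running_pods: list = field(default_factory=list)
    status: str = "Unknown"


def reconcile(obj: AppObject) -> AppObject:
    """One controller pass: read the object, adjust pods, write status back."""
    missing = obj.desired_replicas - len(obj.running_pods)
    if missing > 0:
        # Too few pods: create the missing ones.
        start = len(obj.running_pods)
        obj.running_pods.extend(
            f"{obj.name}-pod-{i}" for i in range(start, start + missing)
        )
    elif missing < 0:
        # Too many pods: remove the surplus.
        del obj.running_pods[obj.desired_replicas:]
    # Report the observed state on the object itself.
    obj.status = f"{len(obj.running_pods)}/{obj.desired_replicas} running"
    return obj


frontend = reconcile(AppObject(name="frontend", desired_replicas=3))
print(frontend.running_pods, frontend.status)
```

A real controller runs this kind of pass repeatedly, so the cluster keeps converging on the desired state even after failures.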

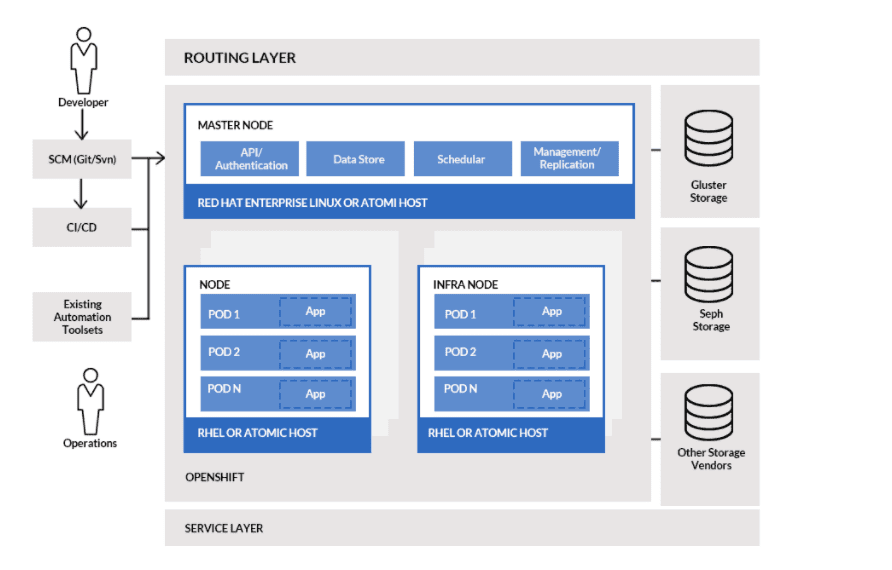

OpenShift Architecture Diagram

The master runs the following components:

- API Server: Provides the entry point through which tools and libraries communicate with the cluster.

- Controller Manager: Regulates and manages the cluster state, and gathers information and sends it to the API server.

- etcd: Stores configuration information as key-value pairs that can be distributed among nodes so that each node can access it.

- Scheduler: Distributes workloads across nodes.

The Kubernetes master communicates with the node servers, which run the following key components:

- Docker: A service that runs the application's containers.

- Kubelet Service: Relays information to and from the control plane. It receives commands and work from the master components, and it helps manage network rules and port forwarding.

- Proxy Service: Runs on each node and makes services available to external hosts. It forwards requests to the correct containers and performs basic load balancing.

- Integrated OpenShift Container Registry: Built-in storage for Docker images.
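To make the scheduler's role in the list above more concrete, here is a toy placement function. It is only a sketch under a strong assumption: it spreads pods by pod count alone, whereas the real scheduler also weighs resources and placement constraints. The function name and the pod and node names are hypothetical.

```python
def schedule(pods, nodes):
    """Place each pod on the node that currently holds the fewest pods."""
    placement = {node: [] for node in nodes}
    for pod in pods:
        # min() returns the first node on ties, so placement is deterministic.
        target = min(nodes, key=lambda node: len(placement[node]))
        placement[target].append(pod)
    return placement


placement = schedule(["web-1", "web-2", "db-1"], ["node-a", "node-b"])
print(placement)
```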

OpenShift Architecture Layers

OpenShift consists of the following layers, and each layer has its own responsibilities.

- Infrastructure layer

- Service layer

- Routing layer

Infrastructure layer: In the infrastructure layer, you can host your applications on physical servers, virtual servers, or in a private or public cloud.

Service layer: The service layer is responsible for defining pods and their access policies. It gives a group of pods a permanent IP address and hostname, connects applications to each other, and provides simple internal load balancing by distributing work across application components.
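The idea of one permanent address in front of changing pods can be sketched as follows. This is a simplified model, not how OpenShift implements services: the `Service` class, its methods, and all IP addresses are hypothetical, and round robin is assumed as the balancing strategy.

```python
class Service:
    """Hypothetical model of a service: one stable address in front of many pods."""

    def __init__(self, name, cluster_ip, pod_ips):
        self.name = name
        self.cluster_ip = cluster_ip  # does not change when pods are replaced
        self.pod_ips = list(pod_ips)
        self._next = 0

    def update_endpoints(self, pod_ips):
        """Swap in a new set of pods behind the same service address."""
        self.pod_ips = list(pod_ips)
        self._next = 0

    def route(self):
        """Return the pod that should receive the next request (round robin)."""
        if not self.pod_ips:
            raise RuntimeError(f"service {self.name} has no pods")
        pod_ip = self.pod_ips[self._next % len(self.pod_ips)]
        self._next += 1
        return pod_ip


web = Service("web", "172.30.0.10", ["10.1.0.4", "10.1.0.5"])
first_three = [web.route() for _ in range(3)]
web.update_endpoints(["10.1.0.9"])  # pods were recreated with new addresses
print(first_three, web.cluster_ip, web.route())
```

Clients only ever need to know `cluster_ip`; the pods behind it can be replaced without the clients noticing.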

An OpenShift cluster has two kinds of nodes: master nodes and worker nodes. Applications run on the worker nodes. A cluster can have several worker nodes, and each one can be a virtual or a physical machine.

Routing layer: The routing layer is the final layer. It gives external clients on any device access to the applications running in the cluster. It also provides load balancing and automatically routes traffic around unhealthy pods.
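Routing around unhealthy pods can be pictured with a small lookup function. This is a sketch under assumptions: the route table, the set of healthy pods, and the hostname are hypothetical, and a real router would also balance load across the healthy pods instead of always picking the first one.

```python
def pick_backend(routes, healthy, hostname):
    """Return the first healthy pod behind the route for `hostname`, or None."""
    for pod in routes.get(hostname, []):
        if pod in healthy:
            return pod
    return None  # unknown hostname, or no healthy pod is left


routes = {"shop.example.com": ["shop-1", "shop-2"]}
print(pick_backend(routes, {"shop-2"}, "shop.example.com"))
```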

OpenShift Architecture Components

Master Node & its components

The master is the host or hosts that contain the master components, which include the API server, controller manager server, and etcd. The master manages the Kubernetes cluster’s nodes and schedules pods to run on them.

Source: Openshift Docs

- Pacemaker:

Optional, used when configuring highly-available masters.

Pacemaker is the core technology of the High Availability Add-On for Red Hat Enterprise Linux, providing consensus, fencing, and service management. It can be run on all master hosts to ensure that all active-passive components have one instance running.

- Virtual IP:

Optional, used when configuring highly-available masters.

The virtual IP (VIP) is the single point of contact, but not a single point of failure, for all OpenShift clients that:

- cannot be configured with all master service endpoints, or

- do not know how to load balance across multiple masters nor retry failed master service connections.

There is one VIP and it is managed by Pacemaker.
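The reason the VIP is not a single point of failure is that it can move between masters. The sketch below shows that idea only. It is not Pacemaker's actual algorithm: the `assign_vip` function, the "first healthy master" rule, and the master names are assumptions made for illustration.

```python
def assign_vip(masters, healthy, current_holder=None):
    """Decide which master should hold the single virtual IP."""
    if current_holder in healthy:
        return current_holder  # avoid moving the VIP unnecessarily
    for master in masters:
        if master in healthy:
            return master  # fail over to the first healthy master
    return None  # no master can serve the VIP


masters = ["master-1", "master-2", "master-3"]
holder = assign_vip(masters, healthy={"master-1", "master-2", "master-3"})
holder_after_failure = assign_vip(masters, {"master-2", "master-3"}, holder)
print(holder, holder_after_failure)
```

Clients keep talking to the same address throughout; only the host answering behind it changes.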

Worker Nodes & their components

A node provides the runtime environments for containers. Each node in a Kubernetes cluster runs the services required for it to be managed by the master. Nodes also run Docker, a kubelet, and a service proxy, which are required to run pods.

OpenShift creates nodes from a cloud provider, physical systems, or virtual systems. Kubernetes interacts with node objects, which are representations of those nodes. The master uses the information from node objects to validate nodes with health checks. A node is ignored until it passes the health checks, and the master keeps checking nodes until they are valid. More information on node management can be found in the Kubernetes documentation.
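As a rough picture of this validation step, the sketch below filters node objects by their `Ready` condition, which is how Kubernetes node objects report health in `status.conditions`. The helper names and the sample nodes are hypothetical, and the real master logic involves more than this single check.

```python
def is_ready(node):
    """Return True when the node object reports a Ready condition of "True"."""
    for condition in node.get("status", {}).get("conditions", []):
        if condition.get("type") == "Ready":
            return condition.get("status") == "True"
    return False  # no Ready condition reported yet, so keep ignoring the node


def valid_nodes(nodes):
    """Names of the nodes that have passed their health checks."""
    return [node["metadata"]["name"] for node in nodes if is_ready(node)]


nodes = [
    {"metadata": {"name": "node-a"},
     "status": {"conditions": [{"type": "Ready", "status": "True"}]}},
    {"metadata": {"name": "node-b"},
     "status": {"conditions": [{"type": "Ready", "status": "False"}]}},
    {"metadata": {"name": "node-c"}, "status": {}},
]
print(valid_nodes(nodes))
```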

Administrators can use the CLI to manage nodes in an OpenShift instance. When launching node servers, use dedicated node configuration files to define full configuration and security options.

Kubelet:

Each node has a kubelet that updates the node in accordance with the container manifest, which is a YAML file that describes a pod. The kubelet relies on a set of manifests to ensure that its containers start and continue to run. The Kubernetes documentation includes a sample manifest.
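For readers who have not seen a manifest, here is a minimal pod description built in Python and printed as JSON, which Kubernetes accepts alongside YAML. The pod name, label, port, and image location are hypothetical placeholders; only the field names (`apiVersion`, `kind`, `metadata`, `spec.containers`) come from the Kubernetes Pod schema.

```python
import json

pod_manifest = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "hello-pod", "labels": {"app": "hello"}},
    "spec": {
        "containers": [
            {
                "name": "hello",
                "image": "registry.example.com/hello:latest",  # hypothetical image
                "ports": [{"containerPort": 8080}],
            }
        ]
    },
}

# Serialize the manifest so it could be written to a file or sent to an API.
manifest_json = json.dumps(pod_manifest, indent=2)
print(manifest_json)
```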

A kubelet can be given a container manifest by:

- A file path passed on the command line, which is checked every 20 seconds.

- An HTTP endpoint passed on the command line, which is checked every 20 seconds.

- An etcd server that the kubelet watches, such as /registry/hosts/$(hostname -f), acting on any changes.

- An HTTP API on which the kubelet listens and through which a new manifest can be submitted.
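The first option in the list, a file path checked every 20 seconds, can be sketched as a polling loop. This is an illustration of the polling idea and not the kubelet's implementation: the `watch` function, the digest comparison, and the `apply` callback are assumptions, and the demo uses an interval of 0 so that it finishes immediately.

```python
import hashlib
import tempfile
import time
from pathlib import Path

POLL_INTERVAL_SECONDS = 20  # interval quoted in the list above


def manifest_digest(path: Path) -> str:
    """Fingerprint the manifest so that changes can be detected cheaply."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def watch(path: Path, apply, polls: int, interval: float = POLL_INTERVAL_SECONDS):
    """Check the manifest `polls` times and call `apply` whenever it changes."""
    last = None
    for _ in range(polls):
        current = manifest_digest(path)
        if current != last:
            apply(path.read_text())  # new or changed manifest
            last = current
        time.sleep(interval)


applied = []
with tempfile.TemporaryDirectory() as tmp:
    manifest = Path(tmp) / "pod.yaml"
    manifest.write_text("kind: Pod\n")
    # Two polls of an unchanged file should apply the manifest only once.
    watch(manifest, applied.append, polls=2, interval=0)
print(applied)
```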

Service Proxy:

Each node also runs a simple network proxy that reflects the services defined in the API on that node. This allows the node to do simple TCP and UDP stream forwarding across a set of back ends.

Infrastructure Nodes

Infrastructure nodes are nodes that have been designated to run specific components of the OpenShift Container Platform environment. Currently, the simplest way to manage node reboots is to ensure that at least three nodes are available to run infrastructure. When only two nodes are available, rebooting them can result in service interruptions for applications running on OpenShift Container Platform.

Persistent storage

Persistent storage preserves your data and attaches it to containers. Because containers are ephemeral, any data saved inside them is lost when they are restarted or deleted, so persistent storage is critical. It prevents data loss and makes it possible to run stateful applications.
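In Kubernetes terms, a pod usually gets persistent storage by referring to a PersistentVolumeClaim. The sketch below builds both objects as Python dictionaries and prints them as JSON. The names, the requested size, the mount path, and the image are hypothetical; the field names follow the Kubernetes PersistentVolumeClaim and Pod schemas.

```python
import json

claim = {
    "apiVersion": "v1",
    "kind": "PersistentVolumeClaim",
    "metadata": {"name": "db-data"},
    "spec": {
        "accessModes": ["ReadWriteOnce"],
        "resources": {"requests": {"storage": "1Gi"}},
    },
}

pod = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "db"},
    "spec": {
        "containers": [
            {
                "name": "db",
                "image": "registry.example.com/db:latest",  # hypothetical image
                # Data written under mountPath survives container restarts.
                "volumeMounts": [{"name": "data", "mountPath": "/var/lib/data"}],
            }
        ],
        "volumes": [
            {"name": "data", "persistentVolumeClaim": {"claimName": "db-data"}}
        ],
    },
}

print(json.dumps([claim, pod], indent=2))
```

The link between the two objects is the claim name: the pod's volume refers to `db-data`, and the container mounts that volume by its volume name.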

Conclusion

OpenShift is a platform for building and shipping cloud-based applications. It opens opportunities for innovative products and services with faster delivery, and an organization using Red Hat OpenShift can gain a competitive advantage from it. I hope this blog has given you a useful overview of the OpenShift architecture.

Related/References

- Openshift vs Kubernetes: What is the Difference?

- Kubernetes for Beginners

- Red Hat OpenShift- What, Why, and How?

- OpenShift For Beginners: 30+ Hands-On labs You Must Perform | Step-by-Step

- Deploy Applications Using OpenShift: Step-by-Step

- Install Single Node OpenShift Cluster (OKD): Step By Step

- OpenShift Official Documentation
