Note: [DP-201] Designing an Azure Data Solution has been superseded by a newer exam; refer to DP-203.

This blog covers the Step-By-Step Activity Guides of the [DP-201] Designing an Azure Data Solution Hands-On Labs Training program that you must perform to learn this course.

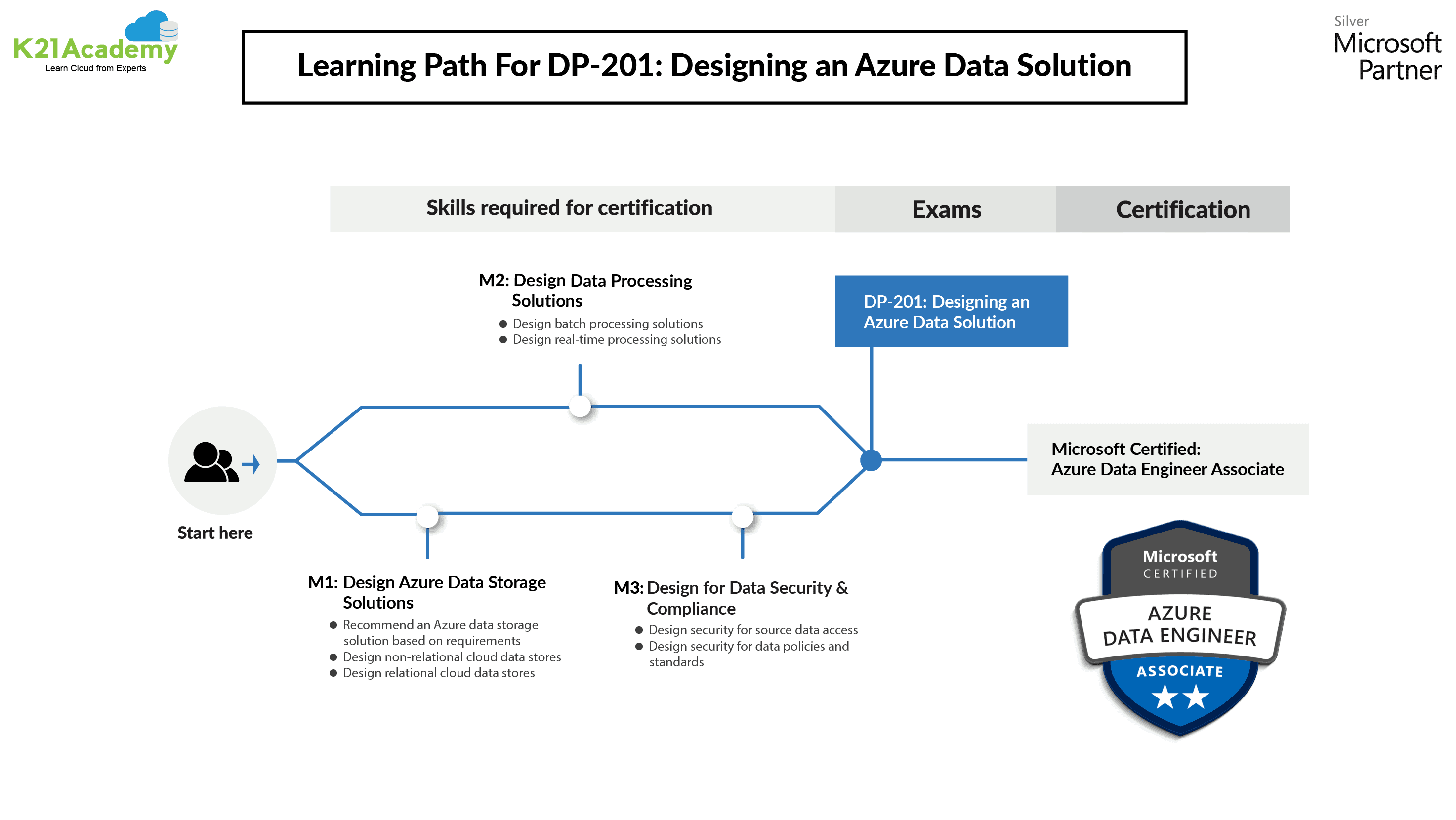

This course is intended for Data Architects, Data Professionals, and Business Intelligence Professionals who want to master the data platform technologies that exist on Microsoft Azure. It is also aimed at those who develop applications that deliver content from the data platform technologies on Microsoft Azure.

Working through the Step-By-Step Activity Guides of the DP-201 training program will prepare you for the DP-201 certification exam.

To know more about the DP-201 exam, you can read our blog on Designing An Azure Data Solution [DP-201]: Everything You Need To Know.

DP-201 | Designing An Azure Data Solution

- Azure Architecture Considerations

- Azure Batch Processing Reference Architectures

- Azure Real-Time Reference Architectures

- Azure Data Platform Security Considerations

- Designing for Scale and Resiliency

- Designing for Efficiency and Operations

Skills Measured In DP-201

- Design Azure data storage solutions (40-45%)

- Design data processing solutions (25-30%)

- Design for data security and compliance (25-30%)

Note: We will take the knowledge gained in these modules and apply it to a scenario described in a case study about AdventureWorks.

Lab 1 – Azure Architecture Considerations

In this lab, we will describe, with examples, how the core principles for creating architectures apply to AdventureWorks.

We will design with security in mind. We will also give specific examples of how to design for performance and scalability in a solution, and describe the availability and recoverability options required by the organization. Finally, we will identify the efficiency and operations needs of AdventureWorks.

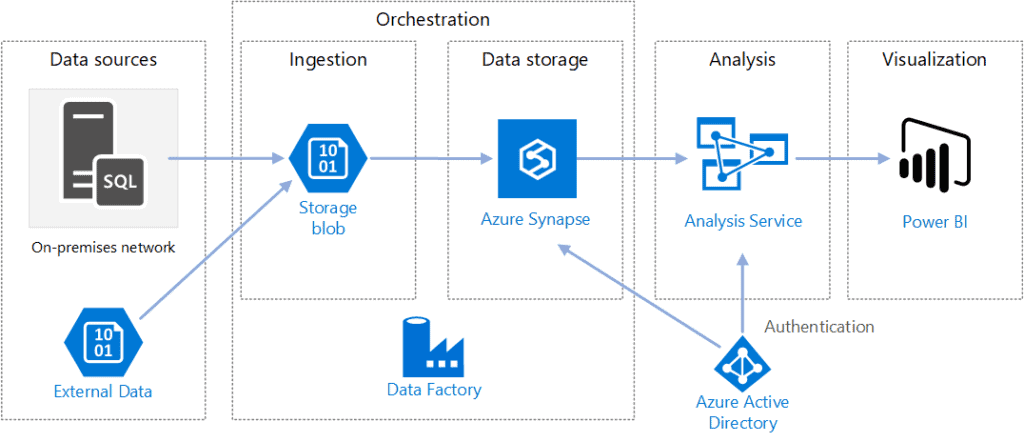

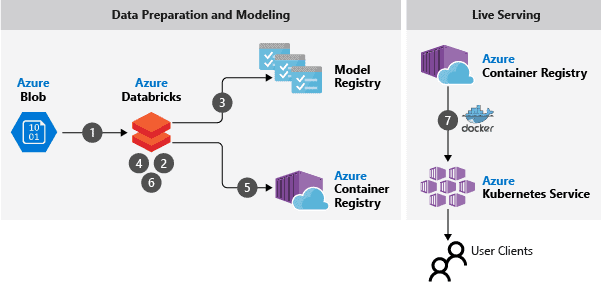

Lab 2 – Azure Batch Processing Reference Architectures

Batch processing: The high-volume nature of big data generally means that solutions must process data files using long-running batch jobs to aggregate, filter, and prepare the data for analysis. Typically, these jobs read source files, process them, and write the output to new files.
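The read, process, and write pattern described above can be sketched in a few lines of plain Python. This is only an illustration, not part of the lab: the function name `run_batch_job`, the `region` and `amount` column names, and the CSV layout are all assumptions made for the example, and a real Azure batch pipeline would use a managed service rather than local files.

```python
import csv
from collections import defaultdict
from pathlib import Path


def run_batch_job(source_dir: Path, output_file: Path, min_amount: float = 0.0) -> dict[str, float]:
    """Read every CSV in source_dir, filter and aggregate rows, write a summary file.

    Hypothetical input layout: each file has 'region' and 'amount' columns.
    """
    totals: dict[str, float] = defaultdict(float)

    # Read: walk the source files in a stable order.
    for source in sorted(source_dir.glob("*.csv")):
        with source.open(newline="") as handle:
            for row in csv.DictReader(handle):
                amount = float(row["amount"])
                # Filter: drop rows below the threshold.
                if amount < min_amount:
                    continue
                # Aggregate: sum the amounts per region.
                totals[row["region"]] += amount

    # Write: emit the prepared data to a new file for later analysis.
    with output_file.open("w", newline="") as handle:
        writer = csv.writer(handle)
        writer.writerow(["region", "total_amount"])
        for region in sorted(totals):
            writer.writerow([region, f"{totals[region]:.2f}"])

    return dict(totals)
```

Calling `run_batch_job(Path("incoming"), Path("summary.csv"))` would process every CSV in a hypothetical `incoming` folder and leave the aggregated totals in `summary.csv`.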

In this lab, we’ll determine the requirements for batch-mode data processing in an Enterprise BI solution in AdventureWorks and develop a high-level architecture that reflects that solution. We’ll then extend the high-level architecture to cover automation of the Enterprise BI solution together with a conversational bot solution in AdventureWorks.

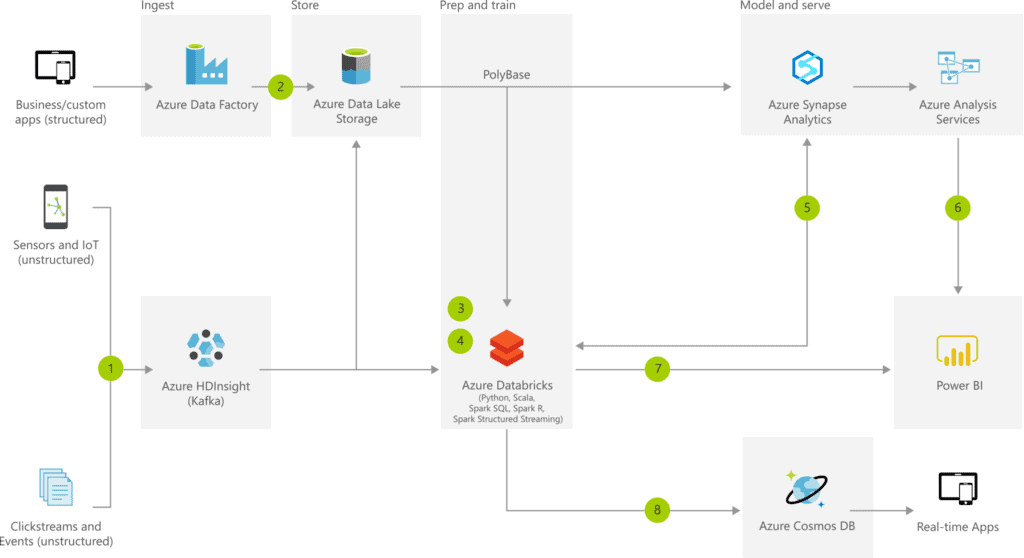

Lab 3 – Azure Real-Time Reference Architectures

In this lab, we’ll identify the requirements for real-time data processing in AdventureWorks and design a high-level architecture that reflects a stream processing pipeline with Azure Stream Analytics.

We’ll also develop a high-level architecture for a stream processing pipeline with Azure Databricks in AdventureWorks and identify which architecture would form part of an Azure IoT reference architecture.
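To make the idea of a stream processing pipeline more concrete, here is a minimal sketch of one common building block: averaging readings over fixed, non-overlapping (tumbling) time windows. It is plain Python and does not use Azure Stream Analytics or Azure Databricks; the function name, the event tuple layout `(timestamp_seconds, device_id, value)`, and the device names are assumptions made for the example.

```python
from collections import defaultdict
from typing import Iterable


def tumbling_window_averages(
    events: Iterable[tuple[float, str, float]],
    window_seconds: float,
) -> dict[tuple[int, str], float]:
    """Average event values per (window index, device) over fixed-size windows.

    Each event is a hypothetical (timestamp_seconds, device_id, value) tuple.
    """
    sums: dict[tuple[int, str], float] = defaultdict(float)
    counts: dict[tuple[int, str], int] = defaultdict(int)

    for timestamp, device_id, value in events:
        # Every timestamp belongs to exactly one window: floor(t / size).
        window_index = int(timestamp // window_seconds)
        key = (window_index, device_id)
        sums[key] += value
        counts[key] += 1

    return {key: sums[key] / counts[key] for key in sums}


readings = [(0.0, "d1", 10.0), (4.0, "d1", 20.0), (5.0, "d1", 30.0), (1.0, "d2", 7.0)]
averages = tumbling_window_averages(readings, window_seconds=5)
```

A real streaming engine emits each window's result as the window closes rather than after all input has been read; this sketch processes a finite list only to show how events are assigned to windows.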

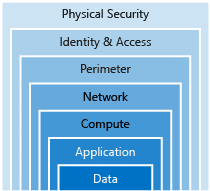

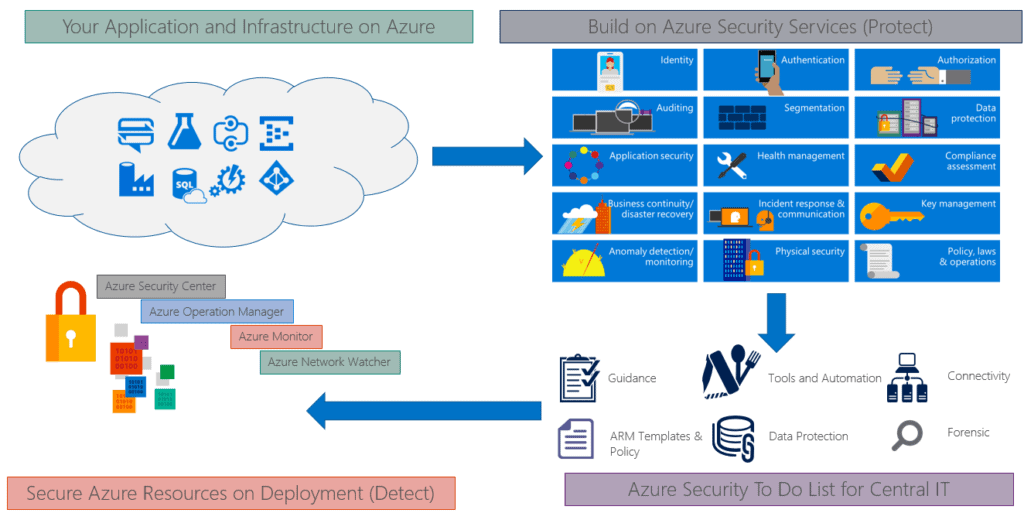

Lab 4 – Azure Data Platform Security Considerations

In this lab, we will explore the range of security options available for building a defense-in-depth approach to securing the AdventureWorks environment. We will review the authentication mechanisms supported by each service and investigate the available network protection options.

We will also learn the encryption options that are available and establish an understanding of network-level and application-level protection. We need to understand the data encryption boundaries and the points in the process at which data is unencrypted.

Lab 5 – Designing For Scale And Resiliency

Scaling falls into two categories: scaling up or down, and scaling in or out.

- Scale up or down: scaling up or down is the process where we increase or decrease the capacity of a given instance. It adjusts the amount of resources a single instance has available.

- Scale in or out: scaling in or out is the process of removing or adding instances to support the load of our solution.

In this lab, we will analyze a range of resiliency and scale issues that have to be considered when designing a solution architecture for an organization.

We will first consider how we incorporate scale into a solution. We will follow this by considering storage and database performance, and how solutions can be made highly available. Finally, we will look at disaster recovery.
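The difference between the two kinds of scaling can be shown with a small model. This is a sketch under stated assumptions, not an Azure API: the `Deployment` class, its fields, and both helper functions are hypothetical names introduced for illustration, and capacity is simplified to a core count.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Deployment:
    """Hypothetical model of a service: N identical instances of a given size."""
    instance_count: int
    cores_per_instance: int

    @property
    def total_cores(self) -> int:
        return self.instance_count * self.cores_per_instance


def scale_vertically(deployment: Deployment, new_cores: int) -> Deployment:
    """Scale up or down: change the capacity of each instance, keep the count."""
    if new_cores < 1:
        raise ValueError("an instance needs at least one core")
    return replace(deployment, cores_per_instance=new_cores)


def scale_horizontally(deployment: Deployment, new_count: int) -> Deployment:
    """Scale in or out: change the number of instances, keep their size."""
    if new_count < 1:
        raise ValueError("a deployment needs at least one instance")
    return replace(deployment, instance_count=new_count)


base = Deployment(instance_count=2, cores_per_instance=4)
scaled_up = scale_vertically(base, new_cores=8)      # 2 instances x 8 cores
scaled_out = scale_horizontally(base, new_count=4)   # 4 instances x 4 cores
```

Both changes reach the same total capacity here, but scaling out also adds redundancy because the load is spread over more instances, while scaling up leaves the instance count unchanged.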

Lab 6 – Designing For Efficiency And Operations

In this lab, we will examine how to maximize the efficiency of using a cloud environment and how to analyze and monitor operational efficiency from the Azure portal. We will also look at how automation can be used to reduce effort and error.

Related/References

- Designing an Azure Data Solution Certification [DP-201]: Everything you need to know

- [DP-100] Microsoft Certified Azure Data Scientist Associate: Everything you must know

- Microsoft Certified Azure Data Scientist Associate | DP 100 | Step By Step Activity Guides (Hands-On Labs)

- [AI-900] Microsoft Certified Azure AI Fundamentals Course: Everything you must know

- Microsoft Azure AI Fundamentals [AI-900]: Step By Step Activity Guides (Hands-On Labs)

Next Task For You

In our Azure Data Engineer training program, we will cover 17 Hands-On Labs. Begin your journey towards becoming a Microsoft Certified: Azure Data Engineer Associate by checking out our FREE CLASS.