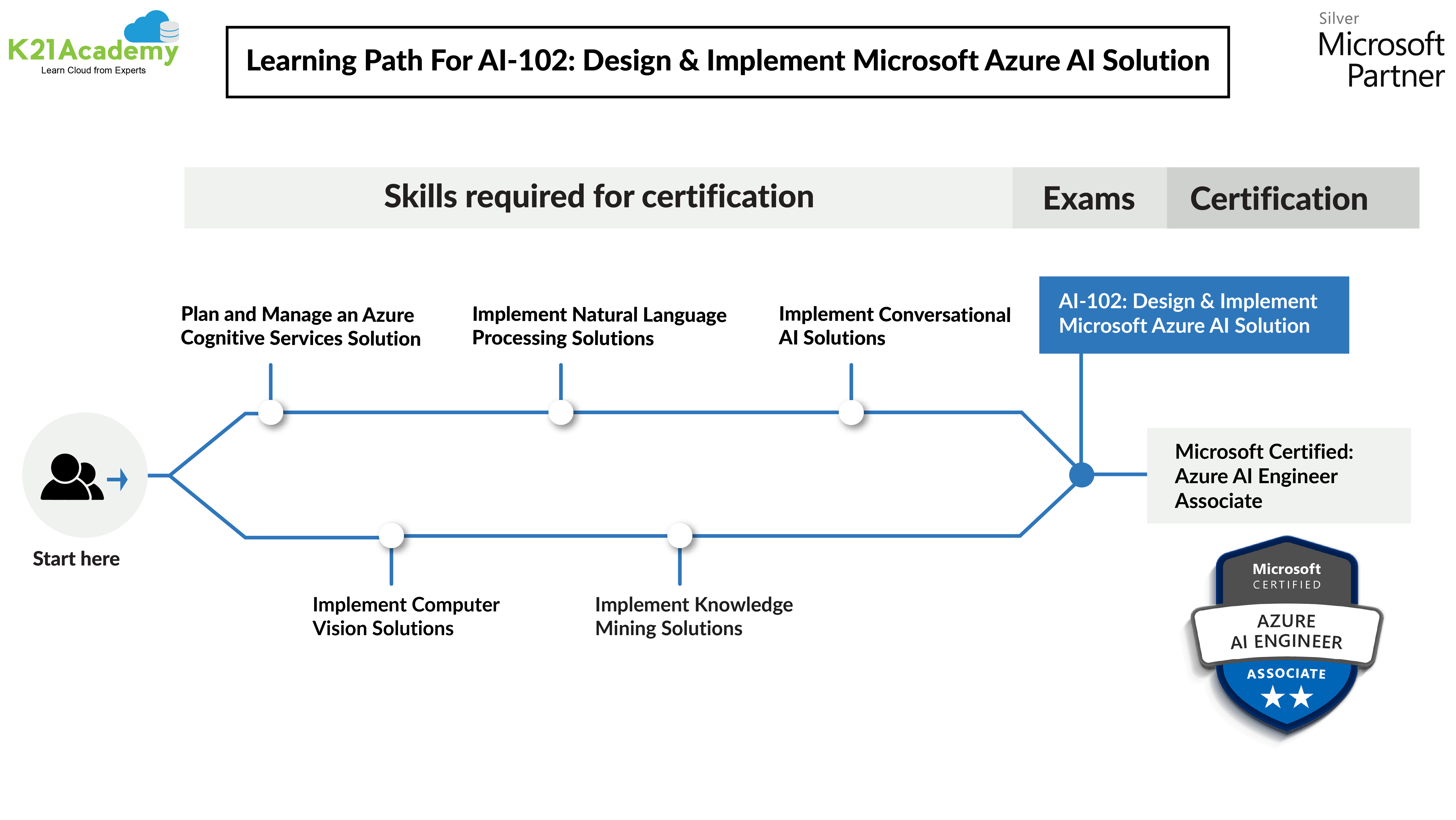

This blog post covers the hands-on labs you should complete to design and implement an Azure AI solution and pass the Microsoft Azure AI Engineer Associate (AI-102) certification exam.

It supports your Azure AI engineering journey, whether you are learning at your own pace or with a team. There are 24 hands-on labs in this course:

- Get Started with Cognitive Services

- Manage Cognitive Services Security

- Monitor Cognitive Services

- Use a Cognitive Services Container

- Analyze Text

- Translate Text

- Recognize and Synthesize Speech

- Translate Speech

- Create a Language Understanding App

- Create a Language Understanding Client Application

- Use the Speech and Language Understanding Services

- Create a QnA Solution

- Create a Bot with the Bot Framework SDK

- Create a Bot with Bot Framework Composer

- Analyze Images with Computer Vision

- Analyze Video with Video Indexer

- Classify Images with Custom Vision

- Detect Objects in Images with Custom Vision

- Detect, Analyze, and Recognize Faces

- Read Text in Images

- Extract Data from Forms

- Create an Azure Cognitive Search solution

- Create a Custom Skill for Azure Cognitive Search

- Create a Knowledge Store with Azure Cognitive Search

Here’s a quick sneak peek at how to start learning to design and implement an Azure AI solution, and to prepare for the Microsoft Azure AI Engineer Associate (AI-102) certification, through hands-on practice.

Module 1: Introduction to AI on Azure

Artificial Intelligence (AI) is increasingly prevalent in the software applications we use every day, including digital assistants in our homes and cellphones, automotive technology in the vehicles that take us to work, and smart productivity applications that help us do our jobs when we get there. In this module, you’ll identify the Azure services used for AI application development, such as Azure Cognitive Services, Azure Bot Service, and Azure Cognitive Search.

Module 2: Developing AI Apps with Cognitive Services

1) Get Started With Cognitive Services

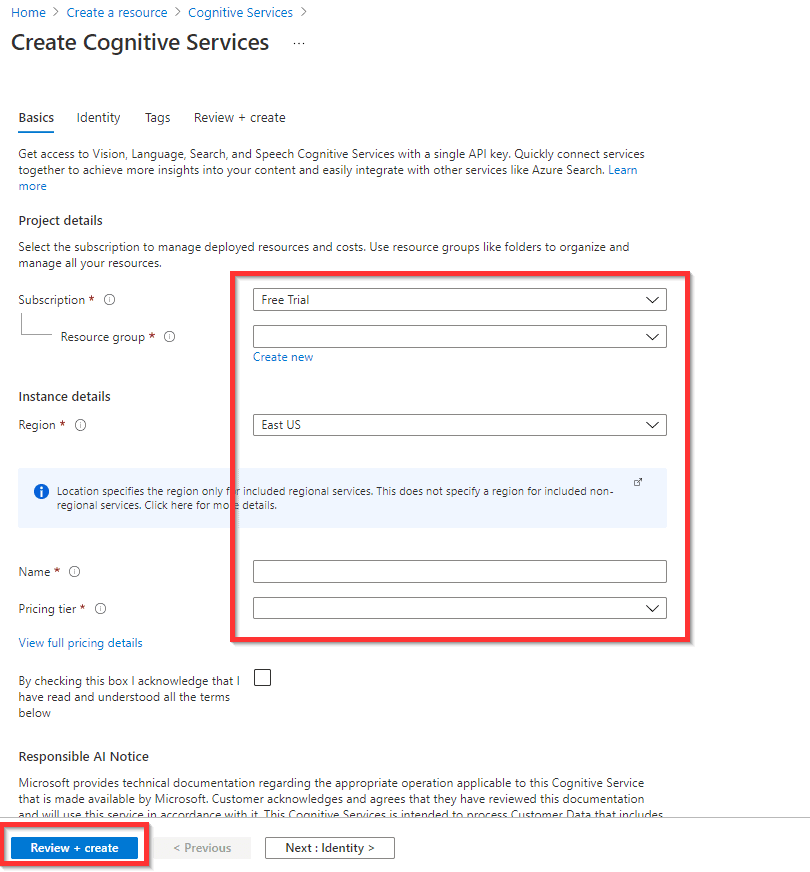

Azure Cognitive Services includes a wide range of AI capabilities that you can use in your applications. To use any of them, you create a resource in your Azure subscription. The resource defines an endpoint where the service can be consumed, provides access keys for authenticated access, and manages billing for your application’s usage of the service.

In this lab, you will:

- Provision a Cognitive Services resource.

- Consume the cognitive service resource through a REST interface.

- Use an SDK to consume the cognitive service.
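As a rough illustration of the REST approach, the sketch below builds (but does not send) a language-detection request using only the Python standard library. The resource name, the placeholder key, and the `v3.1` Text Analytics path are assumptions made for the example; use the endpoint and key shown for your own resource in the Azure portal.

```python
import json
import urllib.request


def build_language_detection_request(endpoint: str, key: str, text: str) -> urllib.request.Request:
    """Build a POST request that asks the service to detect the language of `text`."""
    # Assumed API path; check the version your resource supports.
    url = endpoint.rstrip("/") + "/text/analytics/v3.1/languages"
    body = {"documents": [{"id": "1", "text": text}]}
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            # The access key authenticates the call against your resource.
            "Ocp-Apim-Subscription-Key": key,
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Hypothetical endpoint and placeholder key, for illustration only.
request = build_language_detection_request(
    "https://my-resource.cognitiveservices.azure.com/",
    "<your-key>",
    "Bonjour tout le monde",
)
print(request.get_method(), request.full_url)
```

To send the request, pass it to `urllib.request.urlopen(request)` and parse the JSON response. An SDK wraps the same endpoint and key behind a client object, so the two approaches need the same two values.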

2) Manage Cognitive Services Security

Security is a critical consideration for any application, and as a developer, you should ensure that access to resources such as cognitive services is restricted to only those who require it. When you provision a cognitive service resource in your Azure subscription, you are defining an endpoint through which the service can be consumed by an application.

In this lab, you will:

- Manage subscription keys for a cognitive services resource.

- Use Azure Key Vault to protect a cognitive services key.

3) Monitor Cognitive Services

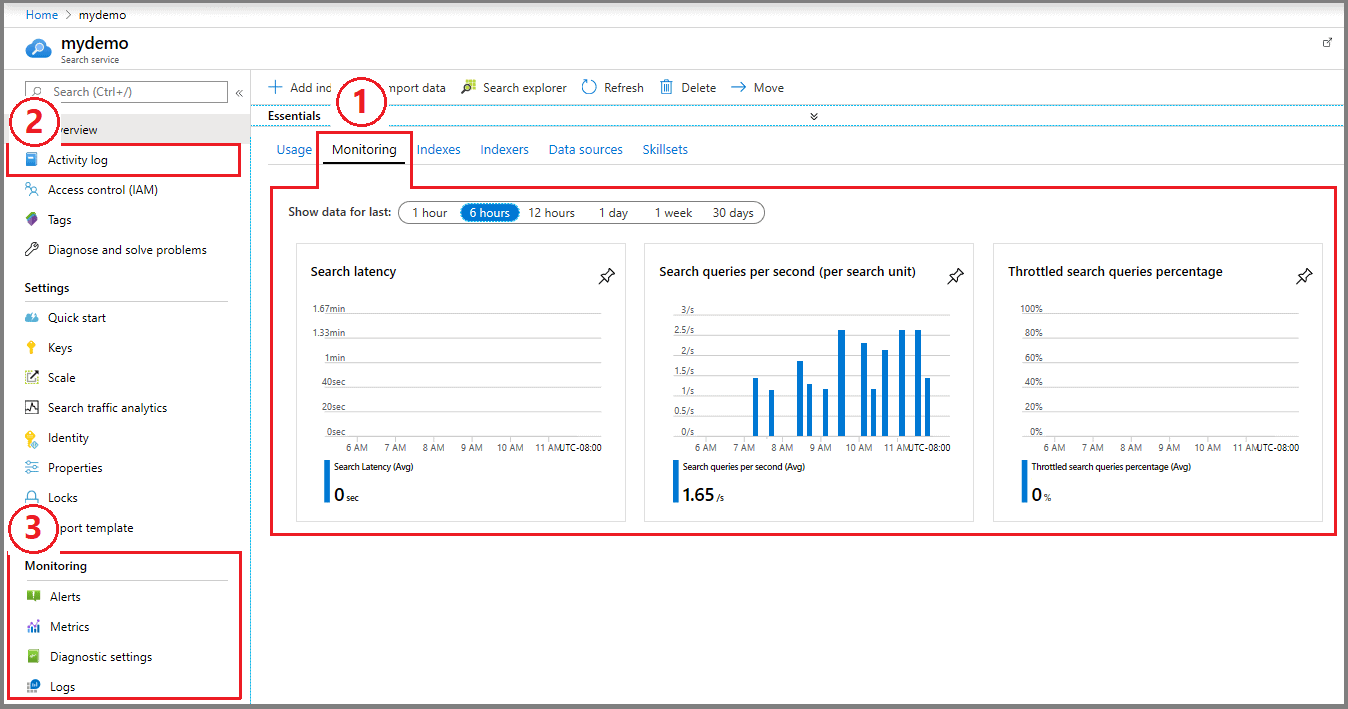

The Monitoring section of the Cognitive Services resource blade in the Azure portal includes four areas that you can use for monitoring your resource. These include Alerts, Metrics, Diagnostic settings, and Logs.

In this lab, you will:

- Configure an alert for your cognitive services resource.

- Visualize a metric for your cognitive services resource.

4) Use a Cognitive Services Container

Containers allow you to host Azure Cognitive Services either on-premises or on Azure. For example, if your application uses data in an on-premises SQL Server, you can deploy Cognitive Services in containers on the same network. Now your data can stay on your local network and not move to the cloud.

In this lab, you will:

- Deploy and run a cognitive services container.

- Use a container to consume a cognitive service.

Module 3: Getting Started with Natural Language Processing

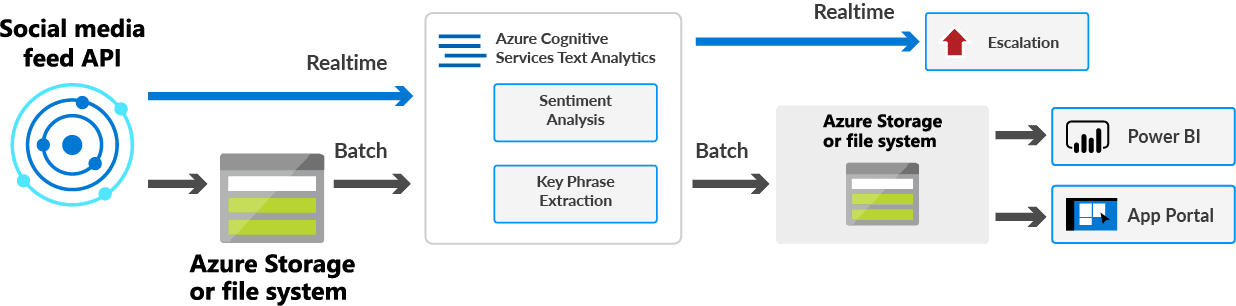

1) Analyze Text

The Text Analytics service is designed to help you extract information from text. It provides functionality that you can use for language detection, key phrase extraction, sentiment analysis, named entity recognition, and entity linking.

You can provision Text Analytics as a single-service resource, or you can use the Text Analytics API in a multi-service Cognitive Services resource.

In this lab, you will use the Text Analytics service to:

- Detect language.

- Evaluate sentiment.

- Identify key phrases.

- Extract entities.

- Extract linked entities.
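To give a feel for what the service returns, here is a minimal sketch that summarizes a sentiment response. The `sample_response` dictionary is hand-written to resemble the v3.x response shape (`documents`, `confidenceScores`, `errors`); it is an assumption for illustration, not output captured from the service.

```python
from typing import Dict, List


def summarize_sentiment(response: Dict) -> List[str]:
    """Return one summary line per document or per-document error."""
    lines = []
    for doc in response.get("documents", []):
        label = doc["sentiment"]
        # "mixed" has no score of its own, so fall back to 0.0.
        score = doc["confidenceScores"].get(label, 0.0)
        lines.append(f"{doc['id']}: {label} ({score:.2f})")
    for err in response.get("errors", []):
        lines.append(f"{err['id']}: error {err['error']['code']}")
    return lines


# Hand-written sample in the assumed response shape.
sample_response = {
    "documents": [
        {
            "id": "1",
            "sentiment": "positive",
            "confidenceScores": {"positive": 0.97, "neutral": 0.02, "negative": 0.01},
        }
    ],
    "errors": [
        {"id": "2", "error": {"code": "InvalidArgument", "message": "Document text is empty."}}
    ],
}

for line in summarize_sentiment(sample_response):
    print(line)
```

Handling the `errors` list separately matters because a batch can partly succeed: one bad document does not fail the whole request.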

2) Translate Text

The Translator service provides a multilingual text translation API that you can use for language detection, one-to-many translation, and script transliteration. You can provision Translator as a single-service resource, or use the Translator API in a multi-service Cognitive Services resource.

In this lab, you will use the Translator service to:

- Detect language.

- Translate text.
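One-to-many translation means a single request can name several target languages. The sketch below builds such a request against the Translator v3.0 REST API without sending it. The key and region values are placeholders, and the function name is hypothetical.

```python
import json
import urllib.parse
import urllib.request

TRANSLATOR_ENDPOINT = "https://api.cognitive.microsofttranslator.com"


def build_translate_request(key, region, text, to_languages):
    """Build a POST request that translates `text` into every language in `to_languages`."""
    # Repeating the "to" parameter requests one translation per target language.
    params = [("api-version", "3.0")] + [("to", lang) for lang in to_languages]
    url = f"{TRANSLATOR_ENDPOINT}/translate?{urllib.parse.urlencode(params)}"
    return urllib.request.Request(
        url,
        data=json.dumps([{"Text": text}]).encode("utf-8"),
        headers={
            "Ocp-Apim-Subscription-Key": key,
            # The region header is needed when the key belongs to a regional or multi-service resource.
            "Ocp-Apim-Subscription-Region": region,
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder credentials, for illustration only.
translate_request = build_translate_request("<your-key>", "eastus", "Hello", ["fr", "de"])
print(translate_request.full_url)
```

Omitting a `from` parameter, as here, leaves the source language for the service to detect, which is how the same call covers both lab tasks.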

Module 4: Building Speech-Enabled Applications

1) Recognize and Synthesize Speech

The Speech service provides APIs that you can use to build speech-enabled applications. Specifically, it supports capabilities such as Speech-to-Text, Text-to-Speech, Speech Translation, Speaker Recognition, and Intent Recognition.

In this lab, you will use the Speech service to:

- Recognize speech.

- Synthesize speech.

2) Translate Speech

Translation of speech builds on speech recognition by recognizing and transcribing spoken input in a specified language, and returning translations of the transcription in one or more other languages.

In this lab, you will use the Speech service to:

- Implement speech translation.

- Synthesize the translation to speech.

Module 5: Creating Language Understanding Solutions

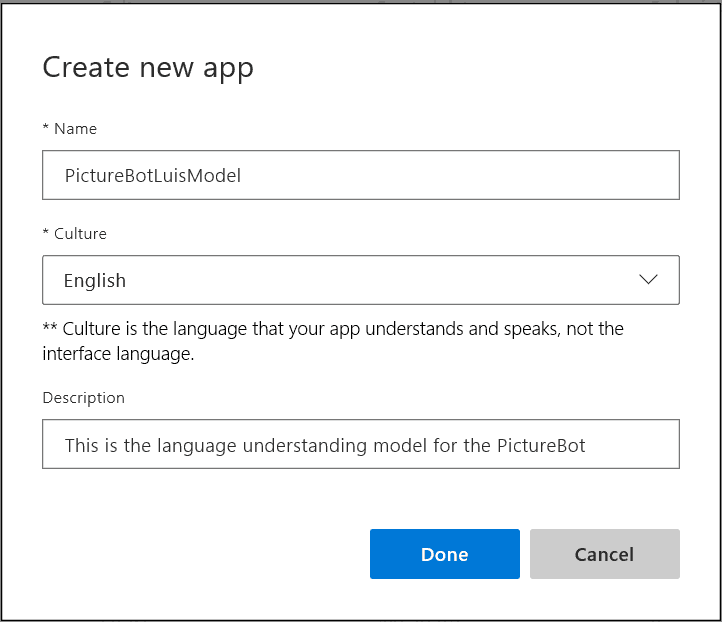

1) Create a Language Understanding App

The Language Understanding service enables you to train a language model (referred to as a Language Understanding conversation app) that can interpret natural language utterances to determine the user’s intent and any entities to which the intent should be applied.

In this lab, you will use the Language Understanding service to:

- Create intents.

- Create entities.

- Test and publish a conversational app.

- Use Active Learning to improve predictions.

2) Create a Language Understanding Client Application

You can create client applications that consume Language Understanding apps directly through REST interfaces, or by using language-specific software development kits (SDKs).

In this lab, you will use the Language Understanding service to:

- Use the SDK to submit an utterance.

- Process the resulting prediction.
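Processing a prediction usually comes down to reading the top intent, checking its score, and picking out the entities. The sketch below works on a hand-written dictionary that resembles the V3 prediction response shape; the intent names, entity names, and the 0.5 threshold are assumptions for illustration.

```python
def interpret_prediction(prediction_response, min_score=0.5, fallback_intent="None"):
    """Return (intent, entities), falling back when the top intent scores too low."""
    prediction = prediction_response["prediction"]
    intent = prediction["topIntent"]
    score = prediction["intents"][intent]["score"]
    if score < min_score:
        # Treat low-confidence predictions as "not understood".
        intent = fallback_intent
    return intent, prediction.get("entities", {})


# Hand-written sample in the assumed response shape.
sample_prediction = {
    "query": "what time is it in London",
    "prediction": {
        "topIntent": "GetTime",
        "intents": {"GetTime": {"score": 0.93}, "None": {"score": 0.02}},
        "entities": {"Location": ["London"]},
    },
}

intent, entities = interpret_prediction(sample_prediction)
print(intent, entities)
```

A client application would then branch on the returned intent, for example by calling a time lookup with the `Location` entity.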

3) Use the Speech and Language Understanding Services

While many applications work with text-based natural language input, it’s also common to see applications that engage with users through speech; for example, digital assistants on smartphones, home automation devices, and in-car systems.

In this lab, you will:

- Use the Speech SDK to recognize the intent.

- Process the resulting prediction.

Module 6: Building a QnA Solution

1) Create a QnA Solution

The QnA Maker service enables you to define a knowledge base of question and answer pairs that can be queried using natural language input. The knowledge base can be published to a REST endpoint and consumed by client applications, commonly bots.

In this lab, you will use the QnA Maker service to:

- Create a knowledge base.

- Create a QnA bot.

Module 7: Conversational AI and the Azure Bot Service

1) Create a Bot with the Bot Framework SDK

Bot solutions on Microsoft Azure are supported by the following technologies:

- Azure Bot Service. A cloud service that enables bot delivery through one or more channels, and integration with other services.

- Bot Framework Service. A component of Azure Bot Service that provides a REST API for handling bot activities.

- Bot Framework SDK. A set of tools and libraries for end-to-end bot development that abstracts the REST interface, enabling bot development in a range of programming languages.

In this lab, you will use the Bot Framework SDK to create a bot.

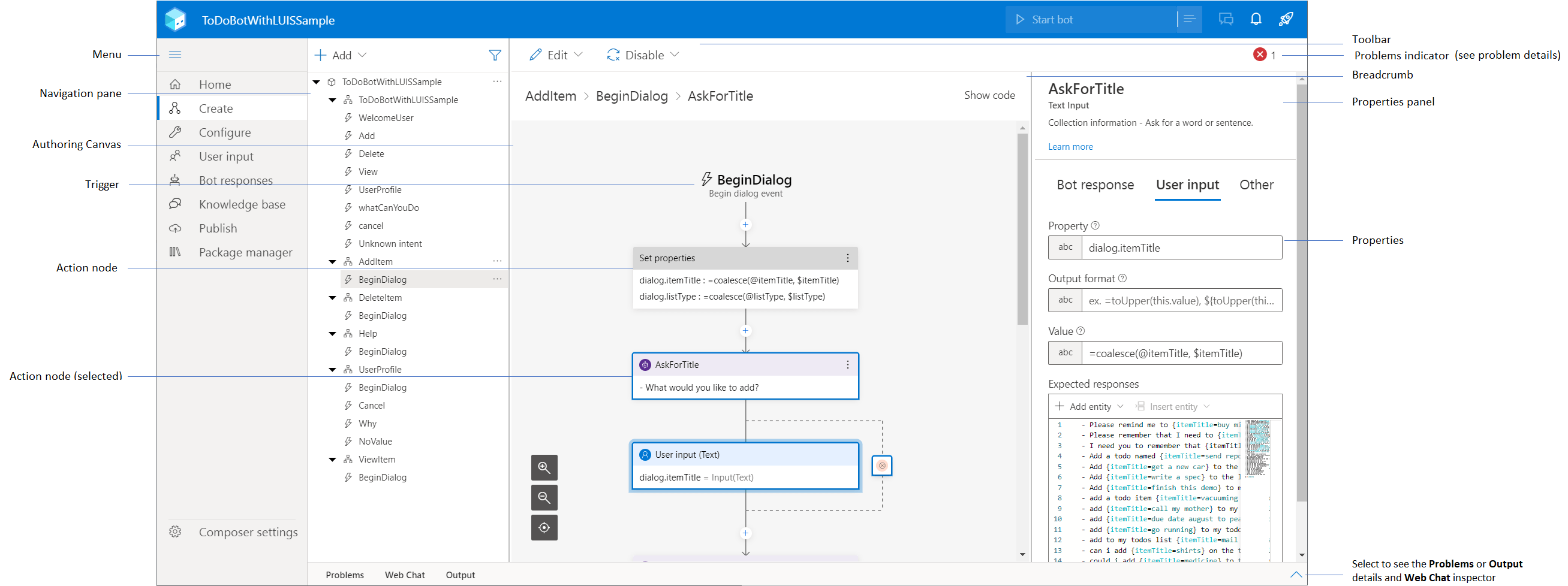

2) Create a Bot with Bot Framework Composer

Bot Framework Composer is a visual designer that lets you quickly and easily build sophisticated conversational bots without writing code. Composer is an open-source tool that presents a visual canvas for building bots, and it uses the latest SDK features.

In this lab, you will use the Bot Framework Composer to create a bot.

Module 8: Getting Started with Computer Vision

1) Analyze Images with Computer Vision

The Computer Vision service is designed to help you extract information from images. It provides functionality that you can use for description and tag generation; object detection; face detection; image metadata, color, and type analysis; category identification; brand detection; moderation rating; optical character recognition; and smart thumbnail generation.

In this lab, you will use the Computer Vision service to:

- Analyze images.

- Create a thumbnail.

2) Analyze Video with Video Indexer

The Video Indexer service provides a Video Indexer portal website that you can use to upload, view, and analyze videos interactively. You can use a free, standalone version of the Video Indexer service (with some limitations), or you can connect it to an Azure Media Services resource in your Azure subscription for full functionality.

Module 9: Developing Custom Vision Solutions

1) Classify Images with Custom Vision

Image classification is a computer vision technique in which a model is trained to predict a class label for an image based on its contents. Usually, the class label relates to the main subject of the image (in other words, it indicates what this is an image of).

In this lab, you will use the Custom Vision service to:

- Create an image classification model in the Custom Vision portal.

- Use the Custom Vision SDK with a training resource.

- Use the Custom Vision SDK with a prediction resource.

2) Detect Objects in Images with Custom Vision

Object detection is a form of computer vision in which a model is trained to detect the presence and location of one or more classes of objects in an image.

In this lab, you will use the Custom Vision service to:

- Create an object detection model in the Custom Vision portal.

- Use the Custom Vision SDK with a training resource.

- Use the Custom Vision SDK with a prediction resource.
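An object detection prediction pairs each tag with a probability and a bounding box. The sketch below assumes the box values are proportions of the image size (between 0 and 1) and converts them to pixels, keeping only predictions above a threshold. The sample predictions, the tag names, and the 0.5 threshold are made up for the example.

```python
def to_pixel_box(bounding_box, image_width, image_height):
    """Convert a proportional box to (left, top, width, height) in pixels."""
    return (
        round(bounding_box["left"] * image_width),
        round(bounding_box["top"] * image_height),
        round(bounding_box["width"] * image_width),
        round(bounding_box["height"] * image_height),
    )


def confident_detections(predictions, image_width, image_height, threshold=0.5):
    """Return (tag, pixel_box) pairs for predictions at or above the threshold."""
    return [
        (p["tagName"], to_pixel_box(p["boundingBox"], image_width, image_height))
        for p in predictions
        if p["probability"] >= threshold
    ]


# Hand-written sample predictions in the assumed shape.
sample_predictions = [
    {"tagName": "apple", "probability": 0.92,
     "boundingBox": {"left": 0.25, "top": 0.5, "width": 0.5, "height": 0.25}},
    {"tagName": "banana", "probability": 0.12,
     "boundingBox": {"left": 0.0, "top": 0.0, "width": 0.1, "height": 0.1}},
]

print(confident_detections(sample_predictions, 640, 480))
```

Filtering by probability is a design choice: a detection model typically returns many low-confidence candidates, and drawing all of them clutters the result.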

Source: Microsoft

Module 10: Detecting, Analyzing, and Recognizing Faces

1) Detect, Analyze, and Recognize Faces

There are two cognitive services that you can use to build solutions that detect faces in images.

- The Computer Vision service: The Computer Vision service enables you to detect human faces in an image, returning a bounding box for the location of each face. It also returns some facial feature information about each detected face.

- The Face service: The Face service offers more comprehensive facial analysis capabilities than the Computer Vision service.

In this lab, you will:

- Use the Computer Vision service to detect faces.

- Use the Face service to analyze faces.

- Create a facial recognition model.

Module 11: Reading Text in Images and Documents

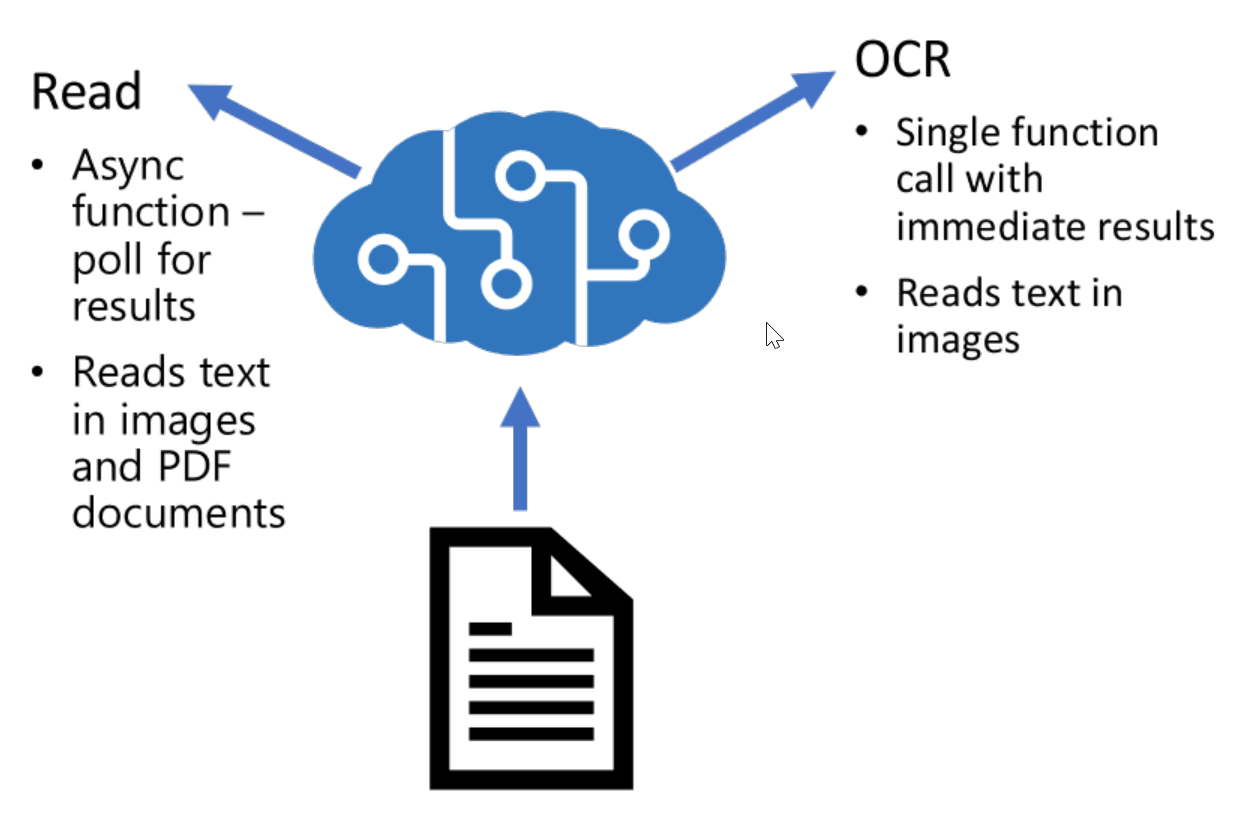

1) Read Text in Images

The Computer Vision service offers two APIs that you can use to read text.

- The OCR API: Use this API to read small to medium volumes of text from images.

- The Read API: Use this API to read small to large volumes of text from images and PDF documents.

In this lab, you will use the Computer Vision service to:

- Read text using the OCR API.

- Read text using the Read API.

Source: Microsoft

2) Extract Data from Forms

The Form Recognizer service enables you to extract data from forms, including a semantic understanding of the fields in the form and their corresponding values.

In this lab, you will use the Form Recognizer service to:

- Train a custom model using unlabeled sample forms.

- Train a custom model using labeled sample forms.

Module 12: Creating a Knowledge Mining Solution

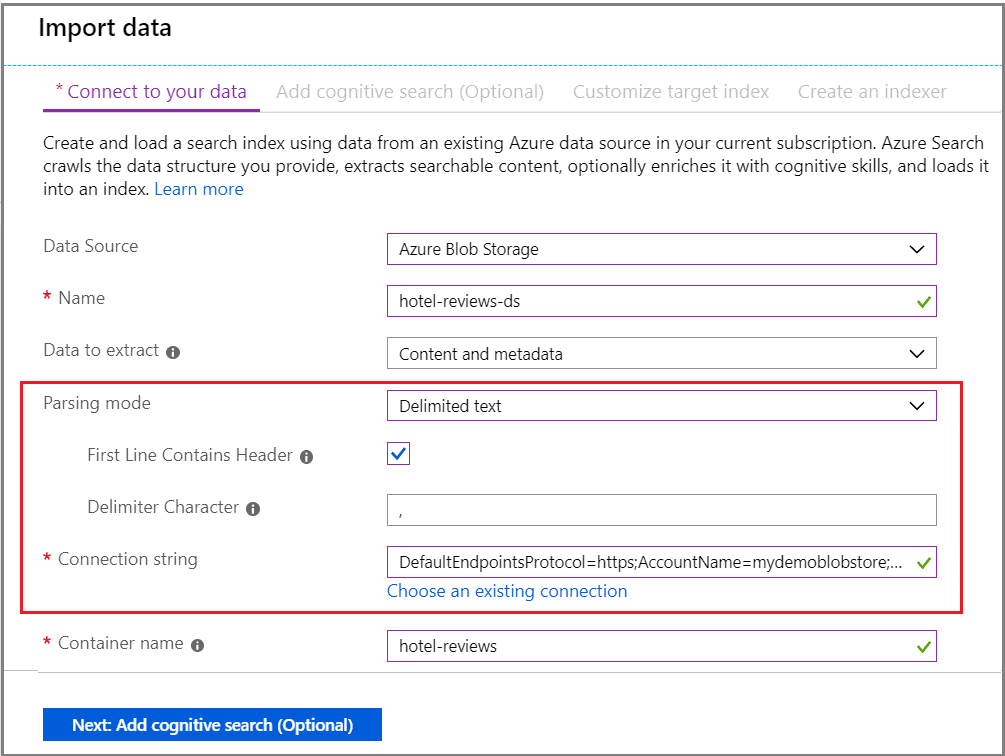

1) Create an Azure Cognitive Search solution

A cognitive search solution consists of multiple components, each playing an important part in the process of extracting, enriching, indexing, and searching data.

In this lab, you will use Azure Cognitive Search to:

- Create an indexing solution.

- Modify an indexing solution.

- Query an index from a client application.
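Querying an index from a client comes down to an HTTP call against the index's `docs` collection. The sketch below builds (but does not send) a simple search request. The service name, index name, and key are hypothetical, and the `2020-06-30` API version is an assumption; a client should use a query key rather than an admin key.

```python
import urllib.parse
import urllib.request


def build_search_request(service_name, index_name, query_key, search_text):
    """Build a GET request that runs a full-text search against an index."""
    params = urllib.parse.urlencode({"api-version": "2020-06-30", "search": search_text})
    url = f"https://{service_name}.search.windows.net/indexes/{index_name}/docs?{params}"
    # The key is passed in the "api-key" header.
    return urllib.request.Request(url, headers={"api-key": query_key})


# Hypothetical service and index names, placeholder key.
search_request = build_search_request("my-search", "my-index", "<query-key>", "london hotels")
print(search_request.full_url)
```

Sending the request with `urllib.request.urlopen(search_request)` returns a JSON document whose `value` array holds the matching documents.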

2) Create a Custom Skill for Azure Cognitive Search

You can use the predefined skills in Azure Cognitive Search to greatly enrich an index by extracting additional information from the source data.

In this lab, you will:

- Use an Azure Function to implement a custom skill.

- Integrate a custom skill into a skillset.
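A custom skill is a web endpoint that accepts a batch of records and returns one result per record, matched by `recordId`. The sketch below shows that contract as a plain function, leaving out the Azure Function wrapper. The `text` input, the `wordCount` output, and the word-counting logic are assumptions chosen for illustration.

```python
def run_word_count_skill(request_body):
    """Process a custom-skill request and return a response in the same record order."""
    results = []
    for record in request_body.get("values", []):
        text = record.get("data", {}).get("text")
        result = {"recordId": record["recordId"], "data": {}, "errors": [], "warnings": []}
        if isinstance(text, str):
            # Hypothetical enrichment: count whitespace-separated words.
            result["data"] = {"wordCount": len(text.split())}
        else:
            # Report the problem per record instead of failing the whole batch.
            result["errors"].append({"message": "Missing 'text' input."})
        results.append(result)
    return {"values": results}


sample_request = {
    "values": [
        {"recordId": "1", "data": {"text": "Margie's Travel offers city breaks"}},
        {"recordId": "2", "data": {}},
    ]
}

print(run_word_count_skill(sample_request))
```

In an Azure Function, the HTTP trigger would parse the request JSON, call a function like this one, and return the result as the response body. The skillset then maps `wordCount` to a field through the skill's outputs.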

3) Create a Knowledge Store with Azure Cognitive Search

Azure Cognitive Search enables you to define a knowledge store in the skillset that encapsulates your enrichment pipeline. The knowledge store consists of projections of the enriched data, which can be JSON objects, tables, or image files. When an indexer runs the pipeline to create or update an index, the projections are generated and persisted in the knowledge store.

In this lab, you will create a knowledge store with object, file, and table projections.
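The three projection types can be pictured as a `knowledgeStore` section inside the skillset definition. The sketch below builds such a section as a Python dictionary and prints it as JSON. The container names, table name, key name, and source paths are hypothetical, and the connection string is a placeholder.

```python
import json

# Hypothetical knowledge store definition with one projection group per type.
knowledge_store = {
    "storageConnectionString": "<your-storage-connection-string>",
    "projections": [
        {   # Object projection: each enriched document saved as a JSON blob.
            "objects": [{"storageContainer": "enriched-docs", "source": "/document/projection"}],
            "tables": [],
            "files": [],
        },
        {   # File projection: images extracted from documents saved as files.
            "objects": [],
            "tables": [],
            "files": [{"storageContainer": "extracted-images", "source": "/document/normalized_images/*"}],
        },
        {   # Table projection: one row per key phrase in a storage table.
            "objects": [],
            "tables": [{
                "tableName": "KeyPhrases",
                "generatedKeyName": "keyphrase_id",
                "source": "/document/projection/key_phrases/*",
            }],
            "files": [],
        },
    ],
}

print(json.dumps({"knowledgeStore": knowledge_store}, indent=2))
```

Keeping each type in its own projection group means the three outputs are generated independently of one another.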


Next Task For You

In our Microsoft Azure AI Engineer Associate Certification AI-102 training program, we cover all the exam objectives, 24 hands-on labs, and practice tests. Begin your journey towards becoming a Microsoft Certified: Azure AI Engineer Associate by checking out our FREE CLASS.
