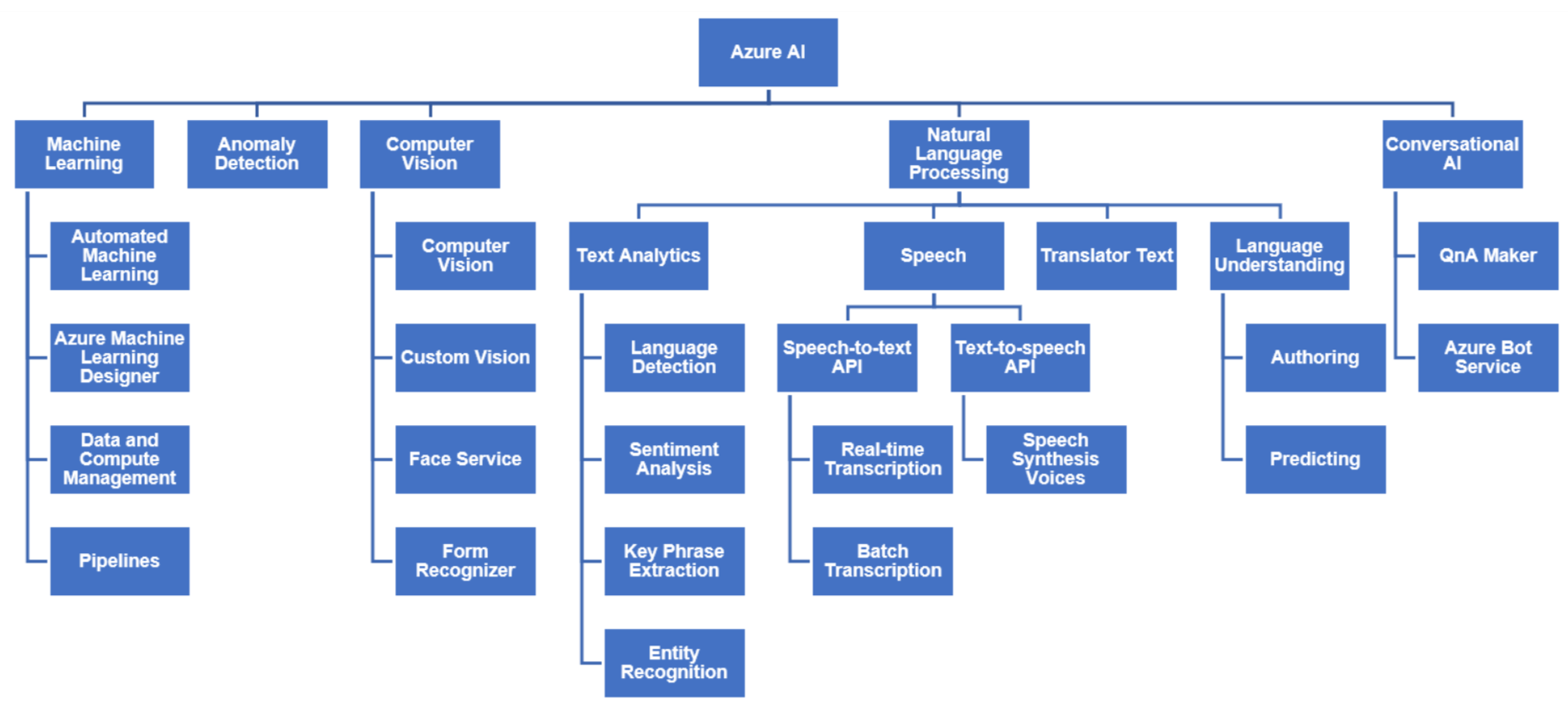

This blog post gives a quick overview of Computer Vision, Cognitive Services, Logic Apps, QnA Maker, Text Analytics, and Conversational AI. The Microsoft Azure AI Fundamentals (AI-900) certification is for anyone looking to start working in, or shift their career to, the Azure AI and Machine Learning domain.

In the Day 2 session, we got an overview of custom AI algorithms, Classification, Clustering, Compute, and Automated ML.

This blog covers the following topics: Computer Vision, Cognitive Services, Logic Apps, QnA Maker, Text Analytics, Conversational AI, and Natural Language Processing. We also performed a hands-on exercise in which we created a chatbot.

Here are the questions that we discussed in the Azure AI-900 Day 3 Session:

> Computer Vision, Cognitive Services

Image classification, object detection, optical character recognition (OCR), and screen readers are some widely used applications of Computer Vision in Azure. We also looked at QnA Maker and saw how to make a chatbot in Microsoft Azure.

Source: Microsoft

Here are some FAQs:

Q.1 What is Computer Vision, and what are its applications?

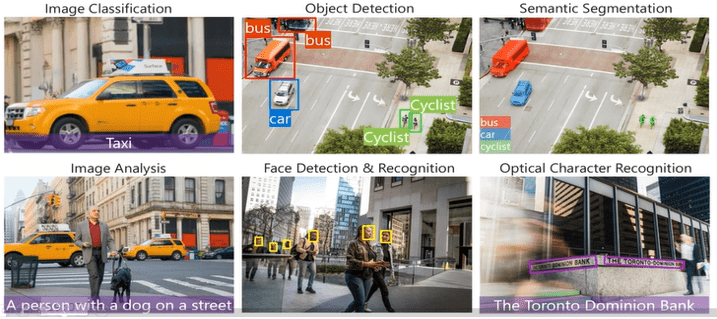

Ans. Computer vision is a field of artificial intelligence that trains computers to interpret and understand the visual world. Using digital images from cameras and videos and deep learning models, machines can accurately identify and classify objects — and then react to what they “see.”

The Computer Vision API exposes image models that Microsoft has already built and trained. It offers several capabilities:

- Image classification: The API returns a number of tags that classify the image, each with a confidence score indicating how strongly the model associates the image with that tag.

- OCR: The API reads text within images and returns it. It also works with handwritten text, not just printed text such as signs.

- Facial Recognition: The API recognizes the faces of celebrities or other well-known people within images.

- Landmark Recognition: The API recognizes landmarks within images.
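To make the idea of tags with confidence scores concrete, here is a minimal Python sketch. It assumes a response shaped like the `tags` array of an image-analysis result; the sample JSON, the tag names, the scores, and the helper name `confident_tags` are made up for illustration and are not output from the real service.

```python
import json

# Hand-written sample shaped like an image-analysis "tags" result.
# The tag names and scores are invented for illustration only.
SAMPLE_TAGS_RESPONSE = """
{
  "tags": [
    {"name": "dog", "confidence": 0.98},
    {"name": "grass", "confidence": 0.91},
    {"name": "ball", "confidence": 0.42}
  ]
}
"""


def confident_tags(response_text, min_confidence=0.5):
    """Return (name, confidence) pairs at or above the threshold, highest first."""
    tags = json.loads(response_text).get("tags", [])
    kept = [
        (tag["name"], tag["confidence"])
        for tag in tags
        if tag["confidence"] >= min_confidence
    ]
    return sorted(kept, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    for name, score in confident_tags(SAMPLE_TAGS_RESPONSE):
        print(f"{name}: {score:.0%}")
```

Filtering on a threshold is a common way to use the confidence score: low-scoring tags are dropped so that only the labels the model is reasonably sure about reach the application.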

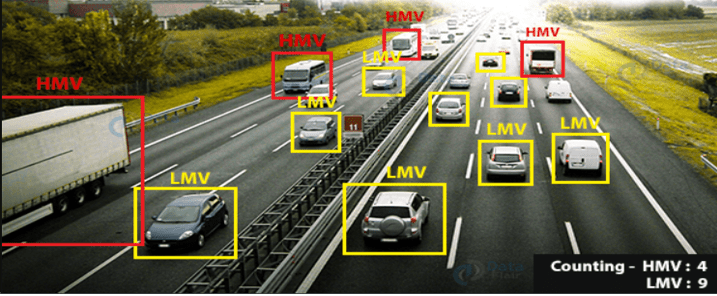

Example: a problem solved using Computer Vision.

In the diagram, Computer Vision detects HMVs (Heavy Motor Vehicles) and LMVs (Light Motor Vehicles) and counts the vehicles of each type.
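The counting step in this example can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the detections are hand-written dictionaries in the style of an object-detection result, and the mapping from detector labels to LMV/HMV (`VEHICLE_CLASS`) is hypothetical, not something the service provides.

```python
from collections import Counter

# Hypothetical mapping from detector labels to the two classes in the example.
VEHICLE_CLASS = {
    "car": "LMV",
    "motorcycle": "LMV",
    "truck": "HMV",
    "bus": "HMV",
}

# Hand-written detections in the style of an object-detection result.
SAMPLE_DETECTIONS = [
    {"object": "car", "confidence": 0.93},
    {"object": "truck", "confidence": 0.88},
    {"object": "car", "confidence": 0.71},
    {"object": "bus", "confidence": 0.35},
    {"object": "person", "confidence": 0.97},
]


def count_vehicles(detections, min_confidence=0.5):
    """Count confident detections per vehicle class, ignoring non-vehicles."""
    counts = Counter()
    for detection in detections:
        vehicle_class = VEHICLE_CLASS.get(detection["object"])
        if vehicle_class is not None and detection["confidence"] >= min_confidence:
            counts[vehicle_class] += 1
    return counts


if __name__ == "__main__":
    print(dict(count_vehicles(SAMPLE_DETECTIONS)))
```

With the sample data, the low-confidence bus and the person are skipped, so only two LMVs and one HMV are counted.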

Some important applications of Computer Vision:

- Image classification

- Object detection

- Semantic segmentation

- Image Analysis

- Face Detection

- Optical Character Recognition (OCR), and much more.

Source: Microsoft

Also Check: Object Detection And Tracking In Azure Machine Learning

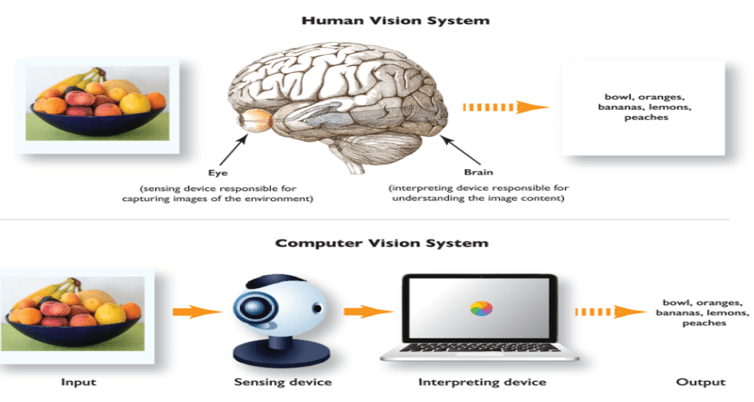

Q.2 How do human vision and computer vision systems process visual data in a similar way?

Ans.

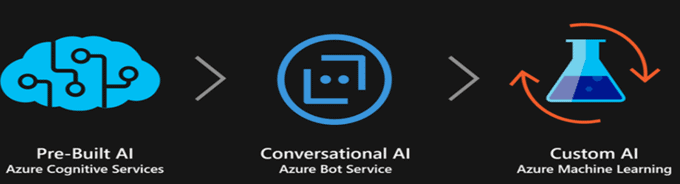

Q.3 What are Cognitive Services?

Ans. In simple terms, Cognitive Services are pre-built AI services deployed in the cloud. They help in building effective and intelligent software applications. Computer Vision is one of the Cognitive Services.

Cognitive Services bring AI within reach of every developer, without requiring machine-learning expertise. All it takes is an API call to embed the ability to see, hear, speak, search, understand, and accelerate decision-making into your apps.

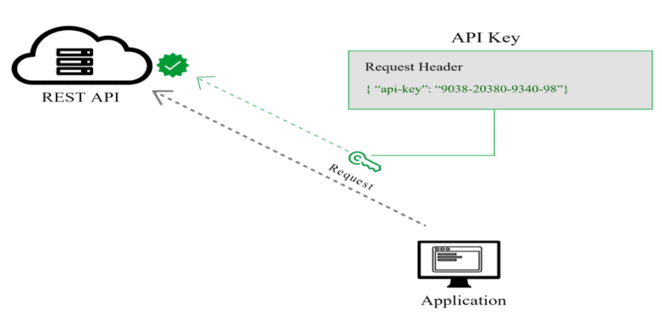

An application consumes a Cognitive Services resource via:

- A REST endpoint (an https:// address)

- An authentication key
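As a rough illustration of how the endpoint and key fit together, the Python sketch below builds (but does not send) an HTTPS request for image tagging. The URL path and API version follow the Computer Vision v3.2 REST API as far as we know, so check them against the current reference before relying on them; the resource name, key, and image URL are placeholders, and `build_analyze_request` is a hypothetical helper.

```python
import json
import urllib.request


def build_analyze_request(endpoint, key, image_url):
    """Build a POST request that asks the service to tag an image by URL."""
    # Path and version are assumptions based on the v3.2 REST API.
    url = endpoint.rstrip("/") + "/vision/v3.2/analyze?visualFeatures=Tags"
    body = json.dumps({"url": image_url}).encode("utf-8")
    headers = {
        # The authentication key travels in this header.
        "Ocp-Apim-Subscription-Key": key,
        "Content-Type": "application/json",
    }
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


if __name__ == "__main__":
    request = build_analyze_request(
        "https://my-resource.cognitiveservices.azure.com",  # placeholder endpoint
        "my-key",  # placeholder key
        "https://example.com/photo.jpg",  # placeholder image
    )
    print(request.get_method(), request.full_url)
    # Sending needs a real resource and key, so it is left commented out:
    # with urllib.request.urlopen(request) as response:
    #     print(json.load(response))
```

The endpoint says where the resource lives, and the key proves that the caller is allowed to use it; both are shown on the resource's page in the Azure portal.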

Pros and Cons of Cognitive Services:

Let’s take a closer look at the advantages of Cognitive Services in Azure.

- Wide range of use cases. Comprehensive domain-specific capabilities allow companies to apply the product to a variety of business purposes.

- Human parity. Microsoft reports that its AI models have reached human parity on specific benchmarks in vision, speech, and language.

- Easy, quick, and flexible deployment. The container-based architecture enables flexible deployment from the cloud.

There are still a couple of challenges:

- Connecting cloud-based apps to internal data sources (a hybrid network and an integration strategy should be in place before development starts).

- Availability (the product is available only in specific regions).

Source: Microsoft

Also Check: Cognitive Services

> Natural language processing (NLP)

Language as a method of communication helps people write, read, and speak. Individuals make plans and decisions in natural language, particularly in words. The current era of artificial intelligence is very keen to determine if individuals can communicate the same way with computers.

To ensure that human beings communicate with computers in their natural language, computer scientists have developed natural language processing (NLP) applications. For computers to understand unstructured and often ambiguous human speech, they require input from NLP applications.

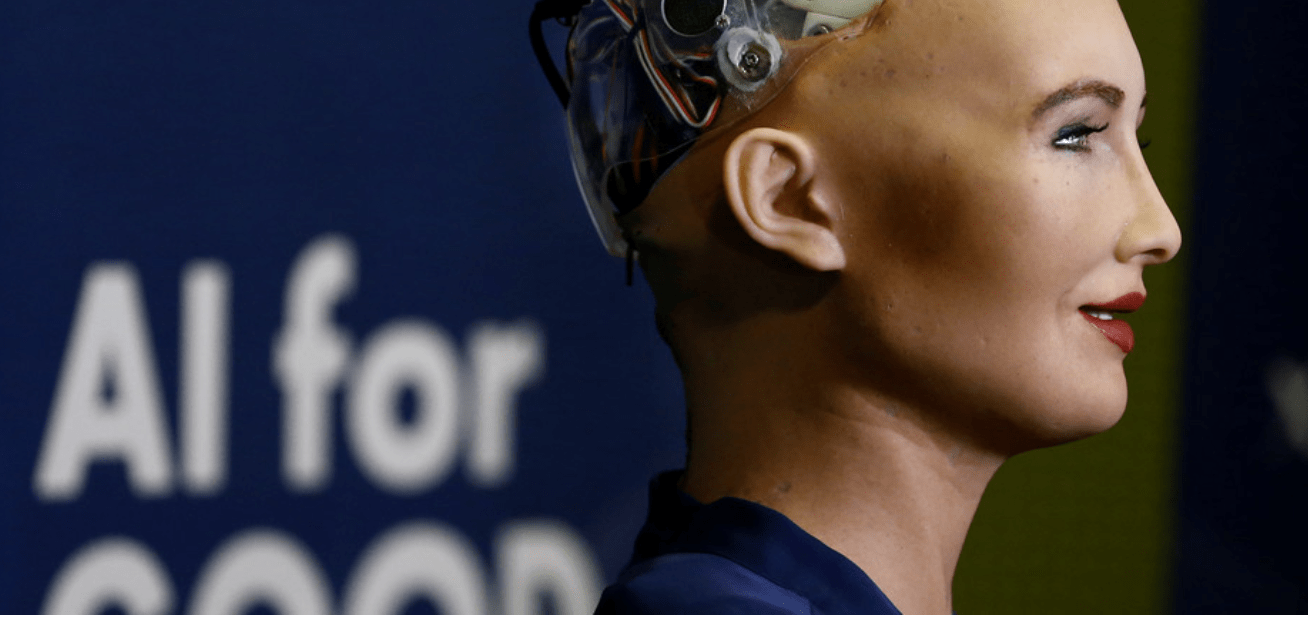

Have you ever wondered how robots such as Sophia or home assistants sound so humanlike? How do they understand you? All of this is because of the magic of Natural Language Processing or NLP. Using NLP you can make machines sound human-like and even ‘understand’ what you’re saying.

Here are some FAQs:

Q.1 What is Natural language processing? What are its advantages and disadvantages?

Ans. Humans communicate with each other using words and text. The way that humans convey information to each other is called natural language. Every day, humans share a large quantity of information with each other in various languages, as speech or text. Computers, on the other hand, cannot interpret this data directly, because they operate on 1s and 0s. Hence, we need a way for computers to understand natural language.

Natural Language Processing (NLP) is the branch of AI that gives machines the ability to read and understand human languages.

Advantages of NLP:

- NLP lets users ask questions and get a direct response within seconds.

- It is very time-efficient.

Disadvantages of NLP:

- NLP systems may miss context.

- NLP results can be unpredictable.

Q.2 What are the applications of Natural language processing?

Ans. Real-life applications of NLP:

- Chatbots: A chatbot is a software application used to conduct an online chat conversation via text or text-to-speech, in lieu of providing direct contact with a live human agent.

- Virtual Assistants: A virtual assistant is a software agent, such as Cortana, Siri, or Alexa, that understands spoken or typed requests in natural language and performs tasks or answers questions for the user.

- Autocorrect: Autocorrection, also known as text replacement, replace-as-you-type, or simply autocorrect, is an automatic data validation function commonly found in word processors and text editing interfaces for smartphones and tablet computers.

- Email Filtering: It is used to detect unwanted e-mails (spam) and keep them out of a user’s inbox.

- Sentiment Analysis: It is used on the web to analyze the attitude, behavior, and emotional state of the sender. It also identifies the mood of the text (happy, sad, angry, etc.).
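To give a feel for what sentiment analysis does, here is a deliberately simple Python sketch that counts positive and negative words. This is a toy, word-list approach chosen for illustration; it is not how Text Analytics or any production service works, and the word lists and the function name `toy_sentiment` are made up.

```python
import re

# Tiny hand-picked word lists; real systems use trained models instead.
POSITIVE_WORDS = {"good", "great", "love", "happy", "excellent"}
NEGATIVE_WORDS = {"bad", "poor", "hate", "sad", "angry", "terrible"}


def toy_sentiment(text):
    """Label text as positive, negative, or neutral by counting known words."""
    words = re.findall(r"[a-z']+", text.lower())
    score = sum(word in POSITIVE_WORDS for word in words)
    score -= sum(word in NEGATIVE_WORDS for word in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


if __name__ == "__main__":
    for sentence in [
        "I love this great product",
        "The service was terrible and I am angry",
        "The parcel arrived on Tuesday",
    ]:
        print(f"{toy_sentiment(sentence):8} | {sentence}")
```

A word-counting approach like this breaks down on negation and sarcasm ("not good" still counts as positive here), which is one reason real services rely on trained language models.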

Q.3 How can Businesses use NLP (Natural language processing)?

Ans. There is a wide variety of applications for NLP. Below are just three ways that companies can use the technology in their business.

Improve user experience:

NLP can be integrated with a website to provide a more user-friendly experience. Features like spell check, autocomplete, and autocorrect in search bars can make it easier for users to find the information they’re looking for, which in turn keeps them from navigating away from your site.
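A search bar's "did you mean" feature can be approximated with fuzzy string matching. The sketch below uses `difflib.get_close_matches` from the Python standard library; the list of known search terms and the function name `suggest` are hypothetical, and a real site would typically use a much larger vocabulary and a dedicated search engine.

```python
import difflib

# Hypothetical list of search terms the site knows about.
KNOWN_TERMS = [
    "computer vision",
    "cognitive services",
    "qna maker",
    "text analytics",
    "chatbot",
]


def suggest(query, max_suggestions=3):
    """Return known terms that closely resemble the (possibly misspelled) query."""
    return difflib.get_close_matches(
        query.lower(), KNOWN_TERMS, n=max_suggestions, cutoff=0.6
    )


if __name__ == "__main__":
    print(suggest("computr vison"))
    print(suggest("xyzzy"))
```

The `cutoff` value controls how similar a term must be before it is suggested; raising it gives fewer but safer suggestions.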

Automate support:

Chatbots are nothing new, but advancements in NLP have increased their usefulness to the point that live agents no longer need to be the first point of communication for some customers. Some features of chatbots include being able to help users navigate support articles and knowledge bases, order products or services, and manage accounts.

Monitor and analyze feedback:

Between social media, reviews, contact forms, support tickets, and other forms of communication, customers are constantly leaving feedback about the product or service. NLP can help to make sense of all that feedback, turning it into actionable insight that can help improve the company.

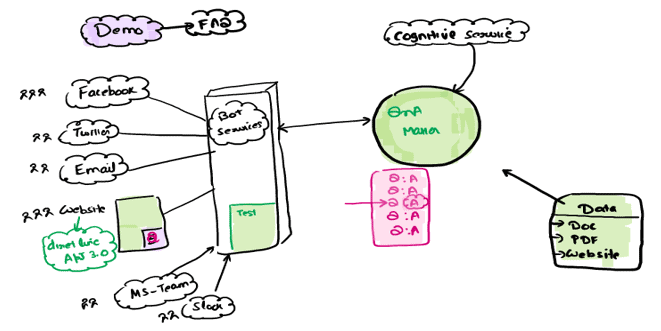

Q.4 What is QnA Maker?

Ans. QnA Maker is a cloud-based Natural Language Processing (NLP) service that allows you to create a natural conversational layer over your data.

QnA Maker is commonly used to build conversational client applications, which include social media applications, chatbots, and speech-enabled desktop applications.

QnA Maker doesn’t store customer data. All customer data (question answers and chat logs) is stored in the region where the customer deploys the dependent service instances.

How QnA Maker works:

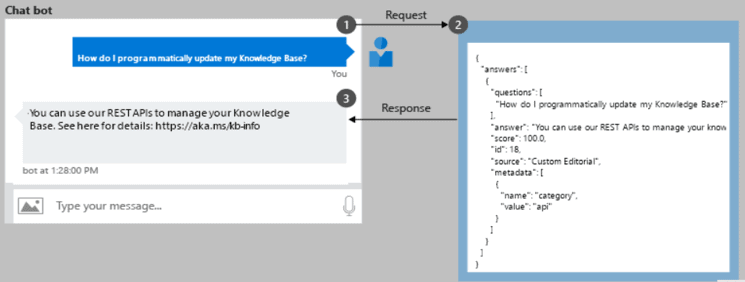

Create a chatbot programmatically

Once a QnA Maker knowledge base is published, a client application sends a question to your knowledge base endpoint and receives the results as a JSON response. A common client application for QnA Maker is a chatbot.
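The request and response cycle described above might look roughly like the following Python sketch. It builds (but does not send) a question request and picks the best answer from a JSON response. The URL pattern and the `EndpointKey` authorization header follow the published QnA Maker runtime API as far as we know; the host, knowledge base ID, key, sample response, and both helper names are placeholders for illustration.

```python
import json
import urllib.request


def build_question_request(host, kb_id, endpoint_key, question):
    """Build a POST request that sends one question to a published knowledge base."""
    url = f"{host.rstrip('/')}/qnamaker/knowledgebases/{kb_id}/generateAnswer"
    body = json.dumps({"question": question}).encode("utf-8")
    headers = {
        "Authorization": f"EndpointKey {endpoint_key}",
        "Content-Type": "application/json",
    }
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


def best_answer(response_text):
    """Return the highest-scoring answer text, or None if there are no answers."""
    answers = json.loads(response_text).get("answers", [])
    if not answers:
        return None
    return max(answers, key=lambda answer: answer["score"])["answer"]


# Hand-written sample in the shape of a generateAnswer response.
SAMPLE_QNA_RESPONSE = json.dumps({
    "answers": [
        {"answer": "AI-900 is the Azure AI Fundamentals exam.", "score": 87.5},
        {"answer": "See the study guide.", "score": 12.0},
    ]
})

if __name__ == "__main__":
    request = build_question_request(
        "https://my-qna.azurewebsites.net",  # placeholder host
        "my-kb-id",  # placeholder knowledge base ID
        "my-endpoint-key",  # placeholder key
        "What is AI-900?",
    )
    print(request.get_method(), request.full_url)
    print(best_answer(SAMPLE_QNA_RESPONSE))
```

A chatbot built on QnA Maker does essentially this for every user message: it forwards the text as the question and replies with the top-scoring answer.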

Source: Microsoft

Also Check: Microsoft Azure Chatbot Using Cognitive and Bot services

Quiz Time (Sample Exam Questions)!

Question. You want to train a model that classifies images of dogs and cats based on a collection of your own digital photographs.

Which Azure service should you use?

a) Azure Bot Service

b) Computer Vision

c) Custom Vision

Correct answer (c)

Explanation: Custom Vision enables you to train an image classification model based on your own images.


