Want an overview of what you need to know about Hadoop security? This post covers why security matters for Hadoop, what Kerberos is, and how it relates to Hadoop.

If you are just starting out with Big Data and Hadoop, we highly recommend going through these posts first:

- Big Data Hadoop Keypoints & Things you must know to Start learning Big Data & Hadoop, check here

- Big Data & Hadoop Overview, Concepts, Architecture, including Hadoop Distributed File System (HDFS), Check here

Introduction to Hadoop Security

Today, the data explosion is a reality of the digital universe, and the amount of data grows every second. Hadoop is built to store and process large amounts of data efficiently and, as it turns out, cheaply compared to other platforms.

As the adoption of Hadoop increases, the volume of data and the types of data handled by Hadoop deployments have also grown. For production deployments, a large amount of this data is generally sensitive or subject to industry regulations and governance controls.

To comply with such regulations, Hadoop must offer strong capabilities to thwart attacks on the data it stores and must ensure proper security at all times. The security landscape for Hadoop is evolving rapidly. However, this progress is not uniform across all Hadoop components, which is why the level of security capability can seem uneven across the Hadoop ecosystem. That is, some components are compatible with stronger security technologies than others.

Why Is Hadoop Security Important?

- Laws governing data privacy: Particularly important for healthcare and finance industries

- Export control regulations for defense information

- Protection of proprietary research data

- Company policies

- Different teams in a company have different needs

- Setting up multiple clusters is a common solution: One cluster may contain protected data, another cluster does not

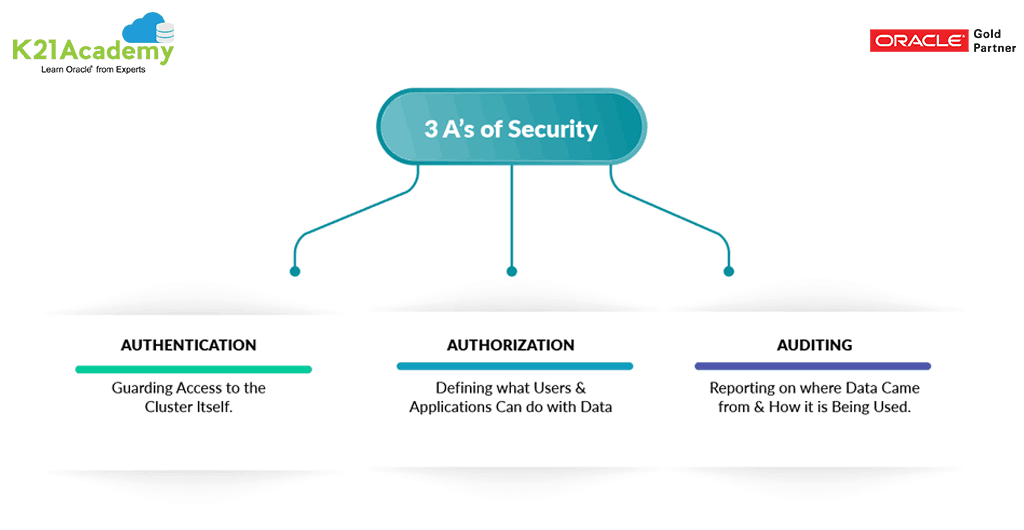

The Three A’s of Security and Data Protection

So what can be done about Hadoop security? In part, security and governance in Hadoop require many of the same approaches seen in the traditional data management world. These include the “Three A’s” of security (authorization, authentication, and auditing), together with data protection.

Authorization: The process of determining which data, types of data, or applications a user is allowed to access.

- Determining whether a participant is allowed to perform an action

- Typically done by checking an access control list
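To make the access control list idea concrete, here is a minimal sketch in Python. The `AccessControlList` class, the path `/data/finance`, and the user names are all hypothetical and exist only for illustration; this is not how HDFS stores its ACLs internally.

```python
from dataclasses import dataclass, field


@dataclass
class AccessControlList:
    """Hypothetical ACL: maps a resource path to the actions each user may perform."""
    entries: dict = field(default_factory=dict)

    def grant(self, path: str, user: str, action: str) -> None:
        # Record that `user` may perform `action` on `path`.
        self.entries.setdefault(path, {}).setdefault(user, set()).add(action)

    def is_allowed(self, path: str, user: str, action: str) -> bool:
        # Deny by default: anything not explicitly granted is refused.
        return action in self.entries.get(path, {}).get(user, set())


acl = AccessControlList()
acl.grant("/data/finance", "alice", "read")

print(acl.is_allowed("/data/finance", "alice", "read"))   # True
print(acl.is_allowed("/data/finance", "alice", "write"))  # False
print(acl.is_allowed("/data/finance", "bob", "read"))     # False
```

The key design choice is the deny-by-default lookup: a user or action that was never granted is refused.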

Authentication: The process of accurately determining the identity of a user attempting to access a Hadoop cluster or application, based on one or more factors.

- Confirming the identity of a participant

- Typically done by checking credentials (username/password)
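As a generic illustration of a credential check, the sketch below verifies a username and password against a salted hash. The `register` and `authenticate` helpers and the in-memory `store` are assumptions made for this example; a secured Hadoop cluster relies on Kerberos rather than a password table like this one.

```python
import hashlib
import hmac
import os


def hash_password(password: str, salt: bytes) -> bytes:
    # Derive a slow, salted hash so stored values do not reveal the password.
    return hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, 100_000)


def register(store: dict, username: str, password: str) -> None:
    # Store only the salt and the derived hash, never the plain password.
    salt = os.urandom(16)
    store[username] = (salt, hash_password(password, salt))


def authenticate(store: dict, username: str, password: str) -> bool:
    record = store.get(username)
    if record is None:
        return False  # unknown user
    salt, expected = record
    # Constant-time comparison avoids leaking information through timing.
    return hmac.compare_digest(expected, hash_password(password, salt))


store = {}
register(store, "alice", "s3cret")
print(authenticate(store, "alice", "s3cret"))  # True
print(authenticate(store, "alice", "wrong"))   # False
```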

Auditing: It is the process of recording and reporting what an authenticated, authorized user did once granted access to the cluster, including what data was accessed/changed/added and what analysis occurred.
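An audit trail is essentially a stream of structured records. The sketch below shows one possible shape for such a record; the `audit_record` function and its field names are hypothetical and do not match the log format of any particular Hadoop component.

```python
import json
from datetime import datetime, timezone


def audit_record(user: str, action: str, resource: str, allowed: bool) -> str:
    # Capture who did what, to which resource, when, and whether it was permitted.
    event = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "action": action,
        "resource": resource,
        "allowed": allowed,
    }
    return json.dumps(event, sort_keys=True)


print(audit_record("alice", "read", "/data/finance/q1.csv", True))
```

Writing one JSON object per event keeps the trail easy to search and to ship to a reporting tool later.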

Data Protection: Data protection refers to the use of techniques such as encryption and data masking to prevent sensitive data from being accessed by unauthorized users and applications.
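Data masking can be as simple as hiding all but the last few characters of a sensitive value. The `mask_value` helper below is a hypothetical example of that idea, not a function from any Hadoop library.

```python
def mask_value(value: str, visible: int = 4, mask_char: str = "*") -> str:
    # Values too short to partially reveal are masked completely.
    if visible <= 0 or len(value) <= visible:
        return mask_char * len(value)
    # Keep only the last `visible` characters readable.
    return mask_char * (len(value) - visible) + value[-visible:]


print(mask_value("4111111111111111"))  # ************1111
print(mask_value("abc"))               # ***
```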

Types of Hadoop Security

- HDFS file ownership and permissions

- Enhanced security with Kerberos

- Encrypted HDFS data transfers

- HDFS data at rest encryption

- Encrypted HTTP traffic
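Most of these security types are switched on through Hadoop configuration properties. The sketch below renders a handful of them into Hadoop's XML configuration layout. The property names are standard Hadoop settings, but the chosen values, the `to_hadoop_xml` helper, and the decision to put everything in one document are assumptions for illustration. In a real cluster these settings are split between `core-site.xml` and `hdfs-site.xml`, and Kerberos and at-rest encryption need further setup (principals, keytabs, a key management service) that is not shown here.

```python
import xml.etree.ElementTree as ET

# Illustrative values only; check your distribution's documentation before use.
SECURITY_PROPERTIES = {
    "dfs.permissions.enabled": "true",             # HDFS file ownership and permissions
    "hadoop.security.authentication": "kerberos",  # Kerberos authentication
    "hadoop.security.authorization": "true",       # service-level authorization
    "hadoop.rpc.protection": "privacy",            # encrypted RPC traffic
    "dfs.encrypt.data.transfer": "true",           # encrypted HDFS data transfers
    "dfs.http.policy": "HTTPS_ONLY",               # encrypted HTTP traffic
}


def to_hadoop_xml(properties: dict) -> str:
    # Build <configuration><property><name/><value/></property>...</configuration>.
    root = ET.Element("configuration")
    for name, value in properties.items():
        prop = ET.SubElement(root, "property")
        ET.SubElement(prop, "name").text = name
        ET.SubElement(prop, "value").text = value
    return ET.tostring(root, encoding="unicode")


print(to_hadoop_xml(SECURITY_PROPERTIES))
```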

What Is Kerberos and How Does It Work?

The Role of Kerberos in CDH5

- Hadoop daemons leverage Kerberos to perform user authentication on all remote procedure calls (RPCs)

- Group resolution is performed on master nodes to guarantee group membership is not manipulated

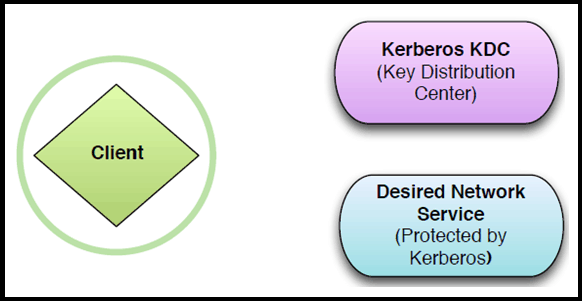

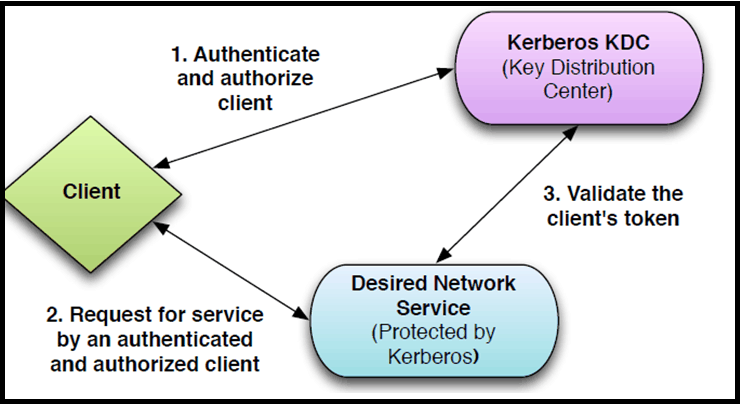

Kerberos Exchange Participants

- Kerberos involves messages exchanged among three parties:

  - The client

  - The server providing the desired network service

  - The Kerberos Key Distribution Center (KDC)

General Kerberos Concepts

- Kerberos is a standard network security protocol

- Currently at version 5 (RFC 4120)

- Services protected by Kerberos don’t directly authenticate the client; instead, they trust a ticket that the KDC issues to the client
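The toy model below illustrates that last point: the service never sees the client's credentials and only checks a ticket that the KDC has sealed with a key the service shares with it. This is a deliberately simplified sketch and not the real protocol. `ToyKDC`, `ToyService`, the key, and the principal names are all hypothetical, the ticket is only signed with an HMAC rather than encrypted, and real Kerberos adds ticket-granting tickets, session keys, and authenticators.

```python
import hashlib
import hmac
import json
import time
from typing import Optional


class ToyKDC:
    """Toy stand-in for a KDC: knows the secret key of each registered service."""

    def __init__(self) -> None:
        self.service_keys = {}

    def register_service(self, service: str, key: bytes) -> None:
        self.service_keys[service] = key

    def issue_ticket(self, client: str, service: str, lifetime_s: int = 300) -> dict:
        # A real KDC authenticates the client first; this toy skips that step.
        body = json.dumps(
            {"client": client, "service": service, "expires": time.time() + lifetime_s},
            sort_keys=True,
        )
        tag = hmac.new(self.service_keys[service], body.encode(), hashlib.sha256).hexdigest()
        return {"body": body, "tag": tag}


class ToyService:
    """Toy service: trusts any unexpired ticket sealed with its own key."""

    def __init__(self, name: str, key: bytes) -> None:
        self.name = name
        self.key = key

    def accept(self, ticket: dict) -> Optional[str]:
        expected = hmac.new(self.key, ticket["body"].encode(), hashlib.sha256).hexdigest()
        if not hmac.compare_digest(expected, ticket["tag"]):
            return None  # ticket was not sealed by the KDC
        claims = json.loads(ticket["body"])
        if claims["service"] != self.name or claims["expires"] < time.time():
            return None  # wrong service or expired ticket
        return claims["client"]


key = b"hypothetical-shared-service-key"
kdc = ToyKDC()
kdc.register_service("hdfs/namenode", key)
namenode = ToyService("hdfs/namenode", key)

ticket = kdc.issue_ticket("alice", "hdfs/namenode")
print(namenode.accept(ticket))  # alice
```

Note that the service validates the ticket locally with its own key and does not need to contact the KDC for each request.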

Essential Points

- Kerberos is the primary technology for enabling authentication security on the cluster

- Manual configuration requires many steps

- We recommend using Cloudera Manager to enable Kerberos

- Encryption can be enabled at the filesystem level, HDFS level, and the network level

- Sentry enables security for Hive and Impala

You will learn all of this and dive deep into each concept related to Big Data and Hadoop once you enroll in our Big Data Hadoop Administration Training.

Related Further Readings

- Hadoop Administration: Architecture & Overview

- Roadmap for Learning Hadoop

- Hadoop Distribution: Cloudera vs Hortonworks

- Apache Spark Vs Hadoop MapReduce

- BigData Hadoop: Introduction to Apache Spark

- What is Big Data from Oracle

If you have not yet looked at our Big Data Hadoop Administration Workshop and want to see what we cover in it, check here, along with the Step By Step Hands-On Activity Guide we cover in the training.

